Achieving Cross-Session BCI Consistency: Methods for Robust Neural Decoding in Clinical and Research Applications

Abstract

Brain-Computer Interfaces (BCIs) hold transformative potential for clinical diagnostics, neurorehabilitation, and cognitive monitoring. However, a significant barrier to their real-world adoption is cross-session variability—the degradation of classification performance when models are applied to EEG data recorded from the same user on different days. This article provides a comprehensive analysis for researchers and biomedical professionals on methods designed to achieve cross-session consistency. We explore the foundational causes of signal non-stationarity, detail state-of-the-art methodological solutions from feature engineering to deep domain adaptation, address key implementation challenges, and present a comparative validation of current approaches. By synthesizing insights from recent literature, this review aims to equip developers with the knowledge to build more robust, generalizable, and clinically viable BCI systems.

The Cross-Session Challenge: Understanding the Roots of BCI Variability

Defining Cross-Session Variability in EEG-based BCIs

Frequently Asked Questions (FAQs)

Q1: What is cross-session variability in EEG-based BCIs? Cross-session variability refers to the fluctuations in EEG signal characteristics recorded from the same individual across different recording sessions. This variability poses a critical challenge for Brain-Computer Interface systems, as it often results in significantly reduced classification robustness and performance degradation when models trained on data from one session are applied to data from subsequent sessions [1] [2]. This phenomenon necessitates daily calibration phases before users can effectively operate BCI systems [2].

Q2: What are the primary factors causing cross-session variability? The main contributing factors include:

- Inaccurate Electrode Positioning: Slight shifts in EEG cap placement between sessions cause the same numbered electrodes to monitor different brain regions [2].

- Psychological State Fluctuations: Factors such as task focus, fatigue, and motivation levels vary across trials and sessions, significantly altering EEG characteristics [2] [3].

- Trial-to-Trial Noise Variability: Changes in internal physiological states (e.g., eye blinks, muscle movement) and external environmental conditions (e.g., electromagnetic interference) affect signal stability [2].

Q3: How can researchers mitigate the impact of electrode shifts? The Adaptive Channel Mixing Layer (ACML) is a plug-and-play preprocessing module designed to compensate for electrode misalignments. It applies a learnable linear transformation to input EEG signals, dynamically re-weighting channels based on inter-channel correlations to enhance resilience to spatial variability. This method has demonstrated improvements in classification accuracy (up to 1.4%) and kappa scores (up to 0.018) without requiring task-specific hyperparameter tuning [2].

Q4: What hybrid feature framework improves cross-session classification? A robust framework integrates channel-wise spectral features (e.g., from Short-Time Fourier Transform) with brain connectivity features (functional and effective connectivity). This is combined with a two-stage feature selection strategy (correlation-based filtering and random forest ranking) to enhance feature relevance. Using an SVM classifier, this approach achieved high cross-session classification accuracies of 86.27% and 94.01% on two different datasets [1].

Q5: Why are connectivity features important for cross-session robustness? While spectral features capture activity within individual channels, connectivity features (such as Phase Locking Value - PLV) model inter-regional interactions in the brain. These connectivity patterns can be more stable across sessions than isolated channel features, providing a more generalized representation of brain activity that improves model generalizability under realistic, varying conditions [1].
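To make the PLV idea concrete, here is a minimal NumPy sketch that computes a PLV matrix for one epoch. It is an illustration rather than the pipeline used in [1]: the function names (`analytic_signal`, `plv_matrix`) and the synthetic signals are assumptions, and the epoch is assumed to be band-pass filtered beforehand.

```python
import numpy as np

def analytic_signal(x):
    """Analytic signal along the last axis, built with the FFT (Hilbert transform)."""
    n = x.shape[-1]
    spectrum = np.fft.fft(x, axis=-1)
    h = np.zeros(n)
    h[0] = 1.0
    if n % 2 == 0:
        h[n // 2] = 1.0
        h[1:n // 2] = 2.0
    else:
        h[1:(n + 1) // 2] = 2.0
    return np.fft.ifft(spectrum * h, axis=-1)

def plv_matrix(epoch):
    """Phase Locking Value between all channel pairs.

    epoch: array of shape (n_channels, n_samples).
    Returns a symmetric (n_channels, n_channels) matrix with values in [0, 1].
    """
    phase = np.angle(analytic_signal(epoch))
    unit = np.exp(1j * phase)                      # unit phasor per channel and sample
    # Entry (i, j) averages exp(1j * (phase_i - phase_j)) over time.
    return np.abs(unit @ unit.conj().T) / epoch.shape[-1]

# Synthetic check: two 10 Hz channels with a fixed phase lag, plus one noise channel.
fs = 250
t = np.arange(2 * fs) / fs
rng = np.random.default_rng(0)
epoch = np.vstack([
    np.sin(2 * np.pi * 10 * t),
    np.sin(2 * np.pi * 10 * t + 0.8),
    rng.standard_normal(t.size),
])
plv = plv_matrix(epoch)
```

The two phase-locked channels yield a PLV close to 1 regardless of their amplitudes, while the noise channel yields a low value, which is why PLV is often described as insensitive to amplitude changes between sessions.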

Troubleshooting Common Experimental Issues

Issue 1: Performance Degradation in Cross-Session Validation

- Symptoms: A model demonstrates high accuracy within a single session but suffers a significant performance drop when validated on data from a new session.

- Solution: Implement a hybrid feature learning framework. Combine spectral, temporal, and brain connectivity features to create a more comprehensive representation of the neural signal. Follow this with a stringent, two-stage feature selection process to reduce dimensionality and isolate the most stable features across sessions [1].

Issue 2: Inconsistent Signals Due to Electrode Placement

- Symptoms: Signals appear distorted or noisy; classification is inconsistent despite using the same experimental paradigm.

- Solution: Integrate a spatial alignment module like the Adaptive Channel Mixing Layer (ACML) into your processing pipeline. The ACML learns to adaptively mix input channels to compensate for spatial displacement, improving signal consistency [2].

Issue 3: Low Participant Engagement or Motivation

- Symptoms: Poor data quality due to participant fatigue, anxiety, or lack of focus.

- Solution:

- Incorporate Motivating Content: Use stimuli based on the participant's personal interests (e.g., favorite topics, cartoon characters) to maintain engagement [3].

- Involve Caregivers: For children or vulnerable populations, have a trusted caregiver present to help manage expectations, provide comfort, and interpret non-verbal cues [3].

- Graded Training: Start with simple tasks (e.g., a single picture with empty quadrants) and gradually progress to the full experimental paradigm to build understanding and confidence [3].

Quantitative Performance of Cross-Session Methods

Table 1: Comparative Performance of EEG Classification Methods

| Method | Key Features | Reported Cross-Session/Subject Accuracy | Key Advantage |
|---|---|---|---|
| Hybrid Feature Learning [1] | STFT + Connectivity Features, Two-stage Feature Selection, SVM | 86.27%, 94.01% (Inter-subject) | Integrates diverse, robust feature types for improved generalizability |
| Hybrid Deep Learning (CNN-LSTM) [4] | Spatial + Temporal Feature Learning | 96.06% (Motor Imagery task) | Powerful hierarchical feature learning from raw data |
| Traditional Machine Learning (Random Forest) [4] | Hand-crafted Features (e.g., PSD) | 91% (Motor Imagery task) | Computational efficiency and strong baseline performance |
| ACML Module [2] | Learnable Spatial Transformation | Accuracy increase up to 1.4% | Explicitly mitigates electrode shift; plug-and-play |

Table 2: Essential Research Reagents & Computational Tools

| Item / Tool Name | Function / Purpose | Application Context |
|---|---|---|
| g.USBamp Amplifier [3] | High-quality signal acquisition and digitization | Multi-channel EEG recording in lab settings |
| Electro-Cap International EEG Cap [3] | Provides stable 32-channel electrode positioning | Precise sensor placement for reproducible experiments |
| Wearable Sensing VR300 (Dry Electrodes) [3] | EEG recording without conductive gel | Faster setup, suitable for home or clinical environments |
| Riemannian Geometry [4] [2] | Aligns covariance matrices of EEG data in a statistical manifold | Transfer learning to reduce inter-session variability |
| Wavelet Transform [4] | Extracts high-resolution time-frequency features | Feature extraction for non-stationary EEG signals |
| Python (with scikit-learn, MNE, PyRiemann) | Provides libraries for signal processing, ML, and domain adaptation | End-to-end pipeline development and analysis |

Detailed Experimental Protocols

Protocol 1: Implementing the Hybrid Feature Learning Framework

This protocol is designed for robust mental attention state classification across sessions [1].

Data Acquisition & Preprocessing:

- Record EEG data from participants performing defined mental tasks (e.g., focused, unfocused, drowsy).

- Apply band-pass filtering (e.g., 0.5-40 Hz) and artifact removal (e.g., using ICA) to clean the signals.

Multi-Domain Feature Extraction:

- Spectral Features: Compute the Short-Time Fourier Transform (STFT) on epoched data to get channel-wise power in standard frequency bands (Delta, Theta, Alpha, Beta, Gamma).

- Connectivity Features: Calculate functional connectivity metrics, such as Phase Locking Value (PLV) or coherence, between all channel pairs to capture inter-regional brain interactions.
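The spectral step above can be sketched with plain NumPy as follows. This is a hedged illustration, not the implementation from [1]: the band edges in `BANDS`, the 2-second Hann window, and the 50% overlap are assumptions chosen for the example, and `stft_band_power` is a hypothetical helper name.

```python
import numpy as np

# Assumed band edges in Hz; the protocol names the bands but not their limits.
BANDS = {"delta": (0.5, 4), "theta": (4, 8), "alpha": (8, 13),
         "beta": (13, 30), "gamma": (30, 40)}

def stft_band_power(epoch, fs, win_sec=2.0, overlap=0.5):
    """Mean power per channel and band from a Hann-windowed STFT.

    epoch: (n_channels, n_samples). Returns (n_channels, n_bands).
    """
    n_win = int(win_sec * fs)
    step = int(n_win * (1 - overlap))
    window = np.hanning(n_win)
    freqs = np.fft.rfftfreq(n_win, d=1 / fs)
    starts = range(0, epoch.shape[-1] - n_win + 1, step)
    # Power spectrum of each windowed segment: (n_segments, n_channels, n_freqs)
    power = np.stack([
        np.abs(np.fft.rfft(epoch[:, s:s + n_win] * window, axis=-1)) ** 2
        for s in starts
    ])
    mean_power = power.mean(axis=0)
    # Sum the averaged power over the frequency bins that fall in each band.
    return np.column_stack([
        mean_power[:, (freqs >= lo) & (freqs < hi)].sum(axis=-1)
        for lo, hi in BANDS.values()
    ])

fs = 250
t = np.arange(6 * fs) / fs
epoch = np.vstack([np.sin(2 * np.pi * 10 * t),    # alpha-dominated channel
                   np.sin(2 * np.pi * 20 * t)])   # beta-dominated channel
features = stft_band_power(epoch, fs)             # shape (2 channels, 5 bands)
```

Each row of `features` is one channel's band-power vector; concatenating these rows with the upper triangle of a connectivity matrix gives one possible hybrid feature vector per epoch.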

Two-Stage Feature Selection:

- Stage 1 (Filtering): Remove features with very low variance or high inter-correlation to reduce redundancy.

- Stage 2 (Embedded Ranking): Use a Random Forest classifier to rank the remaining features by importance and select the top N features for the final model.

Classification & Validation:

- Train a Support Vector Machine (SVM) classifier on the selected features from one session.

- Validate the model's performance on data from a separate session held out from training (cross-session validation).
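The selection and validation steps of this protocol can be sketched with scikit-learn as below. It is a minimal illustration under stated assumptions, not the code from [1]: the synthetic two-session data, the 0.95 correlation threshold, the choice of N = 5, and the helper names (`correlation_filter`, `rank_with_forest`, `make_session`) are all hypothetical. The point it illustrates is that feature selection and scaling are fitted on the training session only, and the later session is used purely for evaluation.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

def correlation_filter(X, var_tol=1e-8, corr_thresh=0.95):
    """Stage 1: drop near-constant features and one of each highly correlated pair."""
    keep = [j for j in range(X.shape[1]) if X[:, j].var() > var_tol]
    corr = np.abs(np.corrcoef(X[:, keep], rowvar=False))
    selected_pos = []
    for pos in range(len(keep)):
        if all(corr[pos, q] < corr_thresh for q in selected_pos):
            selected_pos.append(pos)
    return np.array(keep)[selected_pos]

def rank_with_forest(X, y, top_n, seed=0):
    """Stage 2: indices of the top_n features by Random Forest importance."""
    forest = RandomForestClassifier(n_estimators=200, random_state=seed).fit(X, y)
    return np.argsort(forest.feature_importances_)[::-1][:top_n]

# Synthetic two-session data (hypothetical): 5 informative features, 1 duplicate, noise.
rng = np.random.default_rng(0)

def make_session(n, shift):
    y = rng.integers(0, 2, n)
    X = rng.standard_normal((n, 20)) + shift      # session-specific offset
    X[:, :5] += 1.5 * y[:, None]                  # informative features
    X[:, 5] = X[:, 0]                             # redundant copy of feature 0
    return X, y

X_a, y_a = make_session(200, shift=0.0)           # training session
X_b, y_b = make_session(200, shift=0.3)           # later, shifted session

stage1 = correlation_filter(X_a)                  # fitted on the training session only
stage2 = stage1[rank_with_forest(X_a[:, stage1], y_a, top_n=5)]
model = make_pipeline(StandardScaler(), SVC(kernel="rbf")).fit(X_a[:, stage2], y_a)
cross_session_acc = model.score(X_b[:, stage2], y_b)
```

Fitting the filter, the ranking, and the scaler on the first session avoids leaking information from the held-out session into the model, which would otherwise inflate the reported cross-session accuracy.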

Protocol 2: Integrating the ACML for Electrode Shift Compensation

This protocol details how to add the ACML to a neural network to improve robustness [2].

Model Architecture:

- Prepend the ACML module to any existing deep learning architecture (e.g., CNN, LSTM, or hybrid models).

ACML Forward Pass:

- Let the input EEG data be \( X \in \mathbb{R}^{B \times T \times C} \), where \( B \) is the batch size, \( T \) is the number of time steps, and \( C \) is the number of channels.

- The ACML performs two key operations:

- Channel Mixing: \( M = XW \), where \( W \in \mathbb{R}^{C \times C} \) is a trainable mixing weight matrix.

- Adaptive Control: \( Y = X + M \odot c \), where \( c \in \mathbb{R}^{C} \) is a trainable control weight vector and \( \odot \) denotes element-wise multiplication, with \( c \) broadcast across the batch and time dimensions.

- The output \( Y \) is the "corrected" signal, which is then passed to the subsequent layers of the main network.

Training:

- Train the entire network (ACML + main model) end-to-end on source session data. The ACML will learn to adjust for spatial variability as part of the optimization process.
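The forward pass described in this protocol can be sketched in NumPy as follows. This only illustrates the two equations; it is not the reference implementation from [2]. The class name `ACMLSketch` and the initialisation (small random `W`, zero `c`) are assumptions, and in practice `W` and `c` would be trainable parameters in a deep learning framework, updated by backpropagation together with the main network.

```python
import numpy as np

class ACMLSketch:
    """NumPy forward pass of the channel-mixing step: Y = X + (X W) * c."""

    def __init__(self, n_channels, seed=0):
        rng = np.random.default_rng(seed)
        # Assumed initialisation (not given in the text): small random mixing
        # weights and a zero control vector, so the layer starts as an identity map.
        self.W = 0.01 * rng.standard_normal((n_channels, n_channels))
        self.c = np.zeros(n_channels)

    def forward(self, X):
        """X has shape (B, T, C); the output has the same shape."""
        M = X @ self.W              # channel mixing, applied at every time step
        return X + M * self.c       # c broadcasts over batch and time

layer = ACMLSketch(n_channels=22)
X = np.random.default_rng(1).standard_normal((4, 250, 22))   # (B, T, C)
Y_identity = layer.forward(X)        # equals X while c is all zeros

layer.c[:] = 1.0                     # pretend training moved c away from zero
Y_mixed = layer.forward(X)           # equals X @ (I + W) when c is all ones
```

Because the control vector scales the mixed signal per output channel, the layer can leave well-placed channels nearly untouched while borrowing information from neighbours for channels affected by displacement.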

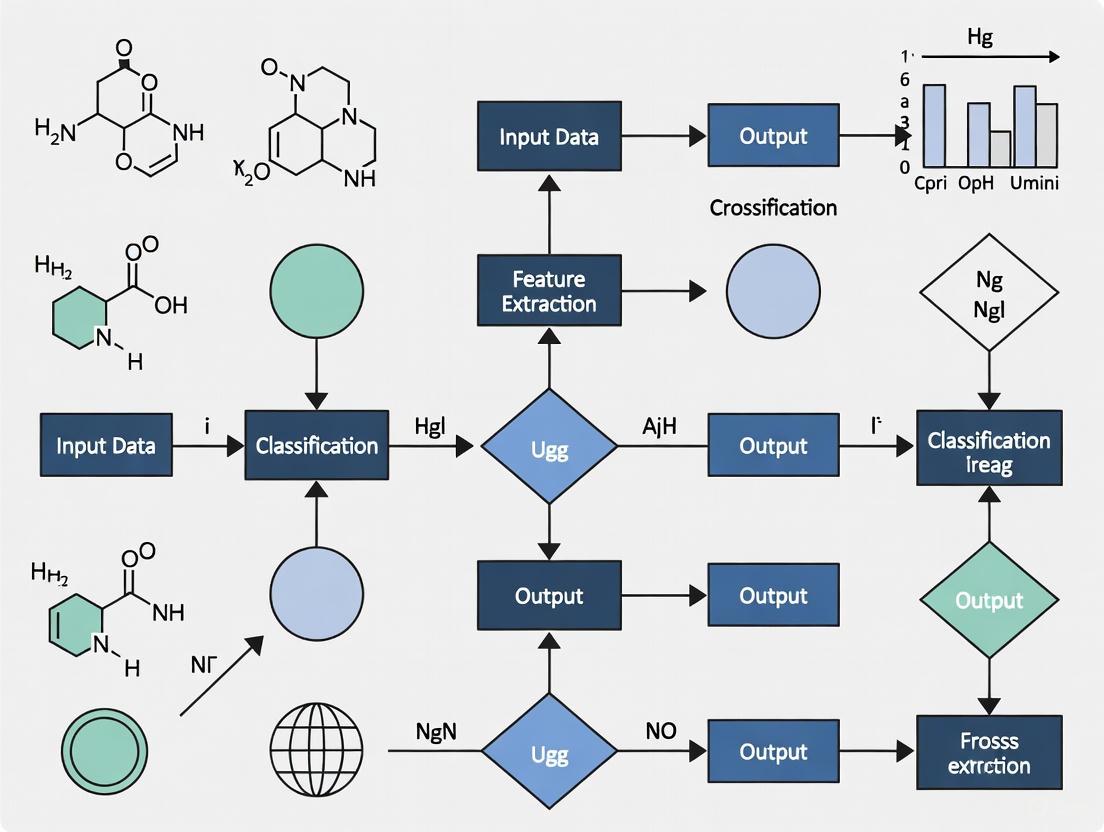

Workflow Visualization

Diagram 1: Cross-Session BCI Robustness Workflow

Diagram 2: Hybrid Feature Learning Pipeline

The Impact of Non-Stationary Neural Signals on Model Performance

Non-stationarity in neural signals refers to the statistical changes in electroencephalography (EEG) data over time, which presents a fundamental challenge for Brain-Computer Interface (BCI) systems. These signals exhibit inherent variability due to factors including neural plasticity, changes in cognitive state, electrode impedance shifts, and environmental artifacts. In cross-session BCI classification, this non-stationarity manifests as significant performance degradation when models trained on historical data fail to generalize to new sessions. Research indicates that non-stationarity can reduce classification accuracy by 10-30% in cross-session scenarios, substantially impeding the clinical deployment of reliable BCI systems [5] [6]. Addressing this challenge is crucial for developing consistent neurorehabilitation technologies and robust neural decoding pipelines for drug development research.

Troubleshooting Guide: Frequently Asked Questions

FAQ 1: Why does my model performance degrade significantly when testing on data from a different session?

Performance degradation across sessions occurs primarily due to domain shift in the data distribution. Non-stationary neural signals cause the statistical properties of EEG features to change between recording sessions, violating the fundamental assumption of independent and identically distributed data in machine learning.

Root Causes: Several factors contribute to this domain shift:

- Neural Plasticity: The brain's adaptive reorganization alters signal patterns over time [7].

- Electrode Placement Variability: Slight differences in cap placement affect signal acquisition [8].

- Changing Cognitive States: Variations in user attention, fatigue, or motivation between sessions impact signal characteristics [9].

- Environmental Artifacts: Differences in electromagnetic interference or ambient noise introduce session-specific distortions [6].

Diagnostic Checklist:

- Confirm consistent electrode impedance levels (<5 kΩ) across sessions [8]

- Verify identical experimental protocols and timing parameters

- Analyze feature distribution differences using t-SNE visualization

- Check for increased variance in session-specific data

FAQ 2: What preprocessing techniques effectively mitigate non-stationarity effects?

Advanced preprocessing pipelines can significantly reduce non-stationarity by isolating neural components from noise and artifacts.

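Spatial Filtering: Use Common Spatial Patterns (CSP), which learns spatial filters that maximize the variance ratio between two classes, or Riemannian geometry methods, which operate directly on trial covariance matrices, to improve the signal-to-noise ratio and class separability [4] [5].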

Artifact Removal: Implement Independent Component Analysis (ICA) or Artifact Subspace Reconstruction (ASR) to identify and remove ocular, cardiac, and muscular artifacts [10] [11].

Domain-Invariant Preprocessing: Align the spatial covariance matrices of each session in Euclidean space before training, so that distribution discrepancies between source and target domains are reduced at the input stage [5].
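One common reading of covariance alignment in Euclidean space is to whiten every trial of a session by the inverse square root of that session's mean covariance, so that each session ends up with an identity mean covariance. The sketch below follows that reading; whether it matches the exact procedure in [5] is an assumption, and `euclidean_alignment` and the synthetic session are hypothetical.

```python
import numpy as np

def euclidean_alignment(trials):
    """Whiten a session's trials by the inverse square root of their mean covariance.

    trials: (n_trials, n_channels, n_samples). Returns an array of the same shape.
    """
    covs = np.einsum("nct,ndt->ncd", trials, trials) / trials.shape[-1]
    mean_cov = covs.mean(axis=0)
    eigvals, eigvecs = np.linalg.eigh(mean_cov)
    inv_sqrt = eigvecs @ np.diag(eigvals ** -0.5) @ eigvecs.T
    return np.einsum("cd,ndt->nct", inv_sqrt, trials)

rng = np.random.default_rng(0)
mixing = rng.standard_normal((8, 8))                   # session-specific channel mixing
session = np.einsum("cd,ndt->nct", mixing, rng.standard_normal((40, 8, 500)))
aligned = euclidean_alignment(session)

aligned_covs = np.einsum("nct,ndt->ncd", aligned, aligned) / aligned.shape[-1]
mean_aligned_cov = aligned_covs.mean(axis=0)           # close to the identity matrix
```

Applying the same function separately to the source and target sessions needs no labels, which makes it usable on a new session before any calibration trials have been annotated.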

Diagram: Preprocessing Workflow for Mitigating Non-Stationarity

FAQ 3: Which machine learning approaches specifically address cross-session non-stationarity?

Both traditional and modern machine learning approaches have been developed specifically to combat non-stationarity in neural signals.

Domain Adaptation Frameworks: Siamese Deep Domain Adaptation (SDDA) incorporates Maximum Mean Discrepancy (MMD) loss to align feature distributions across sessions in Reproducing Kernel Hilbert Space, achieving 10.49% accuracy improvements in cross-session classification [5].

Hybrid Deep Learning Models: Combined CNN-LSTM architectures leverage spatial feature extraction from CNNs with temporal dependency modeling from LSTMs, achieving up to 96.06% accuracy in motor imagery classification [4].

Transfer Learning: Pre-trained models fine-tuned with session-specific data adapt general features to individual variations, with studies showing <1% accuracy loss even with reduced feature sets [11].

Brain Foundation Models (BFMs): Large-scale models pre-trained on diverse neural datasets enable few-shot generalization across sessions and participants through transfer learning [12].

FAQ 4: How can I reduce the number of electrodes without compromising cross-session performance?

Electrode reduction is achievable through strategic channel selection and signal prediction techniques.

Signal Prediction Methods: Elastic Net regression can predict full-channel (22 channels) EEG signals from a reduced set (8 central channels), maintaining 78.16% average accuracy in motor imagery classification [8].

Feature Selection Algorithms: Implement correlation-based feature selection or Random Forest ranking to identify the most informative channels, with research showing minimal accuracy loss (<1%) when using only 10 key features [11].

Channel Attention Mechanisms: Modern architectures like Multiscale Fusion enhanced Spiking Neural Networks (MFSNN) automatically weight channel importance, improving robustness with fewer electrodes [7].
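As a rough illustration of the signal prediction idea in this answer, the sketch below fits a multi-output Elastic Net that predicts 14 unrecorded channels from 8 recorded ones. The data are synthetic linear mixtures, the channel indices are stand-ins rather than real central electrodes, and the `alpha` and `l1_ratio` values are assumptions; none of this reproduces the setup or results of [8].

```python
import numpy as np
from sklearn.linear_model import ElasticNet

rng = np.random.default_rng(0)
n_samples, n_sources, n_full, n_reduced = 2000, 6, 22, 8

# Hypothetical data: 22 channels that are linear mixtures of a few latent sources.
sources = rng.standard_normal((n_samples, n_sources))
mixing = rng.standard_normal((n_sources, n_full))
eeg = sources @ mixing + 0.05 * rng.standard_normal((n_samples, n_full))

reduced_idx = np.arange(n_reduced)                 # stand-in for the 8 retained channels
missing_idx = np.arange(n_reduced, n_full)         # the 14 channels to be predicted

train, test = slice(0, 1500), slice(1500, None)
model = ElasticNet(alpha=0.01, l1_ratio=0.5, max_iter=5000)
model.fit(eeg[train][:, reduced_idx], eeg[train][:, missing_idx])

predicted = model.predict(eeg[test][:, reduced_idx])
residual = eeg[test][:, missing_idx] - predicted
# Root-mean-square error normalised by the spread of the true signals.
nrmse = np.sqrt(np.mean(residual ** 2)) / np.std(eeg[test][:, missing_idx])
```

The predicted channels can then be concatenated with the recorded ones and passed to a classifier trained on the full montage, so the decoder itself does not need to change when fewer electrodes are used.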

Quantitative Comparison of Methodologies

Table 1: Performance Comparison of Cross-Session Classification Methods

| Method Category | Specific Technique | Reported Accuracy | Non-Stationarity Handling | Computational Efficiency |
|---|---|---|---|---|
| Traditional ML | Random Forest | 91.00% [4] | Moderate | High |
| Deep Learning | CNN-LSTM Hybrid | 96.06% [4] | High | Moderate |
| Domain Adaptation | Siamese DDA (SDDA) | +10.49% improvement [5] | Very High | Moderate |
| Signal Reconstruction | RBF Network with PSO | NRMSE: 0.0671 [10] | High | High |
| Electrode Reduction | Elastic Net Prediction | 78.16% [8] | Moderate | High |

Table 2: Feature Engineering Techniques for Non-Stationarity Mitigation

| Feature Type | Extraction Method | Advantages for Non-Stationarity | Implementation Complexity |
|---|---|---|---|
| Time-Frequency | Wavelet Transform | Captures transient signal dynamics | Moderate |
| Spatial | Riemannian Geometry | Invariant to session-specific noise | High |
| Connectivity | Functional Connectivity (PLV) | Robust to amplitude variations | Moderate |
| Multimodal | STFT + Connectivity Features | Enhances cross-session generalization [9] | High |
| Graphical | Network Topology Features | Captures relational information [11] | High |

Experimental Protocols for Cross-Session Consistency

Protocol 1: Domain Adaptation Framework Implementation

This protocol outlines the procedure for implementing a Siamese Deep Domain Adaptation (SDDA) framework to address cross-session variability [5].

Data Preparation:

- Segment data from source and target sessions using identical windowing parameters

- Apply domain-invariant spatial filtering using Common Spatial Patterns (CSP)

- Construct paired samples from both domains for Siamese network training

Model Architecture:

- Implement a twin-branch network with shared weights

- Incorporate Maximum Mean Discrepancy (MMD) loss between domains

- Add cosine-based center loss to suppress noise and outlier influence
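The MMD term in this architecture compares the feature distributions of two sessions in a kernel-induced space. Below is a minimal NumPy sketch of the biased squared-MMD estimate with a Gaussian kernel; the bandwidth heuristic (`gamma = 1 / n_features`), the function names, and the synthetic features are assumptions, and in the actual framework [5] the quantity would be computed on network activations and minimised as a loss.

```python
import numpy as np

def rbf_kernel(a, b, gamma):
    """Gaussian kernel matrix k(a_i, b_j) = exp(-gamma * ||a_i - b_j||^2)."""
    sq_dists = (np.sum(a ** 2, axis=1)[:, None]
                + np.sum(b ** 2, axis=1)[None, :]
                - 2 * a @ b.T)
    return np.exp(-gamma * np.maximum(sq_dists, 0.0))

def mmd2(source, target, gamma=None):
    """Biased estimate of the squared MMD between two feature batches."""
    if gamma is None:
        gamma = 1.0 / source.shape[1]              # assumed bandwidth heuristic
    k_ss = rbf_kernel(source, source, gamma).mean()
    k_tt = rbf_kernel(target, target, gamma).mean()
    k_st = rbf_kernel(source, target, gamma).mean()
    return k_ss + k_tt - 2 * k_st

rng = np.random.default_rng(0)
session_1 = rng.standard_normal((200, 16))
session_1_again = rng.standard_normal((200, 16))       # same distribution
session_2 = rng.standard_normal((200, 16)) + 0.8       # shifted distribution

mmd_same = mmd2(session_1, session_1_again)            # small
mmd_shifted = mmd2(session_1, session_2)               # clearly larger
```

Adding this value to the classification loss penalises feature extractors whose outputs differ between sessions, which is the mechanism by which the twin branches are pushed toward session-invariant representations.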

Training Procedure:

- Train for 100-200 epochs with early stopping

- Use Adam optimizer with learning rate 0.001

- Validate on small subset of target domain data

- Fine-tune final layers on target session data

Diagram: SDDA Framework Architecture

Protocol 2: Hybrid Feature Learning for Cross-Session Robustness

This protocol details a hybrid feature learning approach that integrates multiple feature types to enhance cross-session generalization [9].

Feature Extraction Pipeline:

- Spectral Features: Compute Short-Time Fourier Transform (STFT) with 2-second windows, 50% overlap

- Functional Connectivity: Calculate Phase Locking Value (PLV) between channel pairs

- Structural Connectivity: Extract graph-based measures from brain network topology

- Time-Domain Features: Compute Hjorth parameters and statistical moments
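Of the features listed above, the Hjorth parameters are the least commonly spelled out, so here is a short NumPy sketch using their standard definitions based on the variances of the signal and its first and second differences. The function name and test signals are illustrative, and mobility is expressed per sample rather than in Hz.

```python
import numpy as np

def hjorth_parameters(signal):
    """Activity, mobility and complexity along the last axis."""
    d1 = np.diff(signal, axis=-1)                  # first difference
    d2 = np.diff(d1, axis=-1)                      # second difference
    var0, var1, var2 = signal.var(axis=-1), d1.var(axis=-1), d2.var(axis=-1)
    activity = var0                                # signal power
    mobility = np.sqrt(var1 / var0)                # proxy for mean frequency
    complexity = np.sqrt(var2 / var1) / mobility   # deviation from a pure sine
    return activity, mobility, complexity

fs = 250
t = np.arange(4 * fs) / fs
rng = np.random.default_rng(0)
sine = np.sin(2 * np.pi * 10 * t)
noisy = sine + 0.5 * rng.standard_normal(t.size)

act_sine, mob_sine, comp_sine = hjorth_parameters(sine)
act_noisy, mob_noisy, comp_noisy = hjorth_parameters(noisy)
```

A pure sinusoid has a complexity near 1, and added broadband noise raises both mobility and complexity, so these three numbers give a compact time-domain summary that complements the spectral and connectivity features.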

Feature Selection:

- Apply correlation-based filtering to remove redundant features

- Implement Random Forest ranking to identify most discriminative features

- Retain top 20-30% of features based on importance scores

Model Training:

- Train SVM classifier with RBF kernel on selected feature set

- Optimize hyperparameters via cross-validation

- Validate on held-out sessions from different days

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Cross-Session BCI Research

| Resource Category | Specific Tool/Solution | Research Application | Key Benefits |
|---|---|---|---|
| Datasets | PhysioNet EEG Motor Movement/Imagery Dataset [4] | Algorithm benchmarking | Well-annotated, multi-session data |
| Software Libraries | EEGNet, ConvNet [5] | Deep learning implementation | Reproducible architectures |
| Signal Processing | Artifact Subspace Reconstruction (ASR) [11] | Real-time artifact removal | Preserves neural signals |
| Feature Extraction | Riemannian Geometry Pipeline [4] | Covariance matrix analysis | Session-invariant features |
| Domain Adaptation | MMD, CORAL algorithms [5] | Distribution alignment | Reduces session shift |
| Edge Deployment | NVIDIA Jetson TX2 [11] | Real-time inference | Low-latency processing |

Troubleshooting Guides

Cross-Session and Cross-Dataset Variability

Problem: BCI model performance decreases significantly when applied to EEG data from a different recording session or a different dataset.

Explanation: EEG signals are non-stationary and can change due to factors like user fatigue, changes in attention, or slight variations in experimental setup between sessions. This is a fundamental challenge for developing practical BCIs that do not require daily recalibration [13] [14].

Solutions:

- Implement Session Adaptation: Use cross-session adaptation (CSA) techniques. One study showed that while simple cross-session classification accuracy dropped to 53.7%, using adaptation improved accuracy to 78.9% [13].

- Apply Data Alignment: Use online pre-alignment strategies to align the EEG signal distributions of different subjects or sessions before model training and inference. This has been shown to improve the generalization ability of deep learning models across different datasets [14].

- Utilize Transfer Learning: Develop algorithms that can transfer knowledge from previous sessions or other subjects, using a small amount of new calibration data to update the model for the current session [13].

Signal Quality and Electrode Impedance

Problem: Noisy EEG signals with low signal-to-noise ratio (SNR), potentially caused by high electrode impedance.

Explanation: Electrode impedance is the opposition to alternating current flow between the electrode and the skin. High impedance can degrade common mode rejection, increasing susceptibility to environmental noise. However, the relationship is complex; for modern high-input-impedance amplifier systems, the link between low impedance and signal quality is not always straightforward [15] [16].

Solutions:

- Optimize Impedance for Your System: For traditional systems, maintain impedance below 5 kΩ to improve the signal-to-noise ratio [8]. For systems with active electrodes, follow manufacturer guidelines, as extremely low impedance may not always be advantageous [15].

- Control the Recording Environment: Record in a cool, dry environment. Warm, humid conditions can exacerbate the negative effects of high electrode impedance on low-frequency noise [16].

- Apply Signal Processing: Use spatial filtering (e.g., Common Spatial Patterns), frequency filtering (band-pass filters), and artifact rejection algorithms to clean the EEG data. Techniques like Independent Component Analysis (ICA) can help remove artifacts from blinks or muscle movement [4] [16].

Low Classification Accuracy for Complex Tasks

Problem: Difficulty in achieving high accuracy for classifying fine motor tasks, such as individual finger movements, or accounting for "no mental task" states.

Explanation: Fine motor movements like those of individual fingers generate very small amplitude signals in the EEG compared to limb movements. Furthermore, failing to include an "idle state" in the classification model can lead to a high number of false positives [17].

Solutions:

- Conduct Comprehensive Feature Selection: Do not rely on a single type of feature. Systematically extract and test features from multiple domains (time, frequency, time-frequency, and nonlinear) and use statistical feature selection methods (like mutual information or statistical significance tests) to identify the most discriminative features for your specific task [17].

- Optimize Channel Selection: Reduce the number of channels by identifying those most relevant to the task. This can decrease computational cost and improve model generalization. One study achieved notable classification of finger movements using a carefully selected subset of channels [17].

- Incorporate the Idle State: Always include a "no mental task" (NoMT) class in your training and testing data to allow the model to learn to distinguish between active mental commands and resting brain states, thereby reducing false activations [17].

Frequently Asked Questions (FAQs)

Q1: What is the single biggest source of performance drop in real-world BCI applications? The cross-session variability problem is one of the most significant challenges. EEG signals from the same user can vary substantially from day to day due to changes in cognitive state, electrode placement, and environmental factors, causing models trained on one day's data to perform poorly on another day's data [13] [14]. Robust BCI systems must be designed to adapt to this variability.

Q2: Is it always necessary to achieve very low electrode-skin impedance for high-quality EEG recordings? Not necessarily. While low impedance (e.g., below 5 kΩ) is recommended for traditional systems to maximize the signal-to-noise ratio [8], modern amplifier systems with high input impedance can tolerate higher electrode impedances. Some research using flexible neural probes even suggests that aggressively lowering impedance does not consistently improve signal quality for spike detection [15]. The key is to ensure a stable contact and follow the best practices for your specific recording equipment.

Q3: How can I improve my BCI model's performance without collecting more data from the user? Several advanced computational techniques can help:

- Data Augmentation: Use Generative Adversarial Networks (GANs) to create realistic synthetic EEG data to augment your training dataset, which can improve model robustness and generalization [4].

- Hybrid Models: Leverage hybrid deep learning architectures, such as a combination of Convolutional Neural Networks (CNN) and Long Short-Term Memory (LSTM) networks. These models can extract both spatial and temporal features from EEG data, and have been shown to achieve higher accuracy (e.g., 96.06%) than traditional machine learning or individual deep learning models [4].

- Leverage Synthetic Data: Pre-train your model on a large corpus of synthetic EEG data before fine-tuning it with a smaller set of real user data. This hybrid training approach has been shown to improve classification accuracy on real EEG data [18].

Q4: My model works well in a subject-specific setting but fails in a subject-independent setting. What can I do? This is a common problem known as cross-subject variability. To address it:

- Use Subject-Independent Features: Focus on feature domains and selection methods that are robust across individuals. Research on finger movement classification found that certain time-domain, frequency-domain, and nonlinear features, when selected via statistical significance, yielded better subject-independent results [17].

- Apply Domain Adaptation: Implement techniques like Riemannian alignment, which can help map data from different subjects into a more common feature space, making it easier to train a universal classifier [14].

Table 1: Performance Comparison of Different BCI Classification Approaches

| Classification Approach | Reported Accuracy | Key Context / Condition | Source |
|---|---|---|---|
| Hybrid CNN-LSTM Model | 96.06% | Within-session classification on PhysioNet dataset | [4] |
| Random Forest (Traditional ML) | 91.00% | Within-session classification on PhysioNet dataset | [4] |
| Cross-Session Adaptation (CSA) | 78.90% | Using adaptation techniques to improve cross-session performance | [13] |
| Within-Session (WS) Classification | 68.80% | Baseline performance on the same session | [13] |
| Signal Prediction with Reduced Channels | 78.16% | Using 8 channels to predict signals for 22 channels for MI classification | [8] |
| Cross-Session (CS) Classification | 53.70% | Performance drop when training and testing on different sessions | [13] |
| LSTM Alone | 16.13% | Demonstrating poor performance of a single, non-optimized deep learning model | [4] |

Table 2: Impact of Experimental Design on Finger Movement Classification [17]

| Analysis Type | Number of Classes | Best Accuracy | Key Condition |
|---|---|---|---|
| Subject-Dependent | 6 (5 fingers + NoMT) | 59.17% | Using mostly selected features & all channels with SVM |
| Subject-Independent | 6 (5 fingers + NoMT) | 39.30% | Using mostly selected features & channels with SVM |

Experimental Protocols

Protocol: Cross-Session Motor Imagery Experiment

This protocol outlines the methodology for collecting a dataset suitable for studying cross-session variability, as described in [13].

- Participants: Recruit 25 healthy subjects. Obtain ethical approval from an institutional review board and written informed consent from each participant.

- Session Schedule: Schedule 5 independent experimental sessions for each subject, with each session separated by 2-3 days.

- EEG Setup: Use a 32-channel EEG cap arranged according to the international 10-10 system. Ensure electrode impedance is kept below 20 kΩ. Set the sampling frequency to 250 Hz.

- Paradigm:

- Each subject sits in a chair one meter away from a monitor.

- Each trial begins with a fixation cross displayed on the screen.

- A visual cue (left or right arrow) instructs the subject to perform kinesthetic motor imagery of the corresponding hand grasping.

- The MI task duration is 4 seconds.

- Each session contains 100 trials (50 for left hand, 50 for right hand).

- Data Preprocessing:

- Remove bad EEG segments (e.g., amplitudes exceeding ±100 µV).

- Apply a 0.5–40 Hz band-pass finite impulse response (FIR) filter.

- Remove the baseline.

- Save the data in BIDS (Brain Imaging Data Structure) format for standardization.
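The preprocessing steps listed above can be sketched with NumPy and SciPy as below. Several details are assumptions made for the example and are not stated in [13]: the filter length (825 taps), the zero-phase application with `filtfilt`, the interpretation of baseline removal as subtracting the mean of a 0.5-second pre-cue interval, and the rule that any trial overlapping a flagged sample is dropped. The BIDS export step is not shown.

```python
import numpy as np
from scipy.signal import filtfilt, firwin

def preprocess(raw, onsets, fs, task_sec=4.0, base_sec=0.5, reject_uv=100.0):
    """Flag bad segments, band-pass filter, epoch, and baseline-correct.

    raw: (n_channels, n_samples) continuous EEG in microvolts.
    onsets: cue sample indices. Returns (trials, kept_mask).
    """
    bad = (np.abs(raw) > reject_uv).any(axis=0)        # samples beyond +/- threshold
    taps = firwin(825, [0.5, 40.0], pass_zero=False, fs=fs)   # assumed filter length
    filtered = filtfilt(taps, [1.0], raw, axis=-1)     # zero-phase FIR band-pass

    n_base, n_task = int(base_sec * fs), int(task_sec * fs)
    trials, kept = [], []
    for onset in onsets:
        window = slice(onset - n_base, onset + n_task)
        kept.append(not bad[window].any())
        if kept[-1]:
            trial = filtered[:, window]
            trial = trial - trial[:, :n_base].mean(axis=-1, keepdims=True)
            trials.append(trial[:, n_base:])           # keep only the task period
    return np.stack(trials), np.array(kept)

# Synthetic recording (hypothetical): 3 channels, 60 s at 250 Hz, five cues.
fs = 250
rng = np.random.default_rng(0)
t = np.arange(60 * fs) / fs
raw = 10 * np.sin(2 * np.pi * 10 * t) + 2 * rng.standard_normal((3, t.size))
onsets = np.array([5, 15, 25, 35, 45]) * fs
raw[1, onsets[2] + 100] = 300.0                        # artifact inside the third trial

trials, kept = preprocess(raw, onsets, fs)
```

Filtering the continuous recording before epoching avoids edge effects that a long FIR filter would otherwise introduce on 4-second trials; with these settings each retained trial has 1000 samples per channel.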

Protocol: Evaluating Electrode Impedance on Recording Quality

This protocol is based on an in-vivo study investigating the relationship between impedance and signal quality in flexible neural probes [15].

- Probe Preparation: Use a commercial flexible polyimide neural probe.

- Impedance Control: Deposit Pt nanoparticles via electrodeposition to fabricate probes with specific target impedance levels (e.g., 50 kΩ, 250 kΩ, 500 kΩ, and 1000 kΩ at 1 kHz). Measure impedance using Electrochemical Impedance Spectroscopy (EIS).

- In-Vivo Recording:

- Anesthetize the subject (e.g., mouse) with an intraperitoneal injection of urethane.

- Secure the subject's head in a stereotaxic device and perform a craniotomy above the target brain region (e.g., the ventral posteromedial nucleus (VPM) of the thalamus).

- Insert the probe into the target region using stereotaxic coordinates.

- Connect the probe to a recording system and record neuronal signals at a high sampling rate (e.g., 30 kHz).

- Signal Analysis:

- Filter the recorded signals with a bandpass filter (e.g., 0.6–6 kHz) to isolate action potentials.

- Perform spike sorting using specialized software (e.g., MClust) to classify the recorded signals into individual neuronal units (clusters).

- Primary Metric: Use the number of well-defined, separable neural clusters as an indicator of recording quality for each impedance level.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Computational Tools for BCI Variability Research

| Tool / Material | Function / Explanation | Example Use Case |

|---|---|---|

| 32+ Channel EEG Cap (10-10 System) | High-density spatial sampling of brain activity. | Essential for capturing detailed spatial patterns in motor imagery and for effective spatial filtering [13]. |

| High-Input Impedance Amplifiers | Allows for accurate recording even with higher electrode-skin impedance. | Reduces preparation time and minimizes skin abrasion while maintaining signal quality [16]. |

| Common Spatial Patterns (CSP) | A spatial filtering algorithm that maximizes variance between two classes of EEG signals. | Standard technique for feature extraction in motor imagery BCIs, particularly for limb movement classification [14] [17]. |

| Riemannian Geometry Framework | Treats covariance matrices of EEG signals as points on a Riemannian manifold, providing a robust classification framework. | Used for creating more stable and transferable models that are less sensitive to session-to-session variations [4] [14]. |

| Wavelet Transform | A time-frequency analysis method that provides good resolution in both time and frequency domains. | Used for extracting discriminative features from non-stationary EEG signals during motor imagery tasks [4] [17]. |

| Hybrid CNN-LSTM Models | Deep learning architecture; CNNs extract spatial features, LSTMs capture temporal dependencies. | Achieving state-of-the-art classification accuracy (e.g., 96.06%) on motor imagery tasks by leveraging both spatial and temporal information [4]. |

| Generative Adversarial Networks (GANs) | A deep learning model that can generate synthetic data that mimics real EEG data. | Used for data augmentation to balance datasets and improve the generalization ability of classifiers, combating overfitting [4]. |

| Elastic Net Regression | A regularized linear regression technique that combines L1 and L2 penalties. | Used for feature selection and for predicting signals from a reduced set of electrodes, mitigating the need for high-density setups [8]. |
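
Of the tools in Table 3, Common Spatial Patterns is compact enough to sketch directly. The version below is a minimal two-class illustration using NumPy only; the function names `fit_csp` and `csp_log_variance`, the trace normalization, and the `n_pairs` parameter are assumptions made for this example and do not come from the cited studies.

```python
import numpy as np


def fit_csp(trials_a, trials_b, n_pairs=1):
    """Fit two-class CSP filters.

    trials_a, trials_b: arrays of shape (n_trials, n_channels, n_samples).
    Returns an array of shape (2 * n_pairs, n_channels).
    """
    def mean_cov(trials):
        # Trace-normalized spatial covariance, averaged over trials.
        covs = [x @ x.T / np.trace(x @ x.T) for x in trials]
        return np.mean(covs, axis=0)

    cov_a, cov_b = mean_cov(trials_a), mean_cov(trials_b)
    # Whiten the composite covariance so that cov_a + cov_b becomes identity.
    evals, evecs = np.linalg.eigh(cov_a + cov_b)
    whitener = np.diag(evals ** -0.5) @ evecs.T
    # In whitened space, directions of high class-A variance have low class-B variance.
    evals_a, rotation = np.linalg.eigh(whitener @ cov_a @ whitener.T)
    order = np.argsort(evals_a)[::-1]
    filters = rotation[:, order].T @ whitener
    # Keep the filters from both ends of the eigenvalue spectrum.
    keep = np.r_[np.arange(n_pairs), np.arange(-n_pairs, 0)]
    return filters[keep]


def csp_log_variance(filters, trials):
    """Project trials through the filters and return normalized log-variance features."""
    projected = np.einsum("fc,ncs->nfs", filters, trials)
    var = projected.var(axis=2)
    return np.log(var / var.sum(axis=1, keepdims=True))
```

The resulting features are typically fed to a linear classifier; the filters are session-specific, which is one reason plain CSP pipelines are sensitive to cross-session shifts.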

This technical support center provides troubleshooting guides and FAQs for researchers addressing the challenge of cross-session consistency in motor imagery-based brain-computer interface (MI-BCI) classification.

FAQs & Troubleshooting Guides

1. Why does my model's performance degrade significantly when tested on data from a different session?

This is a classic symptom of cross-session variability. EEG signals are non-stationary and can change due to factors like slight variations in electrode placement, user fatigue, or changes in brain state across days [13]. One study quantified this, showing that while within-session (WS) classification achieved up to 68.8% accuracy, standard cross-session (CS) classification degraded the accuracy to 53.7%, which was not significantly different from chance level [13]. This performance gap is the primary challenge in building robust, practical BCI systems.

2. What is the most effective strategy to recover performance in cross-session scenarios?

The most validated strategy is Cross-Session Adaptation (CSA). This involves using a small amount of data from the new session to adapt a model trained on previous sessions. Research has demonstrated that this approach can not only recover the performance loss but significantly exceed within-session accuracy, with one benchmark achieving 78.9% accuracy after adaptation [13]. Another effective method is to use a hybrid feature learning framework that integrates spectral features with functional and structural brain connectivity metrics, which has shown high robustness in cross-session classification [9].

3. How do different data processing techniques impact cross-session performance?

The interaction between processing techniques and performance is complex. For instance, applying an artifact rejection (AR) algorithm like FASTER can either enhance or degrade performance depending on the subject and the neural network architecture used [19]. Furthermore, while transfer learning generally improves performance, its benefit is more pronounced on raw data (e.g., boosting accuracy from 46.1% to 63.5%) compared to artifact-rejected data [19]. This indicates that the optimal processing pipeline is not universal and must be tailored to the specific experimental setup.

4. Beyond overall accuracy, what other metrics should I monitor for a realistic assessment?

While accuracy is crucial, a comprehensive assessment should also track consistency and generalizability. A model that performs well on one subject or session but fails on others is not practically useful. It is essential to report performance across multiple sessions and subjects. Monitoring the stability of learned features (e.g., through brain connectivity analysis [20]) can provide deeper insights into why a model generalizes well or poorly.

Quantitative Data on Performance Gaps

Table 1: Benchmarking Classification Accuracy Across Session Conditions on a Motor Imagery Dataset [13]

| Condition | Description | Average Classification Accuracy |

|---|---|---|

| Within-Session (WS) | Training and testing on data from the same session. | 68.8% |

| Cross-Session (CS) | Training on sessions from previous days and testing on a new session without adaptation. | 53.7% |

| Cross-Session Adaptation (CSA) | Using a small amount of new session data to adapt a pre-trained model. | 78.9% |

Table 2: Impact of Processing Techniques on Classification Performance [19]

| Processing Technique | Scenario | Impact on Classification Accuracy |

|---|---|---|

| Transfer Learning | Applied to unfiltered/raw EEG data. | Improved accuracy from 46.1% to 63.5%. |

| Transfer Learning | Applied after Artifact Rejection (AR). | Improved accuracy from 45.5% to 55.9%. |

| Artifact Rejection (FASTER) | Effect is highly dependent on the subject and classifier architecture. | Can either enhance or degrade performance. |

Detailed Experimental Protocols

Protocol 1: Benchmarking Cross-Session Performance

This protocol is based on the methodology used to create the public dataset and benchmarks in [13].

- Objective: To quantify the performance gap between within-session and cross-session MI classification and evaluate adaptation strategies.

- Dataset: 25 subjects, with EEG data collected over 5 independent sessions on different days. Each session includes 100 trials of left-hand and right-hand motor imagery [13].

- Preprocessing: Data were band-pass filtered (0.5–40 Hz) with a finite impulse response (FIR) filter. Bad segments were manually identified and removed [13].

- Classification Conditions:

- Within-Session (WS): Data from a single session is split into training and test sets.

- Cross-Session (CS): A model is trained on data from four sessions and tested on the held-out fifth session without any updates.

- Cross-Session Adaptation (CSA): A model pre-trained on earlier sessions is fine-tuned with a small subset of data from the test session before final evaluation [13].

- Algorithms for Benchmarking: Common Spatial Patterns (CSP), Filter Bank Common Spatial Pattern (FBCSP), EEGNet, and Shallow ConvNet were used to establish performance baselines [13].
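
The three classification conditions differ only in which trials are used for training, adaptation, and testing. The sketch below shows one way to build those index sets from a per-trial session label. The function name `split_indices` and the parameters `n_adapt` and `ws_train_frac` are hypothetical, and taking the first trials of the test session for adaptation is an assumption; [13] only specifies "a small subset".

```python
import numpy as np


def split_indices(session_ids, test_session, condition,
                  n_adapt=20, ws_train_frac=0.8, seed=0):
    """Return (train_idx, adapt_idx, test_idx) for the WS, CS, or CSA condition."""
    session_ids = np.asarray(session_ids)
    target = np.flatnonzero(session_ids == test_session)   # kept in recording order
    others = np.flatnonzero(session_ids != test_session)
    none = np.array([], dtype=int)

    if condition == "WS":
        # Train and test inside the same session (random split).
        shuffled = np.random.default_rng(seed).permutation(target)
        cut = int(ws_train_frac * len(shuffled))
        return shuffled[:cut], none, shuffled[cut:]
    if condition == "CS":
        # Train on all other sessions, test on the held-out one, no updates.
        return others, none, target
    if condition == "CSA":
        # Same as CS, but the first n_adapt target trials are used for fine-tuning.
        return others, target[:n_adapt], target[n_adapt:]
    raise ValueError(f"unknown condition: {condition}")
```

Keeping the adaptation and test trials disjoint matters: reusing adaptation trials in the final evaluation would inflate the CSA accuracy.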

Protocol 2: Hybrid Feature Learning for Cross-Session Robustness

This protocol is derived from the hybrid framework that achieved high cross-session accuracy [9].

- Objective: To develop a robust classification model for mental attention states that generalizes across sessions and subjects.

- Feature Extraction: A hybrid approach is employed:

- Spectral Features: Short-Time Fourier Transform (STFT) is used to extract channel-wise spectral power.

- Connectivity Features: Both functional and effective connectivity features (e.g., Phase Locking Value) are calculated to capture interactions between brain regions [9].

- Feature Selection: A two-stage strategy is applied:

- Correlation-based filtering to remove redundant features.

- Random Forest ranking to select the most discriminative features [9].

- Classification: A Support Vector Machine (SVM) is used for the final classification due to its efficiency and strong generalization performance with selected features [9].
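
As an illustration of the connectivity features named in this protocol, the sketch below computes a Phase Locking Value matrix for one trial with NumPy only. It assumes the trial has already been band-pass filtered to the band of interest and measures phase locking over time within the trial; the function names are illustrative, and [9] may compute PLV differently (for example, across trials).

```python
import numpy as np


def analytic_signal(x):
    """FFT-based analytic signal along the last axis (discrete Hilbert transform)."""
    n = x.shape[-1]
    spectrum = np.fft.fft(x, axis=-1)
    gain = np.zeros(n)
    gain[0] = 1.0                       # keep the DC component once
    if n % 2 == 0:
        gain[n // 2] = 1.0              # keep the Nyquist component once
        gain[1:n // 2] = 2.0            # double the positive frequencies
    else:
        gain[1:(n + 1) // 2] = 2.0
    return np.fft.ifft(spectrum * gain, axis=-1)


def plv_matrix(trial):
    """trial: (n_channels, n_samples). Returns an (n_channels, n_channels) PLV matrix."""
    phase = np.angle(analytic_signal(trial))
    unit = np.exp(1j * phase)
    # Entry (i, j) is |mean over time of exp(i * (phase_i - phase_j))|.
    return np.abs(unit @ unit.conj().T) / trial.shape[-1]
```

The upper triangle of this matrix can be flattened and concatenated with the spectral features before the two-stage feature selection.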

Workflow Diagrams

[Figure: Experimental workflow for quantifying the performance gap]

The Scientist's Toolkit

Table 3: Essential Research Reagents & Computational Tools

| Item / Solution | Function / Purpose | Example / Note |

|---|---|---|

| EEG Cap & Amplifier | Acquires raw brain electrical signals. | 32-channel Ag/AgCl electrode cap following the 10-10 system; impedance kept below 20 kΩ [13]. |

| Artifact Rejection Algorithm | Removes non-neural noise (e.g., from eye blinks, muscle movement). | FASTER algorithm or Independent Component Analysis (ICA) [19]. |

| Spatial Filtering Algorithm | Enhances signal-to-noise ratio by optimizing spatial discrimination. | Common Spatial Patterns (CSP) or Filter Bank CSP (FBCSP) [13]. |

| Connectivity Metrics | Quantifies functional interactions between different brain regions. | Weighted Phase Lag Index (WPLI) or Phase Locking Value (PLV) for building functional networks [20] [9]. |

| Feature Selection Framework | Reduces data dimensionality and selects the most discriminative features for modeling. | Two-stage strategy: correlation-based filtering followed by Random Forest ranking [9]. |

| Adaptive Learning Library | Implements algorithms that update models with new session data. | Used for Cross-Session Adaptation (CSA) to bridge the performance gap [13]. |

The Critical Need for Cross-Session Robustness in Clinical and Long-Term Studies

Technical Support Center: Troubleshooting Cross-Session BCI Classification

Frequently Asked Questions & Troubleshooting Guides

Q1: Our motor imagery classification accuracy drops significantly when applying a model trained on Day 1 to data collected from the same participant on Day 2. What is the primary cause and how can we mitigate it?

A: This is a classic cross-session non-stationarity problem. Electroencephalogram (EEG) signals are characterized by their non-stationary nature and low signal-to-noise ratio. Even for the same participant, the distribution of EEG features can exhibit significant discrepancies across different recording sessions due to factors like changes in electrode impedance, skin conductance, and the user's mental state [5].

- Recommended Solution: Implement a domain adaptation framework. Specifically, the Siamese Deep Domain Adaptation (SDDA) framework has been validated to address this. It uses a preprocessing method to create domain-invariant features and employs a Maximum Mean Discrepancy (MMD) loss to align feature distributions from different sessions in a high-dimensional space [5].

- Actionable Protocol:

- Preprocessing: Construct domain-invariant features from your source (Day 1) and target (Day 2) session data.

- Model Setup: Integrate an MMD loss function into your convolutional neural network (e.g., EEGNet or ConvNet) to reduce the distribution difference between the source and target session data in the Reproducing Kernel Hilbert Space (RKHS).

- Training: Include a cosine-based center loss during training to suppress the influence of noise and outliers on the network [5].
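
To make the MMD term in the protocol above concrete, the following sketch estimates the squared MMD between two sets of feature vectors with an RBF kernel. It is a NumPy illustration of the distance itself, not the SDDA implementation: in practice the term would be computed on network activations inside an automatic-differentiation framework. The function names and the median-based default for `gamma` are assumptions.

```python
import numpy as np


def rbf_kernel(a, b, gamma):
    """Pairwise RBF kernel exp(-gamma * ||a_i - b_j||^2)."""
    sq = (a ** 2).sum(1)[:, None] + (b ** 2).sum(1)[None, :] - 2.0 * a @ b.T
    return np.exp(-gamma * np.maximum(sq, 0.0))   # clip tiny negative round-off


def mmd2_rbf(source, target, gamma=None):
    """Biased estimate of squared MMD between two samples of shape (n, d)."""
    if gamma is None:
        # One common bandwidth heuristic: inverse median squared pairwise distance.
        pooled = np.vstack([source, target])
        sq = ((pooled[:, None, :] - pooled[None, :, :]) ** 2).sum(-1)
        gamma = 1.0 / np.median(sq[sq > 0])
    return (rbf_kernel(source, source, gamma).mean()
            + rbf_kernel(target, target, gamma).mean()
            - 2.0 * rbf_kernel(source, target, gamma).mean())
```

A value near zero indicates that Day 1 and Day 2 features are distributed similarly under the chosen kernel; larger values indicate a stronger session shift.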

Q2: We are collecting a lower-limb motor imagery dataset from patients with chronic knee pain. What are the key methodological details we must document to ensure our dataset is useful for cross-session analysis?

A: Comprehensive documentation is critical for reproducible cross-session studies. The following checklist outlines the essential items to report, based on established guidelines and recent literature [21] [22]:

- Participant Demographics & Clinical Scores: Report the number of participants, age, sex, pain duration, pain intensity (e.g., Visual Analog Scale), and relevant clinical scores like the Knee injury and Osteoarthritis Outcome Score (KOOS) [22].

- Experimental Protocol: Detail the number of sessions, trials per session, time between sessions, and the precise task timing (e.g., 2s preparation, 4s imagery, 4s rest) [22] [21].

- Data Acquisition: Specify the EEG equipment, electrode model, number and locations according to the international 10-5 system, sampling frequency, and downstream processing steps [22] [21].

- Performance Metrics: Always report accuracy with confidence intervals, theoretical and empirical chance performance, and the number of trials used for training and testing [21].
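
For the performance-metrics item, a short sketch of two quantities that are easy to report: a Wilson confidence interval for accuracy, and the number of correct trials needed to exceed chance under a binomial guessing model. The function names are illustrative, and the choice of the Wilson interval and a one-sided binomial test is an assumption; the guidelines in [21] may recommend other estimators.

```python
import math


def wilson_interval(n_correct, n_trials, z=1.96):
    """Wilson score interval for a proportion (z = 1.96 gives roughly 95%)."""
    p = n_correct / n_trials
    denom = 1.0 + z ** 2 / n_trials
    centre = (p + z ** 2 / (2 * n_trials)) / denom
    half = z * math.sqrt(p * (1 - p) / n_trials + z ** 2 / (4 * n_trials ** 2)) / denom
    return centre - half, centre + half


def chance_threshold(n_trials, n_classes, alpha=0.05):
    """Smallest number of correct trials k with P(X >= k) <= alpha under pure guessing."""
    p0 = 1.0 / n_classes
    tail = 0.0
    for k in range(n_trials, -1, -1):
        tail += math.comb(n_trials, k) * p0 ** k * (1 - p0) ** (n_trials - k)
        if tail > alpha:
            return k + 1
    return 0
```

With few test trials the threshold sits well above the theoretical chance level (50% for two classes), which is why an accuracy only slightly above 50% may not be distinguishable from guessing.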

Q3: How can we improve the real-time performance of a BCI system when a user's initial performance is poor?

A: Implement a mutual learning system that enables co-adaptation between the human user and the machine learning classifier.

- Solution Principle: This system embeds both human learning and machine learning. The classifier provides real-time feedback, allowing users to practice and stabilize their EEG signals for mental tasks. Concurrently, the system adapts to the user's current mental state by adjusting its parameters in real time [23].

- Implementation Steps:

- Collect initial offline EEG data to create a pre-trained classifier.

- In the real-time phase, allow users to complete multiple trials. If performance on a trial is low, the system uses the latest EEG data to update the classifier parameters.

- The system should use a validation set from recent trials to determine the learning rate for each update, automatically reducing it if the update increases validation loss [23].

- This protocol gives users time to stabilize their EEG signals and allows the classifier to personalize, significantly improving task accuracy over time [23].
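
The validation-guarded update described in the steps above can be sketched with a simple stand-in model. The example uses logistic regression on feature vectors purely for illustration; the system in [23] uses its own classifier, and the names `guarded_update`, `shrink`, and `max_tries` are hypothetical.

```python
import numpy as np


def log_loss(w, X, y):
    """Mean binary cross-entropy of a logistic model with weights w."""
    z = X @ w
    return float(np.mean(np.logaddexp(0.0, z) - y * z))


def guarded_update(w, X_new, y_new, X_val, y_val, lr=0.5, shrink=0.5, max_tries=8):
    """Take one gradient step on the newest trials.

    The step size is reduced whenever the step would increase the loss on a
    validation set made of recent trials; if no step size helps, the update
    is rejected and the old weights are kept.
    """
    p = 1.0 / (1.0 + np.exp(-(X_new @ w)))
    grad = X_new.T @ (p - y_new) / len(y_new)
    baseline = log_loss(w, X_val, y_val)
    for _ in range(max_tries):
        candidate = w - lr * grad
        if log_loss(candidate, X_val, y_val) <= baseline:
            return candidate, lr
        lr *= shrink
    return w, 0.0
```

By construction the validation loss never gets worse after an update, which is the stability property the protocol relies on while the user is still learning the task.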

Q4: What are the proven algorithmic approaches for enhancing cross-session classification accuracy?

A: Research has demonstrated success with several advanced algorithms. The table below summarizes key methods and their reported performance gains.

Table 1: Algorithmic Performance in Cross-Session and Clinical BCI Studies

| Algorithm / Framework | Reported Performance | Application Context | Key Advantage |

|---|---|---|---|

| Siamese Deep Domain Adaptation (SDDA) [5] | Improved accuracy by 10.49% (EEGNet) and 7.60% (ConvNet) on 4-class MI data (BCI Competition IV IIA) | Cross-session Motor Imagery (MI) classification | Reduces distribution discrepancy between sessions without needing data from other participants. |

| Mutual Learning System [23] | Increased user accuracy from 56.0% to 81.5% on MI tasks; from 55.0% to 82.5% on attention tasks. | Real-time BCI adaptation for MI and attention tasks | Enables co-adaptation, improving both user skill and classifier personalization. |

| OTFWRGD (Novel Deep Learning Algorithm) [22] | Achieved an average accuracy of 86.41% in classifying lower-limb MI in knee pain patients. | Lower-limb MI in a clinical pain population | Specifically validated on a challenging clinical dataset, showing high decoding performance. |

| k-Means Clustering Centers Difference (KMCCD) Weighting [24] | Achieved accuracy rates of 99.7% (Motor Imagery) and 99.9% (Mental Activity) on a hybrid EEG+NIRS dataset. | Hybrid BCI systems using EEG and near-infrared spectroscopy (NIRS) | A feature weighting method that significantly increases traditional classifiers' performance. |

Detailed Experimental Protocols for Key Studies

Protocol 1: Validating a Domain Adaptation Framework for Cross-Session MI

This protocol is based on the SDDA framework study [5].

- Datasets: Utilize public MI datasets from BCI Competition IV (IIA and IIB). Dataset IIA is a 4-class MI task, while IIB is a binary-class MI task with sparse channels.

- Network Architecture: Use established vanilla networks like EEGNet or ConvNet as the base classifiers.

- Framework Integration:

- Preprocessing: Apply the described method to construct domain-invariant features from the source (initial session) and target (later session) data.

- Loss Function: Modify the training loss to incorporate MMD loss for domain alignment and cosine-based center loss for noise suppression.

- Training: Train the model on the source session data while applying the SDDA framework to align it with the target session data. The framework does not require data from other participants.

- Evaluation: Compare the classification accuracy of the SDDA-enhanced network against the vanilla network on the target session data.

Protocol 2: Implementing a Mutual Learning System for Real-Time BCI

This protocol is derived from the work of Lin et al. (2023) [23].

- Participants: Recruit healthy participants, excluding those with neurological diseases.

- Session Structure: The experiment is conducted over two days.

- Day 1: Collect offline EEG data from participants performing the mental task (e.g., motor imagery or attention). Use this data to create a pre-trained classifier and familiarize the participant with the system.

- Day 2: Conduct the real-time mutual learning training.

- Mutual Learning Loop:

- The user performs a mental task trial.

- The system provides real-time feedback on performance.

- If the user's performance is low on a trial, the system acquires more EEG data to update the classifier parameters.

- The system uses the latest eight completed EEG trials as a validation set to determine the learning rate for each model update, ensuring stability.

- Outcome Measurement: The primary metric is the improvement in the user's task accuracy from the beginning to the end of the mutual learning session.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Resources for BCI Cross-Session Robustness Research

| Item / Resource | Function / Application | Specification Examples |

|---|---|---|

| Public BCI Datasets | Serves as a standard benchmark for validating new algorithms and enables direct comparison with state-of-the-art methods. | BCI Competition IV datasets IIA (4-class) & IIB (2-class) [5]. |

| Deep Learning Frameworks | Provides the foundation for building and training complex neural network models for EEG decoding and domain adaptation. | Frameworks supporting CNN architectures like EEGNet and ConvNet [5] [23]. |

| Domain Adaptation Theory | Provides the mathematical foundation for techniques that mitigate the data distribution shift between training and testing sessions. | Maximum Mean Discrepancy (MMD) for measuring distribution differences in RKHS [5]. |

| Hybrid BCI Signals | Combining multiple signal modalities can provide complementary information and improve classification robustness. | Simultaneous recording of EEG and functional Near-Infrared Spectroscopy (fNIRS) signals [24]. |

Workflow Diagrams for Core Methodologies

The following diagrams illustrate the core workflows for the two primary solutions discussed in this support center.

[Figure: Domain adaptation framework workflow]

[Figure: Mutual learning system workflow]

Building Robust Systems: Techniques for Cross-Session Generalization

Technical Support Center: Troubleshooting Guides and FAQs

This technical support center provides practical solutions for researchers working with hybrid spectral and brain connectivity features in brain-computer interface (BCI) systems, specifically within the context of cross-session classification consistency.

Frequently Asked Questions (FAQs)

Q1: Why does my hybrid BCI model performance degrade significantly across recording sessions?

Performance degradation in cross-session scenarios stems primarily from neural signal variability and the non-stationarity of EEG/fNIRS data. The neural patterns that your model learns in one session may not align with those in subsequent sessions because of changing electrode impedance, varying user mental states, and physiological changes [25] [6]. Implement transfer learning and domain adaptation methods to maintain model consistency. The cross-session RSVP dataset published in Frontiers in Neuroscience shows that appropriate signal processing can greatly enhance cross-session BCI performance [25].

Q2: What are the most effective feature combinations for hybrid EEG-fNIRS systems targeting cross-session consistency?

Research indicates that combining non-linear features from both modalities yields robust performance. Effective features include:

- Fractal Dimension (FD) for complexity analysis

- Higher Order Spectra (HOS) for phase coupling information

- Recurrence Quantification Analysis (RQA) for dynamical system properties

- Entropy features for irregularity measurement [26]

These features, when selected using Genetic Algorithms and classified with ensemble methods, have achieved cross-session accuracy up to 95.48% in multi-subject experiments [26].

Q3: How can I synchronize data acquisition between EEG and fNIRS systems to minimize temporal artifacts?

Implement a hardware-triggered synchronization protocol with a common time-stamping mechanism. Use a master clock to generate simultaneous trigger pulses for both systems, ensuring sample-level accuracy. For post-processing synchronization, employ cross-correlation algorithms on simultaneously recorded physiological signals (e.g., cardiac rhythms) detectable by both modalities [26]. Maintain sampling rates at integer multiples to simplify resampling procedures.
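
The post-processing option can be illustrated with a small lag estimator. The sketch assumes both systems have recorded the same physiological signal, that both traces have already been resampled to a common rate `fs`, and that they have equal length; the function name `estimate_lag` is illustrative.

```python
import numpy as np


def estimate_lag(reference, delayed, fs):
    """Delay of `delayed` relative to `reference`, in seconds (positive = later)."""
    a = reference - reference.mean()
    b = delayed - delayed.mean()
    # Full cross-correlation; zero lag sits at index len(a) - 1.
    xcorr = np.correlate(b, a, mode="full")
    lag_samples = int(np.argmax(xcorr)) - (len(a) - 1)
    return lag_samples / fs
```

The estimated lag can then be applied as a shift to one modality's timestamps. Its resolution is limited to one sample at the common rate, so hardware triggering remains preferable when sample-level accuracy is needed.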

Q4: What strategies reduce calibration time while maintaining cross-session classification accuracy?

Adopt collaborative BCI approaches that leverage data from multiple subjects to create more generalized models [25]. Additionally, implement feature alignment techniques such as Riemannian geometry-based approaches to map features from different sessions to a common domain. The cross-session dataset research shows that information fusion from multiple subjects significantly improves BCI performance compared to individual models [25].
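
One simple form of feature alignment is to whiten each session by its own mean covariance so that all sessions share the same reference point. The sketch below uses the arithmetic (Euclidean) mean as the reference, which is a simplification of the Riemannian approaches mentioned above, where the reference would be a Riemannian mean. The function name `align_session` is illustrative.

```python
import numpy as np


def align_session(trials):
    """Re-center one session's trials so their mean covariance is the identity.

    trials: array of shape (n_trials, n_channels, n_samples).
    """
    # Per-trial spatial covariance matrices.
    covs = np.einsum("ncs,nds->ncd", trials, trials) / trials.shape[-1]
    reference = covs.mean(axis=0)
    # Inverse matrix square root of the reference covariance.
    evals, evecs = np.linalg.eigh(reference)
    ref_inv_sqrt = evecs @ np.diag(evals ** -0.5) @ evecs.T
    return np.einsum("cd,nds->ncs", ref_inv_sqrt, trials)
```

Applying this separately to each session requires no labels, so it can run on the first few unlabeled trials of a new session before any classifier update.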

Troubleshooting Guides

Problem: Declining Classification Accuracy Across Sessions

Symptoms: Model performance decreases when applied to data collected in different sessions, despite high initial accuracy.

Solution:

- Preprocessing: Apply robust re-referencing and artifact removal techniques specific to each modality

- Feature Adaptation: Implement sliding window normalization to adjust for session-specific baseline shifts

- Model Retraining: Use transfer learning with progressive fine-tuning on limited new-session data

- Ensemble Methods: Combine session-specific classifiers using stacking or voting mechanisms [26] [6]
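
The sliding window normalization step can be sketched as a running z-score over the most recent trials. This is a minimal illustration; the class name `SlidingNormalizer`, the window length, and the decision to include the current trial in the statistics are assumptions.

```python
from collections import deque

import numpy as np


class SlidingNormalizer:
    """Z-score each feature vector against the most recent `window` trials."""

    def __init__(self, window=30, eps=1e-8):
        self.buffer = deque(maxlen=window)   # oldest trials drop out automatically
        self.eps = eps

    def transform(self, features):
        features = np.asarray(features, dtype=float)
        self.buffer.append(features)
        recent = np.stack(self.buffer)
        # Subtracting the running mean removes session-specific baseline offsets.
        return (features - recent.mean(axis=0)) / (recent.std(axis=0) + self.eps)
```

Because the statistics come only from recent trials, a constant baseline offset in a new session is removed once the window has filled with new-session data. The first few outputs of a session are less reliable while the buffer is short.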

Table: Performance Comparison of Cross-Session Adaptation Methods

| Method | Required New Data | Expected Accuracy Maintenance | Implementation Complexity |

|---|---|---|---|

| Feature Alignment | Minimal (≤5 trials) | 85-92% | Moderate |

| Transfer Learning | Moderate (10-20 trials) | 88-95% | High |

| Collaborative BCI | None (uses multi-user data) | 82-90% | Low-Moderate |

| Ensemble Classifiers | Moderate (15-25 trials) | 90-96% | High |

Problem: Inconsistent Signal Quality Between EEG and fNIRS Modalities

Symptoms: Discrepancies in signal-to-noise ratio, temporal alignment issues, or conflicting classification results between modalities.

Solution:

- Quality Assessment Protocol:

- Calculate per-modality quality indices (QIs) before fusion

- Establish minimum acceptable thresholds for each modality

- Implement quality-weighted decision fusion

- Temporal Realignment:

- Identify common physiological markers (e.g., heartbeat peaks)

- Apply dynamic time warping for precise alignment

- Validate with known stimulus-locked responses [26]
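
The quality assessment steps above can be combined into a small decision-fusion routine. The sketch assumes each modality supplies a class-probability vector and a scalar quality index on a comparable scale; the function name `fuse_decisions` and the linear weighting are illustrative choices, not a method from [26].

```python
import numpy as np


def fuse_decisions(probs, quality, min_quality):
    """Quality-weighted average of per-modality class probabilities.

    probs:       {modality: class-probability vector}
    quality:     {modality: quality index for the current trial}
    min_quality: {modality: minimum acceptable quality index}
    Returns the fused probability vector, or None if no modality is usable.
    """
    usable = [m for m in probs if quality[m] >= min_quality[m]]
    if not usable:
        return None
    weights = np.array([quality[m] for m in usable], dtype=float)
    if weights.sum() == 0:
        return None
    weights /= weights.sum()
    stacked = np.stack([np.asarray(probs[m], dtype=float) for m in usable])
    return weights @ stacked
```

For example, with EEG probabilities `[0.8, 0.2]` at quality 0.9 and fNIRS probabilities `[0.4, 0.6]` at quality 0.3, the weights are 0.75 and 0.25 and the fused output is `[0.7, 0.3]`.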

Problem: High Computational Load During Real-Time Hybrid Feature Extraction

Symptoms: System latency exceeding 200 ms, dropped data packets, or inability to maintain real-time processing rates.

Solution:

- Feature Selection Optimization:

- Implement Genetic Algorithms for optimal feature subset selection

- Use incremental feature calculation to distribute processing load

- Employ sliding window with overlap to maintain temporal resolution

- Computational Efficiency:

- Utilize GPU acceleration for non-linear feature computation

- Implement feature extraction pipeline parallelization

- Apply just-in-time compilation for mathematical operations [26]

Table: Computational Requirements for Hybrid Feature Extraction

| Feature Type | Approximate Processing Time (per trial) | Recommended Hardware | Parallelization Potential |

|---|---|---|---|

| Spectral Features | 5–15 ms | Multi-core CPU | High |

| Connectivity Features | 20–50 ms | GPU acceleration | Moderate |

| Non-linear Features (FD, HOS, RQA) | 30–60 ms | GPU acceleration | Low-Moderate |

| Feature Selection (GA) | 50–100 ms (offline) | High-frequency CPU | Low |

Experimental Protocols for Cross-Session Validation

Protocol 1: Cross-Session Hybrid BCI Validation

Purpose: To evaluate the consistency of hybrid spectral and connectivity features across multiple recording sessions.

Methodology:

- Participant Recruitment: 14+ subjects with normal or corrected-to-normal vision [25]

- Session Schedule: Two sessions with average interval of ~23 days [25]

- Data Acquisition:

- EEG: 62-channel whole-head recording

- fNIRS: Prefrontal and motor cortex coverage

- Synchronized trigger markers for both modalities

- Experimental Paradigm:

- Rapid Serial Visual Presentation (RSVP) at 10 Hz

- Target detection tasks with 4 targets per 100-image trial

- Three blocks with 14 trials each per session [25]

Protocol 2: Collaborative BCI for Enhanced Cross-Session Consistency

Purpose: To leverage multi-user information for improving cross-session classification performance.

Methodology:

- Group Configuration: Pair subjects into collaborative groups (2 subjects per group) [25]

- Synchronous Experiment: Both subjects perform identical target detection tasks simultaneously

- Data Fusion:

- Extract hybrid features from each subject individually

- Implement feature-level and decision-level fusion strategies

- Apply cross-subject normalization techniques

- Performance Metrics:

- Compare individual vs. collaborative classification accuracy

- Evaluate cross-session performance maintenance

- Assess information transfer rate (ITR) consistency [25]
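
For the ITR metric, a common choice is the Wolpaw definition, sketched below. The source does not state which ITR formula [25] uses, so treat this as one standard option; the function name and the `trial_seconds` parameter are illustrative.

```python
import math


def wolpaw_itr(accuracy, n_classes, trial_seconds):
    """Information transfer rate in bits per minute (Wolpaw definition).

    bits per trial = log2(N) + P*log2(P) + (1 - P)*log2((1 - P) / (N - 1))
    The formula is normally applied only when accuracy is at or above chance.
    """
    p, n = accuracy, n_classes
    bits = math.log2(n)
    if 0.0 < p < 1.0:
        bits += p * math.log2(p) + (1.0 - p) * math.log2((1.0 - p) / (n - 1))
    elif p == 0.0:
        bits += math.log2(1.0 / (n - 1))
    return bits * 60.0 / trial_seconds
```

Comparing ITR between sessions, rather than accuracy alone, also exposes changes in trial duration or selection speed.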

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials for Hybrid BCI Research

| Item | Function | Specifications/Alternatives |

|---|---|---|

| EEG System | Electrical signal acquisition | 62+ channels, sampling rate ≥256 Hz, compatible with fNIRS synchronization |

| fNIRS System | Hemodynamic activity monitoring | Multiple wavelengths (690 nm, 830 nm), coverage of relevant cortical areas |

| Synchronization Interface | Temporal alignment of modalities | Hardware trigger box with <1 ms precision, common timestamping |

| Stimulus Presentation Software | Experimental paradigm delivery | Precision timing (<5 ms variance), trigger output capability |

| Signal Processing Suite | Feature extraction and analysis | Non-linear feature algorithms, connectivity measures, fusion capabilities |

| Validation Dataset | Method benchmarking | Publicly available cross-session hybrid BCI data [25] |

Advanced Diagnostic Procedures

Signal Quality Assessment Protocol

Purpose: To quantitatively evaluate signal integrity across sessions and modalities.

Implementation:

- EEG Quality Metrics:

- Signal-to-noise ratio (SNR) calculation in specific frequency bands

- Artifact contamination index based on amplitude and frequency characteristics

- Channel consistency across sessions using correlation measures

- fNIRS Quality Metrics:

- Signal quality index based on physiological plausibility

- Motion artifact quantification

- Optical density validation

Cross-Session Feature Stability Analysis

Purpose: To identify which hybrid features maintain discriminative power across sessions.

Method:

- Calculate per-feature session-to-session consistency scores

- Identify stable feature subsets using intra-class correlation coefficients

- Validate with classification performance using stable vs. full feature sets
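
A per-feature consistency score based on the intra-class correlation coefficient can be sketched as follows. The example computes the consistency form ICC(3,1) from a two-way ANOVA decomposition; which ICC variant suits a given study is a design decision, and the function name and the choice of rows (for example, subjects) are assumptions.

```python
import numpy as np


def icc_consistency(scores):
    """ICC(3,1) for one feature.

    scores: array of shape (n_targets, n_sessions), e.g. one row per subject
    and one column per session, holding that feature's value.
    """
    scores = np.asarray(scores, dtype=float)
    n, k = scores.shape
    grand = scores.mean()
    ss_rows = k * ((scores.mean(axis=1) - grand) ** 2).sum()   # between targets
    ss_cols = n * ((scores.mean(axis=0) - grand) ** 2).sum()   # between sessions
    ss_err = ((scores - grand) ** 2).sum() - ss_rows - ss_cols
    ms_rows = ss_rows / (n - 1)
    ms_err = ss_err / ((n - 1) * (k - 1))
    return (ms_rows - ms_err) / (ms_rows + (k - 1) * ms_err)
```

Features can then be ranked by this score, and the classifier re-evaluated on the top-ranked subset versus the full set. The consistency form ignores a constant offset between sessions, which matches pipelines that already normalize per session.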

Table: Feature Stability Metrics Across Sessions

| Feature Category | Stability Metric (ICC) | Recommended Usage in Cross-Session Models |

|---|---|---|

| Spectral Power Features | 0.45-0.65 | Moderate (with adaptation) |

| Functional Connectivity | 0.35-0.55 | Low-Moderate (requires normalization) |

| Non-linear Features (Entropy) | 0.60-0.75 | High (preferred for cross-session) |

| Phase-Based Features | 0.40-0.60 | Moderate (with session-specific calibration) |

This technical support center provides essential guidance for researchers working on Brain-Computer Interface (BCI) classification and confronting the challenge of cross-domain generalization. Domain Adaptation (DA) has emerged as a powerful set of techniques to address the distribution shifts caused by inter-subject variability (subject-related variations) and intra-subject changes across recording sessions (time-related variations) [27]. Here, you will find structured troubleshooting guides, experimental protocols, and FAQs designed to help you implement DA frameworks effectively in your BCI research, particularly within the context of cross-session and cross-subject classification consistency.

Quantitative Performance Comparison of DA Methods

The table below summarizes key performance metrics from recent DA studies, providing benchmarks for your own experiments.

Table 1: Performance of Domain Adaptation Methods in BCI Classification

| DA Method | Dataset(s) Used | Domain Shift Scenario | Key Metric | Reported Performance | Citation |

|---|---|---|---|---|---|

| DDAF-CORAL | BCI Competition II (dataset III), III (dataset IVa), IV (dataset IIb) | Cross-Subject | Average Accuracy | 83.3% | [28] [27] |

| DDAF-CORAL | BCI Competition II (dataset III), III (dataset IVa), IV (dataset IIb) | Within-Session | Average Accuracy | 92.9% | [28] [27] |

| DDAF-CORAL | BCI Competition II (dataset III), III (dataset IVa), IV (dataset IIb) | Cross-Session | Average Kappa | 0.761 | [28] [27] |

| Hybrid Feature Learning | Two cross-session EEG datasets | Cross-Session, Inter-Subject | Average Accuracy | 86.27% & 94.01% | [9] |

| ADFR | BCI Competition III (dataset IVa), IV (dataset IIb) | Cross-Subject | Average Accuracy Improvement | +3.0% & +2.1% (vs. SOTA) | [29] |

| DADL-Net | BCI Competition IV 2a, OpenBMI | Intra-Subject | Accuracy | 70.42% & 73.91% | [30] |

Essential Experimental Protocols

Implementing a Deep DA Framework with Correlation Alignment (DDAF-CORAL)

This protocol is ideal for tackling distribution divergence caused by both subject-related and time-related variations [28] [27].

Step-by-Step Methodology:

Network Architecture & Input:

- Design a two-stage deep learning network for automatic feature extraction from raw EEG data $x_i^s \in \mathbb{R}^{C \times T}$ (source) and $x_j^t \in \mathbb{R}^{C \times T}$ (target), where $C$ is the number of channels and $T$ is the number of time samples [27].

- The network should share parameters between the source and target feature extractors to learn a common representation.

Correlation Alignment (CORAL) Loss:

- Compute the covariance matrices of the deep features for the source ($C_s$) and target ($C_t$) domains.

- Calculate the CORAL loss ($L_{CORAL}$) as the squared Frobenius norm of the difference between the covariance matrices. This aligns the second-order statistics of the two distributions [28] [27].

- $L_{CORAL} = \frac{1}{4d^2} \lVert C_s - C_t \rVert_F^2$, where $d$ is the feature dimensionality.

Joint Optimization:

- Calculate a standard classification loss (e.g., cross-entropy, $L_{Class}$) using the labeled source data.

- Simultaneously optimize the network parameters by minimizing a combined loss function: $L_{Total} = L_{Class} + \lambda L_{CORAL}$, where $\lambda$ is a hyperparameter that balances the two objectives [27].

Troubleshooting:

- Problem: Model fails to converge or performance is poor on both domains.

- Solution: Adjust the weighting hyperparameter $\lambda$. Start with a small value (e.g., 0.1) and gradually increase. Ensure the learning rate is not too high.

- Problem: Overfitting to the source domain.

- Solution: Incorporate regularization techniques like dropout or batch normalization in the feature extraction network.
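
The CORAL loss and the combined objective from this protocol can be written out numerically as follows. This NumPy sketch only evaluates the two formulas on fixed feature matrices; in an actual DDAF-CORAL model the same expressions would be computed on network activations inside an automatic-differentiation framework. The function names and the default `lam=0.1` (the starting value suggested in the troubleshooting note) are illustrative.

```python
import numpy as np


def coral_loss(source_feats, target_feats):
    """CORAL loss: squared Frobenius norm of the covariance gap, scaled by 1 / (4 d^2).

    source_feats, target_feats: arrays of shape (n_samples, d) with d >= 2.
    """
    d = source_feats.shape[1]
    c_s = np.cov(source_feats, rowvar=False)   # (d, d) source covariance
    c_t = np.cov(target_feats, rowvar=False)   # (d, d) target covariance
    return float(np.sum((c_s - c_t) ** 2) / (4.0 * d * d))


def total_loss(class_loss, source_feats, target_feats, lam=0.1):
    """Combined objective: classification loss plus lambda times the CORAL loss."""
    return class_loss + lam * coral_loss(source_feats, target_feats)
```

Only the source batch needs labels: the classification term uses labeled source data, while the CORAL term uses unlabeled features from both domains.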

Label Alignment (LA) for Different Label Set DA

This protocol is crucial when your source and target domains have different label spaces, a common scenario in real-world BCI deployment [31].

Step-by-Step Methodology:

- Requirement: Obtain as few as one labeled sample from each class in the target subject's label space [31].

- Alignment: The core idea is to map the source domain's label space to align with the target domain's label space. This is often a pre-processing step and can be integrated with other DA methods [31].

- Model Training: After alignment, any standard feature extraction (e.g., xDAWN, CSP) and classification algorithm (e.g., SVM, LDA) can be applied to the aligned dataset.

Troubleshooting:

- Problem: The source domain has classes not present in the target domain, or vice versa.

- Solution: The LA approach is specifically designed for this. Use the minimal target labels to define the mapping function, effectively ignoring or re-mapping the irrelevant source classes [31].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for BCI Domain Adaptation Research

| Resource Name / Type | Primary Function | Relevance to DA in BCI |
|---|---|---|
| BCI Competition IV 2a | Public benchmark dataset for Motor Imagery (MI) | Standardized evaluation of cross-subject/model DA methods [30] [32]. |
| BCI Competition III IVa | Public benchmark dataset for Motor Imagery (MI) | Used for validating within- and cross-session DA performance [27] [29]. |
| Cross-Session RSVP Dataset | EEG dataset from collaborative target detection tasks | Facilitates development of cross-session and collaborative BCI algorithms [25]. |
| OpenBMI Dataset | Public MI-EEG dataset | Provides data for intra-subject and cross-dataset validation [30]. |
| Common Spatial Patterns (CSP) | Feature extraction algorithm for MI-EEG | Creates baseline features; often used as input for shallow DA methods [29]. |
| xDAWN | Feature extraction algorithm for ERP-based BCIs | Used to enhance the signal-to-noise ratio of ERP components like P300 [25]. |
| Maximum Mean Discrepancy (MMD) | A distance measure between distributions | Core component of many DA loss functions for aligning feature representations [29]. |

Frequently Asked Questions (FAQs)

Q1: My BCI model's performance drops drastically when applied to a new subject or even the same subject on a different day. What is the root cause?

A: This is a classic symptom of domain shift. The primary causes are:

- Subject-Related Variations: EEG signals are highly subject-specific due to differences in anatomy, neurophysiology, cognitive state (attention, stress), and other personal traits [27] [32].

- Time-Related Variations: Changes in electrode impedance, skin condition, user fatigue, and non-stationarity of the EEG signal itself lead to distribution shifts across sessions, even for the same subject [27] [9].

Your model, trained on the source domain's data distribution, fails to generalize to the target domain's shifted distribution.

Q2: When should I use MMD-based alignment versus CORAL-based alignment?

A: The choice depends on the nature of the distribution shift and your model's architecture.

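- MMD is a kernel-based method that minimizes the distance between the mean embeddings of the source and target feature distributions in a Reproducing Kernel Hilbert Space (RKHS) [29]. With a linear kernel this reduces to matching feature means (first-order statistics); with a characteristic kernel such as the Gaussian, it is also sensitive to differences in higher-order moments, which makes it effective for aligning general distributions.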

- CORAL aligns the second-order statistics (covariance) of the feature distributions. It is particularly effective when the distribution shift is characterized by a linear transformation or when the relationship between features is critical [28] [27].

- Recommendation: For deep learning models, CORAL can be more computationally efficient and integrate seamlessly into the network. MMD is a powerful non-parametric metric. Empirical testing on your specific dataset is the best way to decide. Some modern frameworks, like ADFR, even combine them with other regularizations [29].
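
To make the comparison concrete, the squared MMD between two feature sets can be estimated as below. This is a minimal sketch using a Gaussian (RBF) kernel and the simple biased estimator; the function names and the default bandwidth `gamma=1.0` are assumptions, and in practice the bandwidth is usually tuned or set from the median pairwise distance.

```python
import numpy as np

def rbf_kernel(a, b, gamma):
    """Gaussian kernel matrix exp(-gamma * ||a_i - b_j||^2) for rows of a and b."""
    sq_dists = (
        np.sum(a ** 2, axis=1)[:, None]
        + np.sum(b ** 2, axis=1)[None, :]
        - 2.0 * a @ b.T
    )
    return np.exp(-gamma * np.maximum(sq_dists, 0.0))  # clip tiny negatives

def mmd2(source_feats, target_feats, gamma=1.0):
    """Biased estimate of squared MMD between features shaped (n_trials, d)."""
    k_ss = rbf_kernel(source_feats, source_feats, gamma).mean()
    k_tt = rbf_kernel(target_feats, target_feats, gamma).mean()
    k_st = rbf_kernel(source_feats, target_feats, gamma).mean()
    return k_ss + k_tt - 2.0 * k_st
```

Used as a training loss, this term would take the place of L_CORAL in the combined objective, again weighted by a hyperparameter.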

Q3: I have very few (or no) labeled data for a new target subject. Can I still use Domain Adaptation?

A: Yes. This scenario is known as Unsupervised Domain Adaptation (UDA). Methods like DDAF-CORAL [28] [27] and the ADFR framework [29] are designed precisely for this. They leverage the labeled source data and the unlabeled target data to learn a domain-invariant feature representation, requiring no target labels for training. If you have as few as one label per target class, you can also consider the Label Alignment approach [31] or few-shot fine-tuning.

Q4: My domain-adapted model is not performing well. What are the first things I should check?

A: Follow this structured troubleshooting guide:

- Data Preprocessing: Verify that your source and target data have been preprocessed identically (filtering, epoching, artifact removal).

- Hyperparameter Tuning: The loss weighting parameter (e.g., λ in DDAF-CORAL) is critical. Perform a grid search over a reasonable range.

- Feature Quality: Check the features being learned. Visualize them using t-SNE or PCA to see if alignment is actually occurring. If source and target features remain separate clusters, your DA loss may be too weak.

- Source Domain Quality: Ensure your source model has high performance on held-out source data. A weak source model cannot be effectively adapted.

- Algorithm Selection: Confirm you are using an appropriate DA method for your data structure (e.g., Label Alignment for different label sets [31], CORAL for covariate shift).
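
The grid search over λ mentioned in the checklist can be organised as in the sketch below. `train_and_score` is a hypothetical callable that you would supply: it trains a model with the given λ and returns a validation score. The log-spaced candidate values are an assumption, not a recommendation from the cited studies. In a fully unsupervised setting the score has to come from held-out source data or from the few labeled target trials available.

```python
def grid_search_lambda(train_and_score, lambdas=(0.01, 0.03, 0.1, 0.3, 1.0, 3.0)):
    """Evaluate each candidate weight and return the best one plus all scores."""
    # train_and_score(lam) -> validation score (higher is better); user-supplied.
    results = {lam: train_and_score(lam) for lam in lambdas}
    best_lam = max(results, key=results.get)
    return best_lam, results
```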

Transfer Learning and Subject-Specific Fine-Tuning Strategies

This technical support guide addresses common challenges in motor imagery (MI) based Brain-Computer Interface (BCI) research, specifically focusing on maintaining classification performance across multiple EEG recording sessions.

Frequently Asked Questions & Troubleshooting Guides

FAQ 1: Why does my model's performance degrade significantly when tested on a new session from the same subject, and how can I fix this?

- Problem: Cross-session performance degradation is a primary challenge in BCI research. A model trained on one session often fails on new sessions due to the non-stationary nature of EEG signals. Benchmarking results show that while within-session (WS) classification can achieve 68.8% accuracy, direct cross-session (CS) classification can plummet to near-chance levels at 53.7% [33].

- Solution: Implement Cross-Session Adaptation (CSA). Studies demonstrate that using a small amount of data from the new target session to adapt the model can significantly boost performance, with CSA achieving up to 78.9% accuracy [33]. The following workflow outlines a proven domain adaptation process.

FAQ 2: What is a practical fine-tuning strategy for deploying a model across multiple longitudinal sessions?

- Problem: A single fine-tuning step on a new session may not be optimal for long-term use over many sessions, leading to instability or "catastrophic forgetting."

- Solution: Employ a continual fine-tuning strategy that successively builds upon the most recently adapted model for each new session. Research shows this approach improves both decoder performance and stability compared to strategies that always fine-tune from the original source model [34].

- Experimental Protocol:

- Initialization: Begin with a model pre-trained on source data.

- Sequential Adaptation: For each new session i, fine-tune the model from the previous session i-1 using a small amount of new calibration data.

- Online Test-Time Adaptation (OTTA): Complement this with OTTA to adapt the model to the evolving data distribution during deployment, enabling calibration-free operation in later sessions [34].

FAQ 3: My dataset is limited. How can I improve my model's generalization?

- Problem: Deep learning models require large, diverse datasets to perform well, which are often scarce and costly to collect in BCI research.

- Solution: Utilize a hybrid training approach with synthetic data.

- Experimental Protocol:

- Pre-training: Pre-train your model on a large dataset of synthetic EEG data generated using techniques like Generative Adversarial Networks (GANs) [4].

- Fine-tuning: Subsequently, fine-tune the pre-trained model on your smaller, real-world dataset. This method has been shown to improve accuracy and model generalization by approximately 6.89% compared to training on real data alone [18].

Performance Data & Method Comparison

The table below summarizes quantitative performance data from recent studies to help you benchmark your systems.

| Method / Model | Dataset(s) Used | Key Performance Metric(s) | Notes / Context |
|---|---|---|---|
| Hybrid CNN-LSTM [4] | PhysioNet EEG Motor Movement/Imagery Dataset | Accuracy: 96.06% | Combines spatial (CNN) and temporal (LSTM) feature extraction. |
| Ensemble RNCA (ERNCA) [35] | BCI Competition III Dataset IIIa, IVa & Real-time data | Accuracy: 97.22% (Dataset IIIa), 91.62% (Dataset IVa) | Uses channel selection and feature optimization. Effective for real-time data (93.75% accuracy). |
| Cross-Session Adaptation (CSA) [33] | 5-Session EEG Dataset (25 subjects) | Accuracy: 78.9% | Improves from 53.7% (non-adapted cross-session). Uses subject-specific models. |
| Siamese Deep Domain Adaptation (SDDA) [36] | BCI Competition IV IIA, IIB | Accuracy: 82.01% (IIA), 87.52% (IIB) | Boosts vanilla CNN performance by up to 15.2%. A universal framework. |
| EEGNet on 2-Class MI [37] | WBCIC-MI Dataset (62 subjects) | Accuracy: 85.32% (2-class), 76.90% (3-class with DeepConvNet) | Example of performance on a large, high-quality dataset. |
| Elastic Net Prediction Model [8] | Reduced-channel EEG | Accuracy: 78.16% (Range: 62.30% - 95.24%) | Uses only 8 central channels to predict a full 22-channel setup. |

The Scientist's Toolkit: Essential Research Reagents & Materials

The table below lists key computational and data resources essential for experiments in this field.

| Item Name | Function / Application in Research |
|---|---|
| Public EEG Datasets (e.g., BCI Competition IV IIA, IIB [36], PhysioNet [4]) | Standardized benchmarks for developing and validating new algorithms and models. |
| Pre-trained Deep Learning Models (e.g., EEGNet [37], ConvNet [36]) | Provide a strong baseline or starting point for transfer learning, reducing development time. |
| Domain Adaptation Frameworks (e.g., Siamese DDA [36]) | Toolboxes designed to mitigate the cross-session and cross-subject variability problem in EEG. |
| Channel Selection Algorithms (e.g., ERNCA [35]) | Identify the most relevant EEG channels for a specific task or subject, improving efficiency and accuracy. |
| Data Augmentation Tools (e.g., GANs for synthetic EEG [4]) | Generate artificial EEG data to augment small training datasets and improve model robustness. |
| Elastic Net Regression [8] | A regularization technique used for feature selection and predicting full-channel data from a few channels. |

Detailed Experimental Protocol: Subject-Specific Fine-Tuning

For researchers implementing the fine-tuning strategies discussed in FAQ 2, here is a detailed, step-by-step methodology.

Base Model Pre-training:

- Select a base architecture (e.g., EEGNet, a compact convolutional neural network) [37].

- Pre-train the model on a large and diverse source dataset, which could be a public dataset or aggregated data from multiple subjects. This model learns general features of MI tasks.

Sequential Fine-Tuning for New Sessions:

- For a new subject or a new session from a known subject, acquire a small calibration dataset (e.g., 5-10 trials per class).

- Initialization: Load the parameters from the model adapted on the previous session, not the original source model. This creates a continual learning chain [34].

- Fine-Tuning: Continue training the model on the new session's data. Use a very low learning rate to prevent catastrophic forgetting of previously learned, useful features. The goal is to gently shift the model's decision boundaries to accommodate the new session's signal distribution.
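
The chaining logic of this protocol can be illustrated with a deliberately small stand-in model. The sketch below uses a logistic-regression weight vector in place of a network such as EEGNet; the function names, the learning rate, and the step count are assumptions chosen for the example. The point it shows is that each session starts from the previous session's adapted weights, never from the original source model.

```python
import numpy as np

def fine_tune(weights, x, y, lr=0.01, n_steps=20):
    """A few low-learning-rate gradient steps of logistic regression.

    x: calibration features (n_trials, n_features); y: binary labels in {0, 1}.
    """
    w = weights.copy()  # leave the starting model untouched
    for _ in range(n_steps):
        probs = 1.0 / (1.0 + np.exp(-(x @ w)))
        w -= lr * (x.T @ (probs - y)) / len(y)
    return w

def continual_fine_tune(source_weights, sessions, lr=0.01, n_steps=20):
    """Adapt session by session, each time starting from the latest model."""
    chain = [source_weights]  # chain[0] is the pre-trained source model
    for x, y in sessions:
        chain.append(fine_tune(chain[-1], x, y, lr=lr, n_steps=n_steps))
    return chain  # chain[i] is the model used for session i
```

Keeping the whole chain, rather than only the latest weights, makes it easy to roll back if one session's calibration data turns out to be corrupted.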

Integrating Online Test-Time Adaptation (OTTA):

- During the actual use of the BCI in a session, the model can be further adapted in real-time.

- As new, unlabeled EEG trials are classified, selected internal quantities of the model (e.g., batch normalization statistics) can be updated to reflect the incoming data stream [34].

- This process helps the model adjust to slow drifts in the EEG signal within a single session, complementing the inter-session fine-tuning.
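
As an illustration of updating normalization statistics from unlabeled data, the sketch below keeps an exponential moving average of feature means and variances. It is a simplified stand-in for a batch normalization layer, not the procedure of the cited study; the class name, the `momentum` value, and the batch shape are assumptions.

```python
import numpy as np

class RunningNormalizer:
    """Feature normalizer whose statistics track the incoming data stream."""

    def __init__(self, mean, var, momentum=0.1, eps=1e-5):
        self.mean = np.asarray(mean, dtype=float).copy()
        self.var = np.asarray(var, dtype=float).copy()
        self.momentum = momentum
        self.eps = eps

    def update(self, features):
        """Blend statistics of an unlabeled batch (n_trials, n_features) in."""
        m = self.momentum
        self.mean = (1.0 - m) * self.mean + m * features.mean(axis=0)
        self.var = (1.0 - m) * self.var + m * features.var(axis=0)

    def __call__(self, features):
        """Normalize features with the current running statistics."""
        return (features - self.mean) / np.sqrt(self.var + self.eps)
```

A small momentum makes the statistics follow slow drifts while staying robust to single noisy trials; no labels are used at any point.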

Novel Pre-processing Methods for Constructing Domain-Invariant Features

This technical support center provides troubleshooting guides and frequently asked questions (FAQs) for researchers developing cross-session Brain-Computer Interface (BCI) classification methods. The resources below address common experimental challenges related to constructing domain-invariant features, a core requirement for models that generalize across different EEG recording sessions.

Troubleshooting Guides

Guide 1: Addressing Low Cross-Session Classification Accuracy

Problem: Your model performs well on the training session but shows significantly degraded accuracy on test sessions from the same subject.

Background: This is a classic symptom of inter-session variability, where the distribution of EEG features shifts across recording times due to the non-stationary nature of brain signals [5].

Solution Steps: