A Practical Guide to Evolutionary Algorithms for Neuron Model Fitting in Drug Development

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on implementing evolutionary algorithms (EAs) for optimizing neuron model parameters. It covers foundational EA principles tailored to biological modeling, detailed methodologies for integrating EAs into the Model-Informed Drug Development (MIDD) pipeline, strategies for overcoming common optimization challenges, and rigorous model validation techniques. By bridging computational intelligence and neuroscience, this guide aims to enhance the efficiency and predictive accuracy of neuropharmacological research, ultimately accelerating the development of safer and more effective neurological therapies.

Evolutionary Algorithms 101: Core Principles for Biological System Modeling

Application Notes: Evolutionary Algorithms in Neuron Model Fitting

Evolutionary Algorithms (EAs) offer a powerful, gradient-free alternative to gradient-based optimization (for example, gradient descent with backpropagation) for fitting complex biophysical neuron models. Their population-based search strategy is particularly valuable when dealing with non-differentiable objectives, noisy fitness landscapes, and the high-dimensional parameter spaces common in neuroscience research.

Performance Comparison of Optimization Algorithms

The table below summarizes quantitative performance data for evolutionary algorithms and competing methods in neuronal parameter estimation tasks.

Table 1: Performance Comparison of Neuron Model Optimization Algorithms

| Algorithm | Task | Parameters Optimized | Performance vs. Alternatives | Key Advantage | Citation |
|---|---|---|---|---|---|
| EP-GAN (Generative) | HH-model fitting for C. elegans | 175 parameters | Higher accuracy & ~1000x faster inference than DE/GA | Instant parameter generation after training | [1] |
| Evolutionary Algorithms (EAs) | Training spiking/analog networks | Architecture & weights | Matches gradient-based methods on MNIST/Atari | Gradient-free; biologically plausible | [2] |
| Differential Evolution (DE) | HH-model fitting | 22 parameters | Baseline for comparison | Robust to local minima | [1] |
| Gradient Descent (Jaxley) | Fit biophysical models to data | 19 parameters | 10x fewer simulations than GA | Efficient for differentiable systems | [3] |

Key Experimental Workflows

The application of EAs in neuroscience primarily follows two distinct workflows, each with a specific experimental objective:

Direct Parameter Optimization: This approach uses EAs to directly estimate the parameters of a pre-defined neuron model. The evolutionary algorithm searches the parameter space to find values that minimize the difference between the model's output and experimental electrophysiological recordings [1]. This is effective for fitting models to specific cell data.

Task-Driven Network Synthesis: Here, EAs are used to evolve neural network architectures and weights to perform specific computational tasks, such as classification or working memory. The fitness is defined by the network's performance on the task (e.g., accuracy, reward), allowing the discovery of effective, and sometimes biologically plausible, circuits without direct data fitting [2] [4].

Experimental Protocols

Protocol: Fitting a Hodgkin-Huxley Model using an Evolutionary Algorithm

This protocol details the steps for estimating parameters of a biophysical neuron model using an EA, based on methodologies from cited research [1].

2.1.1. Research Reagent Solutions

Table 2: Essential Materials for HH-Model Fitting

| Item Name | Function / Description | Example Sources/Tools |
|---|---|---|
| Electrophysiology Rig | Records ground-truth membrane potential responses and steady-state current profiles from the target neuron. | Patch-clamp setup; Intracellular recorder |
| Hodgkin-Huxley Model Formulation | The mathematical framework defining the structure of the biophysical model to be fitted. | Equations for sodium, potassium, and leak currents, plus any additional channel types. |
| Evolutionary Algorithm Software | Software platform implementing the core EA optimization routines (e.g., Differential Evolution). | Python (DEAP, SciPy), MATLAB |
| Neural Simulator | Software to simulate the HH-model with candidate parameters and generate output for fitness calculation. | NEURON, Brian, Jaxley, custom ODE solvers |

2.1.2. Step-by-Step Methodology

Data Acquisition and Preprocessing (Timing: 1-3 days)

- Acquire whole-cell recordings of the target neuron's membrane potential responses to a set of current injection protocols [1].

- If available, record steady-state current profiles for different voltage steps.

- Preprocess the data: filter signals to remove noise, and extract key features or full traces to be used in the fitness calculation.

Problem Formulation (Timing: 1-2 hours)

- Define the HH-model structure: Specify the number and type of ionic currents (e.g., Na+, K+, Ca2+) to be included.

- Set parameter bounds: Establish realistic minimum and maximum values for each parameter to be optimized (e.g., maximum channel conductances, time constants).

- Define the fitness function: This function quantifies the difference between the simulated and recorded data. A common choice is the mean squared error (MSE) between the simulated and recorded voltage traces. For multi-objective optimization, the function may also include error terms for fitting current profiles [1].
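The bounds and the MSE fitness described in this step can be sketched as follows. This is a minimal illustration, not the cited study's implementation: the parameter names, units, and ranges in `PARAM_BOUNDS` are hypothetical placeholders, and both traces are assumed to be sampled on the same time grid.

```python
import numpy as np

# Hypothetical parameter bounds (names, units, and ranges are illustrative assumptions).
PARAM_BOUNDS = {
    "g_na": (50.0, 200.0),   # maximum sodium conductance, mS/cm^2
    "g_k": (10.0, 80.0),     # maximum potassium conductance, mS/cm^2
    "g_leak": (0.05, 1.0),   # leak conductance, mS/cm^2
}


def within_bounds(params):
    """Return True if every parameter lies inside its allowed range."""
    return all(lo <= params[name] <= hi for name, (lo, hi) in PARAM_BOUNDS.items())


def mse_fitness(simulated_mv, recorded_mv):
    """Mean squared error between two voltage traces on the same time grid."""
    simulated_mv = np.asarray(simulated_mv, dtype=float)
    recorded_mv = np.asarray(recorded_mv, dtype=float)
    if simulated_mv.shape != recorded_mv.shape:
        raise ValueError("traces must have the same shape")
    return float(np.mean((simulated_mv - recorded_mv) ** 2))
```

Lower values indicate a better fit, so an EA using this function would minimize it.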

EA Initialization (Timing: 5 minutes)

- Set EA hyperparameters: Choose a population size (e.g., 50-100 individuals), mutation rate, crossover rate, and the number of generations.

- Initialize population: Randomly generate the initial population of candidate solutions (parameter sets) within the pre-defined bounds.
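Random initialization within bounds might look like the sketch below. The bound values and the population size of 50 are illustrative assumptions; uniform sampling is one common choice among several.

```python
import numpy as np


def init_population(lower, upper, pop_size, seed=None):
    """Draw pop_size parameter vectors uniformly between lower and upper bounds."""
    rng = np.random.default_rng(seed)
    lower = np.asarray(lower, dtype=float)
    upper = np.asarray(upper, dtype=float)
    # Each row is one candidate parameter set; each column is one parameter.
    return rng.uniform(lower, upper, size=(pop_size, lower.size))


# Three hypothetical conductance parameters with illustrative bounds.
population = init_population([50.0, 10.0, 0.05], [200.0, 80.0, 1.0], pop_size=50, seed=0)
```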

Evolutionary Optimization Loop (Timing: Hours to days, depending on model complexity)

For each generation:

a. Evaluation: For each candidate parameter set in the population, run a simulation of the HH-model. Calculate its fitness by comparing the simulation output to the experimental data.

b. Selection: Select the best-performing individuals as parents for the next generation, based on their fitness scores.

c. Variation: Apply genetic operators (crossover and mutation) to the parents to create a new generation of offspring candidate solutions.

d. Termination Check: Repeat the loop until a stopping criterion is met (e.g., a maximum number of generations is reached, or the fitness improvement plateaus).
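The evaluate, select, vary, and terminate cycle can be sketched on a toy problem. Everything here is an assumption made for illustration: a two-parameter exponential relaxation stands in for the HH simulation, and the operators (truncation selection, uniform crossover, Gaussian mutation, single-individual elitism) and hyperparameters are arbitrary choices rather than settings from the cited work.

```python
import numpy as np

rng = np.random.default_rng(0)
t = np.linspace(0.0, 50.0, 200)                      # time axis in ms


def simulate(params):
    """Toy stand-in for an HH simulation: a * exp(-t / tau)."""
    a, tau = params
    return a * np.exp(-t / tau)


target = simulate(np.array([10.0, 8.0]))             # synthetic "recording"
lower, upper = np.array([1.0, 1.0]), np.array([20.0, 30.0])


def fitness(params):
    """Mean squared error against the target trace (lower is better)."""
    return np.mean((simulate(params) - target) ** 2)


pop = rng.uniform(lower, upper, size=(60, 2))
for generation in range(100):
    errors = np.array([fitness(p) for p in pop])     # a. evaluation
    order = np.argsort(errors)
    if errors[order[0]] < 1e-6:                      # d. termination check
        break
    parents = pop[order[:20]]                        # b. selection (truncation)
    # c. variation: uniform crossover of random parent pairs, then Gaussian mutation
    n_children = len(pop) - 1
    idx_a = rng.integers(0, len(parents), size=n_children)
    idx_b = rng.integers(0, len(parents), size=n_children)
    mask = rng.random((n_children, 2)) < 0.5
    children = np.where(mask, parents[idx_a], parents[idx_b])
    children += rng.normal(0.0, 0.02 * (upper - lower), size=children.shape)
    children = np.clip(children, lower, upper)       # keep offspring inside bounds
    pop = np.vstack([parents[:1], children])         # elitism: carry over the best

best = min(pop, key=fitness)
```

In a real fit, `simulate` would call the neural simulator, which is where nearly all of the runtime is spent.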

Validation and Analysis (Timing: 2-4 hours)

- Validate the best-performing parameter set by simulating the model with a novel current injection protocol (not used during training) and comparing the output to a corresponding experimental recording.

- Analyze the robustness of the solution, for example, by examining the distribution of key parameters in the final population or performing a sensitivity analysis.

Protocol: Electrophysiological Data Preprocessing for Model Fitting

High-quality data preprocessing is critical for successful model fitting. This protocol is adapted from methods used for machine learning-based neuronal classification [5].

2.2.1. Step-by-Step Methodology

Spike Sorting (Timing: 1-3 days)

- Import raw electrophysiological data files (e.g., .pl2, .plx) into specialized software like Offline Sorter [5].

- Perform automatic or manual spike sorting to isolate action potentials from individual neurons. This typically involves projecting spike waveforms into a 2D feature space and applying a clustering algorithm like K-means to assign spikes to distinct units [5].

Waveform Processing (Timing: 1-2 hours)

- For each isolated neuron, average its spike waveforms to obtain a clean, representative waveform.

- Normalize waveforms (e.g., by amplitude or to a unit vector) to standardize for downstream analysis.
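Averaging and normalization can be sketched as below, assuming the spike snippets are already aligned and stored as an array of shape (number of spikes, samples per spike). The function names are hypothetical.

```python
import numpy as np


def mean_waveform(spikes):
    """Average aligned spike snippets (n_spikes x n_samples) into one waveform."""
    return np.asarray(spikes, dtype=float).mean(axis=0)


def normalize_peak(waveform):
    """Scale so the largest absolute deflection equals 1."""
    return waveform / np.max(np.abs(waveform))


def normalize_unit(waveform):
    """Scale to unit Euclidean norm."""
    return waveform / np.linalg.norm(waveform)
```

Which normalization is appropriate depends on the downstream analysis; amplitude normalization preserves waveform shape while discarding absolute size.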

Feature Extraction (for classification tasks) (Timing: 30 minutes)

- Extract characteristic features from the averaged waveform, such as peak amplitude, trough depth, spike width, and repolarization time.

- These features can be used to classify neuronal subtypes (e.g., inhibitory vs. excitatory) using machine learning tools like WaveMAP, which uses UMAP for dimensionality reduction and Louvain clustering [5].
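A few of the waveform features named above can be computed as in the following sketch. Feature definitions vary between studies; here the trough is taken as the global minimum, the peak as the maximum after the trough, and the width as the trough-to-peak interval. These definitions, and the function name, are assumptions rather than the conventions of the cited tools.

```python
import numpy as np


def waveform_features(waveform, fs_hz):
    """Extract simple shape features from an averaged extracellular waveform."""
    w = np.asarray(waveform, dtype=float)
    trough_idx = int(np.argmin(w))                           # most negative sample
    peak_idx = trough_idx + int(np.argmax(w[trough_idx:]))   # maximum after the trough
    return {
        "trough_depth": float(w[trough_idx]),
        "peak_amplitude": float(w[peak_idx]),
        # Sample count converted to milliseconds using the sampling rate.
        "trough_to_peak_ms": (peak_idx - trough_idx) / fs_hz * 1e3,
    }
```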

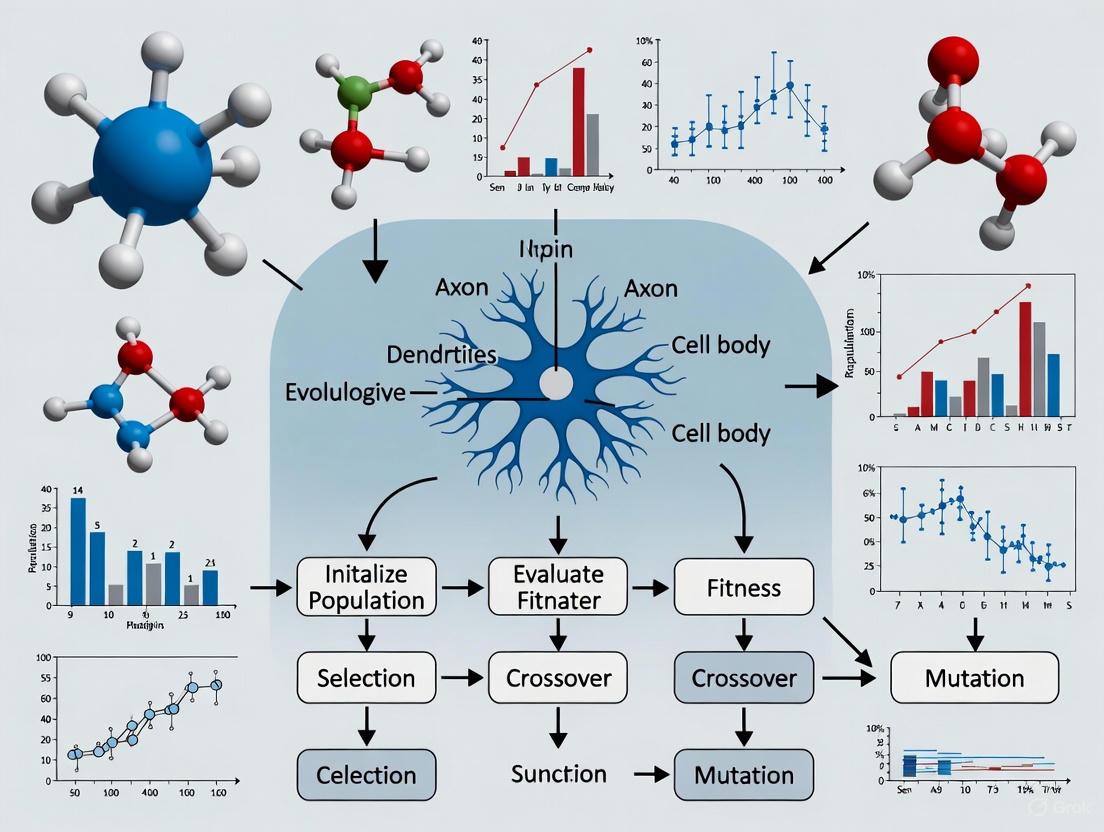

Workflow Visualization

The following diagram illustrates the logical flow of a typical evolutionary algorithm applied to neuron model fitting.

Evolutionary Algorithms (EAs) represent a family of nature-inspired optimization techniques that have demonstrated remarkable effectiveness in tackling complex scientific computing problems, particularly in domains where traditional gradient-based methods struggle. These population-based metaheuristics are especially valuable for handling high-dimensional, non-convex, noisy, or multi-modal optimization landscapes commonly encountered in scientific research. Within neuroscience and drug development, EAs have become indispensable tools for parameter estimation in complex neuronal models, a process critical for understanding neural circuitry and neurological disease mechanisms.

The adaptation of EAs for scientific applications addresses several fundamental challenges in computational biology: poorly-characterized objective function landscapes, the presence of numerous local optima, and the need to integrate diverse experimental data types. By implementing biologically-inspired operations including mutation, crossover, and selection, EAs can efficiently explore vast parameter spaces while avoiding premature convergence to suboptimal solutions. For researchers fitting biophysical neuron models, these characteristics translate to an enhanced ability to determine the ion channel distributions and membrane properties that govern neuronal electrical behavior, ultimately supporting more accurate simulations of neural network dynamics.

This article focuses on three cornerstone EA variants—Genetic Algorithms (GAs), Differential Evolution (DE), and Evolution Strategies (ES)—that have demonstrated particular utility in scientific computing applications. We present a structured analysis of their operational principles, performance characteristics, and implementation protocols tailored to the specific requirements of neuronal model fitting, providing researchers with practical guidance for deploying these powerful optimization techniques in their computational workflows.

Algorithmic Foundations and Comparative Analysis

Genetic Algorithms (GAs)

Genetic Algorithms operate on a population of candidate solutions, typically encoded as fixed-length chromosomes, through selection, crossover, and mutation operations. The fundamental GA workflow begins with population initialization, followed by iterative fitness evaluation and application of genetic operators to create successive generations. Selection mechanisms favor individuals with higher fitness—those that better solve the optimization problem—while crossover recombines genetic material from parent solutions and mutation introduces random perturbations to maintain population diversity [6].

In neuronal model fitting, GAs excel at exploring discrete and continuous parameter spaces simultaneously, making them suitable for optimizing both model topology and kinetic parameters. Their representation flexibility allows researchers to encode various model aspects, including ion channel densities, synaptic weights, and morphological parameters within a unified chromosome representation. The main advantage of GAs lies in their global search capabilities, which help identify promising regions of complex parameter spaces before fine-tuning solutions [6].

Differential Evolution (DE)

Differential Evolution specializes in optimizing continuous parameters through vector-based operations, making it particularly well-suited for kinetic parameter estimation in neuronal models. DE generates new candidate solutions by combining scaled differences between population members with existing solutions, creating a search behavior that automatically adapts to the objective function landscape. The algorithm's performance heavily depends on its mutation strategy and control parameters (mutation factor and crossover rate), which determine the evolutionary scale of the search process [7].
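To make the "scaled differences" idea concrete, here is a minimal sketch of the classical DE/rand/1/bin scheme with greedy replacement. It is a textbook form, not ESADE or any specific library's implementation; the values of `F`, `CR`, population size, and generation count are illustrative defaults.

```python
import numpy as np


def de_rand_1_bin(pop, i, F, CR, rng):
    """Build one trial vector for target index i using DE/rand/1/bin."""
    n, d = pop.shape
    candidates = [j for j in range(n) if j != i]
    r1, r2, r3 = rng.choice(candidates, size=3, replace=False)
    mutant = pop[r1] + F * (pop[r2] - pop[r3])    # base vector plus scaled difference
    cross = rng.random(d) < CR                    # binomial crossover mask
    cross[rng.integers(d)] = True                 # force at least one mutant component
    return np.where(cross, mutant, pop[i])


def minimize_de(f, lower, upper, pop_size=20, F=0.6, CR=0.9, generations=100, seed=0):
    """Minimize f inside box bounds with a basic DE loop."""
    rng = np.random.default_rng(seed)
    pop = rng.uniform(lower, upper, size=(pop_size, len(lower)))
    cost = np.array([f(x) for x in pop])
    for _ in range(generations):
        for i in range(pop_size):
            trial = np.clip(de_rand_1_bin(pop, i, F, CR, rng), lower, upper)
            c = f(trial)
            if c <= cost[i]:                      # greedy one-to-one replacement
                pop[i], cost[i] = trial, c
    best = int(np.argmin(cost))
    return pop[best], float(cost[best])
```

Because the difference vectors shrink as the population contracts, the step size scales with the current spread of candidates without an explicit schedule.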

Recent advances in DE include the Evolutionary Scale Adaptation DE (ESADE), which introduces a successful scale estimation mechanism that utilizes feedback from trial vectors to dynamically adjust the evolutionary scale. This adaptation allows ESADE to match search requirements across different evolutionary stages, employing either small or large evolutionary steps as appropriate [7]. For neuronal model fitting, this adaptability translates to more efficient exploration of high-dimensional parameter spaces, as demonstrated by ESADE's superior performance on benchmark functions and real-world optimization problems compared to classical DE approaches [7].

Evolution Strategies (ES)

Evolution Strategies distinguish themselves through their self-adaptive mechanism for strategy parameters, particularly the mutation strengths applied to different dimensions. Modern ES variants, particularly the Covariance Matrix Adaptation ES (CMA-ES), implement a sophisticated adaptation of the covariance matrix that determines the mutation distribution, effectively learning second-order information about the objective function topology [6] [8].

The CMA-ES algorithm has emerged as a state-of-the-art approach for complex optimization problems in scientific computing due to its invariance to problem scaling and rotation. Its ability to automatically adapt the search distribution to the local objective function landscape makes it exceptionally effective for ill-conditioned problems where parameters exhibit different sensitivities and strong correlations [6] [8]. In neuronal model fitting, this capability is invaluable for handling the disparate time scales and parameter interactions characteristic of ion channel kinetics and synaptic transmission mechanisms.
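The simplest form of step-size adaptation in an evolution strategy is the (1+1)-ES with a 1/5 success rule, sketched below. This is deliberately not CMA-ES: it adapts only a single global step size and learns nothing about parameter correlations. The update factor of 1.5 is an illustrative assumption.

```python
import numpy as np


def one_plus_one_es(f, x0, sigma0=1.0, iterations=500, seed=0):
    """(1+1)-ES with a 1/5 success rule for the global step size sigma."""
    rng = np.random.default_rng(seed)
    x = np.asarray(x0, dtype=float)
    fx, sigma = f(x), sigma0
    for _ in range(iterations):
        y = x + sigma * rng.standard_normal(x.size)   # isotropic Gaussian mutation
        fy = f(y)
        if fy <= fx:
            x, fx = y, fy
            sigma *= 1.5                # success: take larger steps
        else:
            sigma *= 1.5 ** -0.25       # failure: shrink; balanced at a 1/5 success rate
    return x, fx, sigma
```

CMA-ES generalizes this idea by adapting a full covariance matrix alongside the step size, which is what lets it handle correlated and differently scaled parameters.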

Table 1: Comparative Analysis of Key Evolutionary Algorithm Variants

| Algorithm | Representation | Key Operators | Adaptation Mechanism | Strengths in Neuronal Model Fitting |
|---|---|---|---|---|
| Genetic Algorithms (GAs) | Binary, Integer, Real-valued | Selection, Crossover, Mutation | Parameter control through algorithm tuning | Flexible representation for mixed parameters; Effective global search |
| Differential Evolution (DE) | Real-valued vector | Mutation, Crossover | Evolutionary scale adaptation [7] | Efficient continuous optimization; Self-adaptive step sizes |
| Evolution Strategies (ES) | Real-valued vector | Mutation, Selection | Covariance matrix adaptation [6] | Handles parameter correlations; Invariant to problem transformations |

Application Protocols for Neuronal Model Fitting

Problem Formulation and Fitness Function Design

The foundational step in applying EAs to neuronal model fitting involves formulating an appropriate fitness function that quantifies the discrepancy between model output and experimental data. For biophysical neuronal models, this typically involves comparing simulated membrane potentials with electrophysiological recordings across multiple stimulation protocols. The fitness function often incorporates multiple objective components, including features such as action potential timing, shape characteristics, firing rates, and subthreshold dynamics [9].

A practical approach implements fitness computation through electrophysiological feature extraction, where specific characteristics of neuronal activity are quantified and compared. The fitness (F) can be represented as a weighted sum of individual feature discrepancies: $F = \sum_i w_i \cdot \lvert f_i^{\text{simulated}} - f_i^{\text{experimental}} \rvert$, where $w_i$ represents the weight assigned to feature $i$ based on its importance and reliability. This multi-objective formulation ensures that the optimization process captures the essential electrophysiological properties of the target neuron rather than simply minimizing point-wise differences in membrane potential [9].
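The weighted sum of feature discrepancies translates almost directly into code. In this sketch the feature names, values, and weights are hypothetical; in practice the features would come from a feature-extraction library applied to simulated and recorded traces.

```python
def weighted_feature_error(simulated, experimental, weights):
    """Weighted sum of absolute feature differences (lower is better)."""
    return sum(
        w * abs(simulated[name] - experimental[name])
        for name, w in weights.items()
    )


# Hypothetical features and weights, for illustration only.
sim_features = {"spike_count": 12.0, "ap_width_ms": 1.5}
exp_features = {"spike_count": 10.0, "ap_width_ms": 1.0}
feature_weights = {"spike_count": 1.0, "ap_width_ms": 2.0}

error = weighted_feature_error(sim_features, exp_features, feature_weights)
```

Because features have different units and magnitudes, the weights effectively also act as scaling factors, so they deserve as much care as the parameter bounds.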

Implementation Framework for High-Performance Computing

Modern neuronal model fitting with EAs demands substantial computational resources, necessitating implementation strategies that leverage high-performance computing architectures. The NeuroGPU-EA framework demonstrates an optimized approach that utilizes both CPUs and GPUs concurrently to accelerate the simulate-evaluate loop central to evolutionary optimization [9]. This implementation employs three scaling strategies to manage computational resources efficiently:

- Compute Fixed and Problem Scales: The number of neuron models in the EA population increases while computing resources remain fixed

- Strong Scaling (Compute Scales and Problem Fixed): Computing resources increase while the problem size remains constant

- Weak Scaling (Compute Scales and Problem Scales): Both computing resources and problem size increase at a fixed ratio [9]

This framework achieves a 10× performance improvement over CPU-based EA implementations by parallelizing neuronal simulations across GPU resources and efficiently distributing fitness evaluations across available computing nodes. The implementation demonstrates logarithmic cost scaling when increasing the number of stimuli used in the fitting procedure, making it practical to incorporate diverse experimental protocols that enhance model robustness and generalizability [9].
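Scaling experiments of the kind listed above are usually summarized with speedup and parallel efficiency. The sketch below uses the standard textbook definitions; the function names are hypothetical, and the timings in the usage lines are made-up numbers, not results from the cited framework.

```python
def strong_scaling(t_base, t_n, n_units):
    """Speedup and efficiency when the problem is fixed and compute grows n-fold."""
    speedup = t_base / t_n
    return speedup, speedup / n_units


def weak_scaling_efficiency(t_base, t_n):
    """Efficiency when problem size and compute grow together (ideal value is 1.0)."""
    return t_base / t_n


# Made-up timings in seconds, for illustration only.
speedup, efficiency = strong_scaling(t_base=100.0, t_n=25.0, n_units=8)
weak_eff = weak_scaling_efficiency(t_base=100.0, t_n=125.0)
```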

Specialized EA Variants for Sparse and High-Dimensional Problems

Large-scale sparse multi-objective optimization problems (LSSMOPs) frequently arise in neuronal modeling contexts, particularly when optimizing network connectivity or channel distributions where most parameters should be zero. Standard EAs perform undifferentiated operations on all decision variables, reducing search efficiency and producing solutions that fail to meet sparsity requirements [10]. The SparseEA algorithm addresses this challenge through a bi-level encoding strategy that represents solutions using both continuous decision variables and binary mask vectors that control parameter sparsity [10].
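The bi-level encoding idea can be illustrated in a few lines. This is only a sketch of the representation (a real-valued vector gated by a binary mask), not the published SparseEA algorithm; the vector length, the initial mask density, and the `flip_one_bit` helper are assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)
n_vars = 10

dec = rng.uniform(0.0, 1.0, n_vars)     # continuous decision variables
mask = rng.random(n_vars) < 0.2         # binary mask: True keeps the variable active
solution = dec * mask                   # masked-out variables become exactly zero


def flip_one_bit(mask, rng):
    """Mutate the mask by toggling a single randomly chosen entry."""
    new_mask = mask.copy()
    j = rng.integers(new_mask.size)
    new_mask[j] = not new_mask[j]
    return new_mask


mutated_mask = flip_one_bit(mask, rng)
```

Separating "which parameters are active" from "what value they take" lets the search operators control sparsity directly instead of waiting for values to drift to zero.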

The enhanced SparseEA-AGDS framework introduces an adaptive genetic operator and dynamic scoring mechanism that adjusts crossover and mutation probabilities based on non-dominated layer levels of individuals. This approach updates decision variable scores iteratively, enabling superior individuals to receive increased genetic opportunities while maintaining solution sparsity [10]. For neuronal model fitting, this translates to more efficient optimization of high-dimensional parameter spaces where only a subset of parameters significantly influences model behavior, such as when determining the minimal set of ion channel types needed to reproduce specific electrophysiological phenotypes.

Table 2: Performance Comparison of EA Variants on Scientific Computing Tasks

| Algorithm | Parameter Recovery Accuracy | Computational Efficiency | Noise Resilience | Implementation Complexity |
|---|---|---|---|---|
| CMA-ES | High for correlated parameters [8] | Moderate to High [8] | Moderate | High |
| Differential Evolution | Variable (problem-dependent) [8] | High [7] | Low to Moderate | Low |
| SparseEA-AGDS | High for sparse problems [10] | High for high-dimensional problems [10] | Not Reported | Moderate |
| Genetic Algorithms | Moderate | Low to Moderate | Moderate | Low |

Experimental Protocols and Benchmarking

Protocol 1: Kinetic Parameter Estimation for Neural Models

Objective: Determine the kinetic parameters of ion channel and synaptic models that minimize the discrepancy between simulated and experimental neuronal activity.

Materials and Reagents:

- Experimental electrophysiology data: Voltage clamp, current clamp, or dynamic clamp recordings from target neurons

- Biophysical neuron model: Compartmental model implemented in simulation environments (NEURON, GENESIS, Brian)

- Computational resources: Multi-core CPUs or GPU acceleration for parallel simulation

Procedure:

- Parameter Boundary Definition: Establish physiologically plausible lower and upper bounds for each kinetic parameter based on literature and experimental constraints

- EA Initialization:

  - Population size: 100-1000 individuals (increases with parameter dimension)

  - Mutation strategy: DE/rand/1/bin for DE or Gaussian mutation for ES

  - Crossover: Binomial for DE, simulated binary for real-coded GA

- Parallel Evaluation:

  - Distribute population individuals across available computing cores

  - Run neuronal simulations with identical stimulus protocols

  - Extract features from simulated voltage traces (action potential properties, firing rates, etc.)

- Fitness Computation: Calculate multi-objective fitness incorporating weighted feature differences

- Evolutionary Operations: Apply selection, mutation, and crossover to generate offspring population

- Termination Check: Continue until fitness improvement falls below threshold or maximum generations reached

Validation: Assess model generalizability using novel stimulus patterns not included in the fitting process [9] [8]
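The parallel evaluation step of this procedure can be sketched with Python's standard library. The `evaluate_individual` function is a hypothetical stand-in for "simulate, extract features, score". A thread pool is used here only to keep the sketch simple and portable; for CPU-bound simulators a process pool, MPI, or GPU batching would normally be the better fit.

```python
from concurrent.futures import ThreadPoolExecutor

import numpy as np


def evaluate_individual(params):
    """Hypothetical stand-in for one simulate-and-score evaluation."""
    return float(np.sum((np.asarray(params, dtype=float) - 1.0) ** 2))


def evaluate_population(population, max_workers=4):
    """Score every individual concurrently; results keep the population order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(evaluate_individual, population))


scores = evaluate_population([[1.0, 1.0], [0.0, 1.0], [3.0, 1.0]])
```

Because individuals within a generation are independent, this step parallelizes cleanly, which is why population-based methods map well onto clusters.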

Protocol 2: Benchmarking EA Performance for Specific Reaction Kinetics

Objective: Evaluate the effectiveness of different EA variants for estimating parameters of specific biochemical reaction kinetics relevant to neuronal signaling.

Experimental Design:

- Kinetic Formulations: Test across multiple reaction kinetics types:

  - Generalized Mass Action (GMA)

  - Michaelis-Menten kinetics

  - Linear-logarithmic (Linlog) kinetics

- Noise Conditions: Evaluate performance under varying levels of measurement noise (0-20% coefficient of variation)

- Performance Metrics:

  - Parameter recovery accuracy (mean squared error between true and estimated parameters)

  - Computational cost (function evaluations and processing time)

  - Convergence reliability (success rate across multiple independent runs)
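The building blocks of such a benchmark can be sketched as follows, using Michaelis-Menten kinetics as the example. The multiplicative Gaussian noise model, the tolerance-based definition of a successful run, and all function names are assumptions made for illustration; the cited study may define them differently.

```python
import numpy as np


def michaelis_menten(s, vmax, km):
    """Reaction rate v = vmax * s / (km + s)."""
    return vmax * s / (km + s)


def add_noise(values, cv, rng):
    """Multiplicative Gaussian noise with coefficient of variation cv."""
    return values * (1.0 + cv * rng.standard_normal(values.shape))


def parameter_mse(true_params, estimated_params):
    """Mean squared error between true and estimated parameter vectors."""
    t = np.asarray(true_params, dtype=float)
    e = np.asarray(estimated_params, dtype=float)
    return float(np.mean((t - e) ** 2))


def success_rate(errors, tolerance):
    """Fraction of independent runs whose error is within the tolerance."""
    return float(np.mean(np.asarray(errors) <= tolerance))


rng = np.random.default_rng(0)
substrate = np.linspace(0.1, 10.0, 25)
noisy_rates = add_noise(michaelis_menten(substrate, vmax=10.0, km=2.0), cv=0.1, rng=rng)
```

Each EA variant would then be run repeatedly on `noisy_rates` at several noise levels, with `parameter_mse` and `success_rate` computed over the runs.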

Implementation Notes:

- For GMA kinetics, CMA-ES requires fewer computational resources while maintaining accuracy [8]

- For Michaelis-Menten kinetics, G3PCX achieves superior performance with substantial computational savings [8]

- Under high noise conditions, SRES and ISRES demonstrate improved reliability for GMA kinetics at higher computational cost [8]

Table 3: Essential Research Reagents and Computational Tools for EA-Based Neuronal Model Fitting

| Tool/Resource | Function | Application Notes |
|---|---|---|
| NEURON Simulation Environment [9] | Simulates electrical activity of neurons | Industry standard for compartmental modeling; GPU acceleration available |
| CoreNeuron [9] | Optimized simulator for HPC systems | Used in NeuroGPU-EA for massively parallel simulation |
| Electrophysiological Feature Extraction Libraries [9] | Quantifies spike train characteristics | Critical for fitness computation; implementations vary in computational efficiency |
| CMA-ES Implementation [8] | Advanced evolution strategy | Preferred for correlated parameter spaces; self-adaptive |
| SparseEA-AGDS Framework [10] | Handles large-scale sparse optimization | Essential for connectivity optimization and channel distribution problems |
| High-Performance Computing Cluster [9] | Provides parallel processing resources | Enables weak and strong scaling experiments; reduces fitting time from weeks to hours |

Evolutionary Algorithms provide a powerful, flexible framework for addressing the complex optimization challenges inherent in neuronal model fitting. The three primary variants discussed—Genetic Algorithms, Differential Evolution, and Evolution Strategies—each offer distinct advantages for different aspects of the parameter estimation problem. GAs provide representation flexibility, DE offers efficient continuous optimization, and ES delivers sophisticated adaptation to problem geometry. The emergence of specialized variants like ESADE and SparseEA-AGDS further extends these capabilities to handle specific challenges such as evolutionary scale adaptation and high-dimensional sparse optimization.

Future developments in EA research will likely focus on enhanced hybridization with local search methods, improved adaptation mechanisms, and tighter integration with machine learning approaches. For neuronal model fitting specifically, we anticipate increased emphasis on multi-objective optimization that simultaneously fits multiple electrophysiological phenotypes and experimental conditions. As computational resources continue to grow, EAs will play an increasingly central role in building biologically-realistic neuronal models that bridge molecular mechanisms and system-level neural computations, ultimately advancing our understanding of brain function and dysfunction.

Fitting computational models to neuronal data is a cornerstone of modern neuroscience, essential for understanding brain function and dysfunction. This process, however, is fraught with challenges due to the inherently nonlinear nature of neuronal dynamics and the high-dimensional parameter spaces of biologically realistic models. Traditional optimization methods, particularly gradient-based approaches, often struggle with these complexities, converging to suboptimal solutions or requiring extensive manual intervention. Evolutionary algorithms (EAs) have emerged as a powerful alternative, demonstrating superior performance in navigating complex error landscapes and finding near-optimal parameter sets where other methods fail. This application note details the theoretical foundations, practical protocols, and specific advantages of using EAs for neuron model fitting within a research context, providing scientists with the tools to implement these methods effectively.

Comparative Analysis of Fitting Algorithms

The choice of optimization algorithm critically impacts the success and efficiency of neuron model fitting. The table below summarizes the key characteristics of different algorithm classes.

Table 1: Comparison of Optimization Algorithms for Neuron Model Fitting

| Algorithm Class | Key Mechanism | Handling of Local Minima | Scalability to High Dimensions | Best-Suited Application Context |
|---|---|---|---|---|
| Gradient Following (GF) | Follows error gradient downhill [11] | Poor; highly susceptible [11] | Moderate; can be computationally expensive [11] | Models with smooth, convex error surfaces and good initial parameter estimates [11] |
| Evolutionary Algorithms (EA) | Population-based stochastic search [11] [2] | Excellent; avoids entrapment via global search [11] | High; effective for 100+ parameters [12] | Complex, nonlinear models with noisy data and unknown initial parameters [11] [12] |
| Markov Chain Monte Carlo (MCMC) | Bayesian sampling of posterior distribution [13] | Good; explores multiple modes [13] | Moderate; can be computationally intensive [13] | Problems requiring uncertainty quantification and full posterior analysis [13] |
| Bayesian Optimization (BO) | Builds probabilistic model of objective function [12] | Good for low-dimensional spaces | Lower; performance degrades with increasing dimensions [12] | Expensive black-box functions with small parameter sets (e.g., <20) [12] |

Quantitative evidence underscores the strengths of EAs. A study fitting a 9-parameter model of a visual neuron found that while a GF method converged rapidly, it was "highly susceptible to the effects of local minima" and produced poor fits unless initial parameters were "already very good." Conversely, the EA "found better solutions in nearly all cases" and its performance was "independent of the starting parameters" [11]. In high-dimensional settings, such as optimizing a whole-brain model with ~100 region-specific parameters, the Covariance Matrix Adaptation Evolution Strategy (CMA-ES), a sophisticated EA, significantly improved the goodness-of-fit (GoF) compared to low-dimensional scenarios [12].

Essential Research Reagents and Computational Tools

Successful implementation of EA-based fitting requires a suite of computational tools and models. The following table catalogues key "research reagents" for this domain.

Table 2: Key Research Reagent Solutions for EA-based Neuron Model Fitting

| Reagent / Tool Name | Type | Primary Function in Workflow | Example Use-Case / Notes |
|---|---|---|---|
| Hodgkin-Huxley (HH) Model [13] [14] | Biophysically Detailed Neuron Model | Serves as a high-fidelity forward model for simulating action potentials; parameters (e.g., conductance densities) are fit to data. | An 8-channel HH model was used to demonstrate parameter inference via MCMC, highlighting non-uniqueness [13]. |
| McIntyre-Richardson-Grill (MRG) Model [15] | Biophysically Detailed Axon Model | Gold-standard model for predicting myelinated peripheral nerve fiber responses to electrical stimulation. | Used as a benchmark for validating the surrogate S-MF model; provides ground-truth data for fitting [15]. |
| Surrogate Myelinated Fiber (S-MF) [15] | Simplified, GPU-Accelerated Surrogate Model | Massively parallel, efficient emulator of the MRG model; enables rapid EA-based parameter searches and optimization. | Achieved >2,000x speedup over NEURON model, enabling large-scale parameter sweeps for VNS [15]. |
| NEURON Simulation Environment [15] | Industry-Standard Neural Simulator | Platform for running biophysically realistic simulations; used to generate training data for surrogates or as a forward model. | CPU-based; the only platform supporting extracellular voltages in complex fiber models [15]. |
| AxonML Framework [15] | Computational Framework | Implements, parameterizes, and efficiently executes GPU-based models like S-MF for high-throughput simulation and optimization. | Facilitates gradient-free and gradient-based optimization of stimulation parameters [15]. |

Detailed Experimental Protocols

Protocol 1: Fitting a Filter-Based Visual Neuron Model using EA

This protocol is adapted from a study that successfully employed an EA to fit a 9-parameter model to data from 107 macaque V1 neurons [11].

Step-by-Step Procedure

Problem Formulation:

- Define the Forward Model: Implement the mathematical model of the visual neuron. In the referenced study, this was a 9-parameter, nonlinear, filter-based model.

- Choose a Fitness Metric: Select a metric to quantify the discrepancy between model output and empirical data. Common choices include:

  - Sum of Squared Residuals (SSQ): A standard distance-based measure [11].

  - Variance Explained (R²): The percentage of variance in the data accounted for by the model. The error term is 1 - R² [11].

  - Ratio within Confidence Interval (RCI): The percentage of model outputs falling within the confidence intervals of the data points. The error term is 1 - RCI [11].
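The three error terms can be written compactly as below. This sketch assumes R² is the coefficient of determination (residual sum of squares over total sum of squares) and that confidence intervals are supplied as per-point lower and upper bounds; the referenced study may use slightly different definitions.

```python
import numpy as np


def ssq_error(model, data):
    """Sum of squared residuals."""
    model, data = np.asarray(model, dtype=float), np.asarray(data, dtype=float)
    return float(np.sum((model - data) ** 2))


def r2_error(model, data):
    """1 - R^2, which equals residual variance over total variance."""
    model, data = np.asarray(model, dtype=float), np.asarray(data, dtype=float)
    ss_res = np.sum((data - model) ** 2)
    ss_tot = np.sum((data - np.mean(data)) ** 2)
    return float(ss_res / ss_tot)


def rci_error(model, ci_low, ci_high):
    """1 - RCI: fraction of model outputs outside the data confidence intervals."""
    model = np.asarray(model, dtype=float)
    inside = (model >= np.asarray(ci_low)) & (model <= np.asarray(ci_high))
    return float(1.0 - np.mean(inside))
```

All three are written as errors to minimize, so they can be swapped into the same EA without changing the selection logic.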

EA Configuration:

- Initialize Population: Generate a population of candidate solutions (individuals), each being a random vector of the 9 model parameters within physiologically plausible bounds.

- Set EA Hyperparameters: Define population size (e.g., 100-500), number of generations, mutation rate, crossover rate, and selection strategy (e.g., tournament selection).

Iterative Optimization:

- Evaluate Fitness: For each individual in the population, run the forward model with its parameters and calculate the fitness (error) against the target neuronal data.

- Select Parents: Probabilistically select individuals from the current population to become parents, favoring those with higher fitness (lower error).

- Create Offspring: Apply genetic operators (crossover and mutation) to the parents to generate a new population of offspring.

- Repeat: Continue the evaluate-select-create cycle for hundreds or thousands of generations until a stopping criterion is met (e.g., fitness plateaus, maximum generations reached).
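The evaluate-select-create cycle above can be sketched as a compact generational EA. The sketch assumes a hypothetical two-parameter forward model (a straight line) in place of the 9-parameter neuron model, blend crossover, Gaussian mutation, and single-individual elitism; these operator choices are illustrative and not taken from the referenced study.

```python
import random


def forward_model(params, xs):
    """Hypothetical stand-in for the neuron model: a linear response."""
    gain, offset = params
    return [gain * x + offset for x in xs]


def error(params, xs, data):
    """Sum of squared residuals; lower error means higher fitness."""
    return sum((d - m) ** 2 for d, m in zip(data, forward_model(params, xs)))


def tournament(pop, errs, rng, k=3):
    """Return the lowest-error individual among k randomly drawn contestants."""
    winner = min(rng.sample(range(len(pop)), k), key=lambda i: errs[i])
    return pop[winner]


def evolve(xs, data, bounds, pop_size=60, generations=80,
           crossover_rate=0.9, mutation_rate=0.2, seed=0):
    rng = random.Random(seed)
    pop = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(pop_size)]
    history = []  # best error per generation
    for _ in range(generations):
        errs = [error(ind, xs, data) for ind in pop]            # evaluate
        best = min(range(pop_size), key=lambda i: errs[i])
        history.append(errs[best])
        children = [list(pop[best])]                            # elitism
        while len(children) < pop_size:
            a = tournament(pop, errs, rng)                      # select
            b = tournament(pop, errs, rng)
            if rng.random() < crossover_rate:                   # blend crossover
                w = rng.random()
                child = [w * x + (1 - w) * y for x, y in zip(a, b)]
            else:
                child = list(a)
            for j, (lo, hi) in enumerate(bounds):               # mutate, then clip
                if rng.random() < mutation_rate:
                    child[j] += rng.gauss(0.0, 0.05 * (hi - lo))
                child[j] = min(max(child[j], lo), hi)
            children.append(child)
        pop = children
    errs = [error(ind, xs, data) for ind in pop]
    best = min(range(pop_size), key=lambda i: errs[i])
    history.append(errs[best])
    return pop[best], history


# Synthetic target generated from known parameters (gain=2, offset=1).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
data = forward_model([2.0, 1.0], xs)
best_params, history = evolve(xs, data, bounds=[(0.0, 5.0), (-3.0, 3.0)])
```

Because the best individual is copied unchanged into each new generation, the recorded best error never increases, which makes a plateau in `history` a usable stopping signal.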

Validation and Analysis:

- Solution Quality: The EA is expected to converge on a parameter set that provides a fit close to the global optimum, independent of the initial parameter guesses [11].

- Comparison: Validate the EA's performance by comparing its final fitness and the biological plausibility of its parameters against solutions obtained with gradient-following (GF) methods started from multiple random initial points.

Protocol 2: High-Dimensional Parameter Optimization for Whole-Brain Modeling

This protocol outlines the use of CMA-ES for fitting a whole-brain model of coupled oscillators with region-specific parameters, optimizing up to 103 parameters simultaneously for 272 subjects [12].

Step-by-Step Procedure

Model and Objective Definition:

- Whole-Brain Model: Implement a dynamical whole-brain model, such as a network of coupled phase oscillators, where each brain region has one or more local parameters (e.g., coupling strength, oscillation frequency).

- Objective Function: The goal is to maximize the correlation between the simulated functional connectivity (sFC) matrix and the empirical FC (eFC) matrix derived from subject resting-state fMRI data. Fitness is typically defined as the Pearson correlation coefficient between the upper triangles of the sFC and eFC matrices.
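As a minimal sketch of this objective, the fitness can be computed by flattening the upper triangle of each connectivity matrix (excluding the diagonal) and taking the Pearson correlation. The 3x3 matrices below are hypothetical toy values; real FC matrices have one row per brain region.

```python
import math


def upper_triangle(matrix):
    """Flatten the strictly upper-triangular entries (diagonal excluded)."""
    n = len(matrix)
    return [matrix[i][j] for i in range(n) for j in range(i + 1, n)]


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def fc_fitness(sfc, efc):
    """Goodness of fit: correlation between simulated and empirical FC."""
    return pearson(upper_triangle(sfc), upper_triangle(efc))


# Hypothetical empirical and simulated FC matrices for three regions.
efc = [[1.0, 0.8, 0.2],
       [0.8, 1.0, 0.5],
       [0.2, 0.5, 1.0]]
sfc = [[1.0, 0.6, 0.3],
       [0.6, 1.0, 0.5],
       [0.3, 0.5, 1.0]]

fit = fc_fitness(sfc, efc)
```

Excluding the diagonal matters because every region correlates perfectly with itself, which would otherwise inflate the fit.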

CMA-ES Setup:

- Initialize Algorithm: Initialize the CMA-ES with a mean vector (initial guess for the parameter set) and a covariance matrix. The covariance matrix is initially set to explore a wide area of the high-dimensional parameter space.

- Parameter Bounds: Define lower and upper bounds for all parameters based on biological constraints.

High-Throughput Optimization:

- Sample Population: In each generation, sample a population of candidate parameter vectors from a multivariate normal distribution defined by the current mean and covariance.

- Parallel Evaluation: For each candidate parameter vector, run the whole-brain simulation to generate time series data, calculate the sFC, and then compute its fitness (correlation with eFC). Leverage high-performance computing resources to evaluate populations in parallel.

- Algorithm Update: The CMA-ES algorithm ranks the candidate solutions, and then updates its internal state (the mean and covariance matrix) to bias the search towards regions of higher fitness in the subsequent generation.
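The sample-rank-update cycle can be illustrated with a deliberately simplified evolution strategy. This is not CMA-ES: it keeps one standard deviation per parameter instead of a full covariance matrix and omits evolution paths and step-size control. The toy fitness function and all names are hypothetical; in practice a maintained CMA-ES implementation would normally be used.

```python
import math
import random


def es_generation(mean, sigma, fitness, bounds, rng, pop_size=16, n_parents=4):
    """One generation: sample, rank by fitness, update mean and spread."""
    # Sample candidates from independent normals and clip them to the bounds.
    candidates = [
        [min(max(rng.gauss(m, s), lo), hi)
         for m, s, (lo, hi) in zip(mean, sigma, bounds)]
        for _ in range(pop_size)
    ]
    # Rank: higher fitness is better (e.g., sFC-eFC correlation).
    parents = sorted(candidates, key=fitness, reverse=True)[:n_parents]
    dims = range(len(mean))
    # Update the mean towards the selected candidates.
    new_mean = [sum(p[j] for p in parents) / n_parents for j in dims]
    # Re-estimate the spread from the selected steps relative to the OLD mean.
    new_sigma = [
        max(math.sqrt(sum((p[j] - mean[j]) ** 2 for p in parents) / n_parents), 1e-6)
        for j in dims
    ]
    return new_mean, new_sigma


# Hypothetical fitness: negative squared distance to a known optimum.
target = [0.2, 0.9]


def toy_fitness(x):
    return -sum((a - b) ** 2 for a, b in zip(x, target))


rng = random.Random(1)
mean, sigma = [0.5, 0.5], [0.3, 0.3]
bounds = [(0.0, 1.0), (0.0, 1.0)]
for _ in range(60):
    mean, sigma = es_generation(mean, sigma, toy_fitness, bounds, rng)
```

In the whole-brain setting, `toy_fitness` would be replaced by a full simulation followed by the sFC-eFC correlation, and the list comprehension that builds `candidates` is the natural place to distribute evaluations across compute nodes.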

Post-Optimization Analysis:

- Goodness-of-Fit (GoF): The primary output is the maximized correlation between sFC and eFC. Studies report that optimization in high-dimensional spaces "improved considerably" the GoF [12].

- Parameter Reliability: Analyze the variability of the optimized parameters across multiple independent runs for the same subject. Note that parameters may show "increased variability within subjects and reduced reliability across repeated optimization runs," but the resulting sFC and GoF remain stable and reliable [12].

- Application: Use the optimized parameters or the GoF as features for downstream analyses, such as classifying subject sex or predicting behavioral measures [12].

Evolutionary algorithms represent a robust, powerful, and often necessary approach for fitting the complex, high-dimensional models that are central to modern computational neuroscience. Their ability to avoid local minima, handle nonlinearity without gradient information, and scale to problems with hundreds of parameters makes them uniquely suited for personalizing whole-brain models and inferring parameters for biophysically detailed neuron models. While the computational cost per function evaluation can be high, the advent of surrogate models and high-performance computing platforms is mitigating this limitation. By adopting the protocols and insights outlined in this application note, researchers can effectively leverage EAs to uncover the underlying principles of neural dynamics.

Quantitative Systems Pharmacology (QSP) has emerged as a powerful mechanistic modeling approach that integrates diverse biological, physiological, and pharmacological data to predict drug interactions and clinical outcomes [16] [17]. As the field matures, its applications have expanded beyond research and development into decision-making and regulatory arenas, with the FDA reporting approximately 60 QSP submissions in 2020 alone [18]. QSP establishes a conceptual, integrative framework rather than a specific computational methodology, combining elements of systems biology, systems pharmacology, systems physiology, and data science under the umbrella of dynamic systems theory [16].

The integration of Artificial Intelligence (AI) and large language models (LLMs) is now transforming QSP by enhancing model generation, interpretability, and reproducibility [19] [20]. Evolutionary Algorithms (EAs) represent a particularly promising branch of computational intelligence that can address complex optimization challenges in QSP model development, especially in parameter estimation and model calibration. This framework outlines specific protocols for aligning EA objectives with Model-Informed Drug Development (MIDD) goals to enhance QSP model qualification and regulatory acceptance.

Background

The QSP Workflow and Its Challenges

QSP model development follows a progressive maturation workflow encompassing several stages [21]. This begins with project definition and needs assessment, proceeds through biological knowledge review and model structuring, continues with mathematical formulation and parameterization, and concludes with model qualification and application [21] [19]. Throughout this process, modelers face persistent operational challenges including labor-intensive literature curation, parameter uncertainty, lack of standardized validation protocols, and long turnaround times [19].

Table 1: Key Challenges in QSP Workflow Execution

| Stage | Primary Activities | Associated Challenges |

|---|---|---|

| Project Definition | Articulation of scientific hypotheses and therapeutic endpoints | Limited biological understanding; absence of formal requirements documentation |

| Biological Knowledge Review | Systematic literature curation and pathway identification | Heterogeneous data quality; labor-intensive manual curation processes |

| Model Structure Development | Translation of biological networks to mathematical framework | Structural inconsistencies; limited reusability of existing components |

| Mathematical Formulation | Parameter identification and estimation | Parameter uncertainty; sparse experimental data for calibration |

| Model Qualification | Validation against clinical data; sensitivity analysis | Lack of standardized validation protocols; risk of overfitting |

Evolutionary Algorithms in QSP Context

Evolutionary Algorithms represent a family of population-based optimization techniques inspired by biological evolution, including selection, crossover, and mutation operations. In QSP, EAs are particularly valuable for addressing high-dimensional, non-convex optimization problems where traditional gradient-based methods struggle. Their population-based nature enables global exploration of parameter spaces while handling complex constraints commonly encountered in biological systems.

Framework for EA-QSP Integration

Conceptual Alignment

The successful integration of EAs within QSP requires careful alignment between algorithmic objectives and MIDD goals. This alignment ensures that computational efficiency translates to meaningful pharmacological insights and decision support. EA objectives must be formulated to directly address the core challenges of QSP model development, particularly parameter identifiability, validation against heterogeneous data sources, and clinical translation.

Technical Implementation Framework

The technical integration of EAs within QSP workflows requires a structured approach to algorithm selection, objective function formulation, and constraint handling. This framework leverages EAs as global optimizers for parameter estimation and model calibration across multiple data modalities and experimental conditions.

Table 2: EA Configuration for QSP Parameter Estimation

| EA Component | QSP Implementation | MIDD Alignment |

|---|---|---|

| Fitness Function | Multi-objective function balancing agreement with training data and physiological plausibility | Ensures models are both accurate and biologically interpretable for regulatory review |

| Representation | Real-valued parameter vectors with logarithmic scaling for kinetic parameters | Accommodates parameters spanning multiple orders of magnitude common in biological systems |

| Selection | Tournament selection with elitism preservation | Maintains diversity while preserving best-performing parameter sets |

| Genetic Operators | Simulated binary crossover with polynomial mutation | Enables efficient exploration of high-dimensional parameter spaces |

| Constraint Handling | Penalty functions for physiologically implausible parameter regions | Ensures parameter estimates remain within biologically meaningful ranges |
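Two of the table's components, log-scaled representation and simulated binary crossover (SBX), can be sketched as follows. The sketch assumes genes store log10 of each kinetic parameter and uses the basic unbounded SBX formula followed by clipping; bounds and parent values are hypothetical.

```python
import random


def decode(genome):
    """Map log10-encoded genes back to kinetic parameter values."""
    return [10.0 ** g for g in genome]


def sbx_pair(p1, p2, eta, rng):
    """Simulated binary crossover for one gene.

    Larger eta keeps offspring closer to their parents.
    """
    u = rng.random()
    if u <= 0.5:
        beta = (2.0 * u) ** (1.0 / (eta + 1.0))
    else:
        beta = (1.0 / (2.0 * (1.0 - u))) ** (1.0 / (eta + 1.0))
    c1 = 0.5 * ((1.0 + beta) * p1 + (1.0 - beta) * p2)
    c2 = 0.5 * ((1.0 - beta) * p1 + (1.0 + beta) * p2)
    return c1, c2


def sbx(parent_a, parent_b, bounds, eta=15.0, rng=random):
    """Apply SBX gene by gene in log space, clipping children to the bounds."""
    child_a, child_b = [], []
    for a, b, (lo, hi) in zip(parent_a, parent_b, bounds):
        c1, c2 = sbx_pair(a, b, eta, rng)
        child_a.append(min(max(c1, lo), hi))
        child_b.append(min(max(c2, lo), hi))
    return child_a, child_b


# Hypothetical bounds in log10 units: 1e-4..1e1 and 1e-2..1e3.
log_bounds = [(-4.0, 1.0), (-2.0, 3.0)]
rng = random.Random(0)
kid_a, kid_b = sbx([-3.0, 0.5], [-1.0, 2.5], log_bounds, rng=rng)
rates = decode(kid_a)  # values passed to the QSP model
```

Searching in log space means a mutation or crossover step of fixed size corresponds to a fixed fold-change, so a rate constant near 0.001 is explored as thoroughly as one near 100.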

Experimental Protocols

Protocol 1: EA-Driven QSP Model Calibration

Purpose: To establish a robust methodology for calibrating QSP models using evolutionary algorithms that ensures parameter identifiability and physiological plausibility.

Materials and Reagents:

- Biological system data (e.g., pharmacokinetic, pharmacodynamic, biomarker data)

- Computational resources (multi-core processors, high-performance computing cluster)

- Software platforms (MATLAB, R, Python with DEAP or similar EA libraries)

- QSP model structure (ordinary differential equations, algebraic equations)

Procedure:

Problem Formulation:

- Define the parameter estimation problem with identified decision variables

- Establish parameter bounds based on physiological constraints and prior knowledge

- Formulate multi-objective fitness function incorporating weighted data agreement metrics

EA Configuration:

- Initialize population of candidate parameter vectors using Latin Hypercube Sampling

- Set algorithm parameters: population size (100-500), number of generations (1000-5000), crossover rate (0.8-0.95), and mutation rate (1/n, where n is the number of parameters)

- Implement niching or crowding mechanisms to maintain population diversity
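Latin Hypercube Sampling for the initial population can be sketched with the standard library alone. The idea is to split each parameter's range into as many equal strata as there are individuals and place exactly one individual in each stratum. The bounds below are hypothetical.

```python
import random


def latin_hypercube(n_samples, bounds, rng):
    """Draw n_samples points with one point per stratum along every axis."""
    columns = []
    for lo, hi in bounds:
        strata = list(range(n_samples))
        rng.shuffle(strata)  # decouple stratum order between parameters
        width = (hi - lo) / n_samples
        # One uniformly placed value inside each stratum.
        columns.append([lo + (s + rng.random()) * width for s in strata])
    return [list(point) for point in zip(*columns)]


rng = random.Random(42)
bounds = [(0.0, 1.0), (-2.0, 2.0), (10.0, 100.0)]  # hypothetical parameter ranges
population = latin_hypercube(8, bounds, rng)
```

Compared with independent uniform draws, this guarantees that no region of any single parameter's range is left unsampled in the first generation.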

Optimization Execution:

- Execute EA optimization with parallel fitness evaluation

- Implement termination criteria based on convergence metrics or maximum generations

- Store complete optimization trajectory for post-hoc analysis
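A convergence-based termination criterion can be as simple as checking whether the best error has stopped improving over a window of generations. The function below is a hypothetical sketch; the window length and tolerance are assumptions that should be tuned per problem.

```python
def has_converged(best_errors, window=50, rel_tol=1e-4):
    """True when the best error improved by less than rel_tol (relative)
    over the last `window` generations."""
    if len(best_errors) <= window:
        return False  # not enough history yet
    old, new = best_errors[-window - 1], best_errors[-1]
    return (old - new) <= rel_tol * max(abs(old), 1e-12)


# Hypothetical trajectory: 20 generations of improvement, then a plateau.
trajectory = [1.0 / (g + 1) for g in range(20)] + [0.05] * 60

stop_early = has_converged(trajectory[:60])  # still improving within the window
stop_late = has_converged(trajectory)        # flat for the whole window
```

Keeping the full `trajectory` list, rather than only the final value, is what makes this check and any later post-hoc analysis possible.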

Validation and Analysis:

- Perform profile likelihood analysis on optimized parameter sets

- Conduct global sensitivity analysis using Sobol or Morris methods

- Validate model predictions against held-out experimental data

Expected Outcomes: A calibrated QSP model with quantified parameter uncertainty, suitable for predictive simulations and regulatory submission support.

Protocol 2: EA-Enhanced Virtual Population Generation

Purpose: To generate clinically plausible virtual patient populations that capture inter-individual variability using evolutionary algorithms.

Materials and Reagents:

- Population-level clinical data

- Covariate distribution information

- QSP model with identified sources of variability

- Statistical software for distribution fitting

Procedure:

Variability Source Identification:

- Determine which model parameters contribute to inter-individual variability

- Establish correlation structure between parameters based on physiological knowledge

- Define target distributions for clinically observable biomarkers

EA Optimization Setup:

- Formulate fitness function measuring distance between virtual population statistics and clinical data summaries

- Define decision variables as parameters of multivariate distributions for model parameters

- Set constraints to maintain physiological plausibility of generated individuals
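The setup above can be sketched as a fitness function whose decision variables are distribution parameters rather than individual patient parameters. Everything here is a hypothetical illustration: the one-line `simulate_biomarker` stands in for a QSP model, clearance is assumed log-normal, and the "clinical" summaries are synthetic.

```python
import math
import random
import statistics


def simulate_biomarker(clearance, dose=100.0):
    """Hypothetical stand-in for the QSP model: exposure = dose / clearance."""
    return dose / clearance


def virtual_population(mu_log, sigma_log, n, rng):
    """Sample clearances from a log-normal distribution (always positive)."""
    return [rng.lognormvariate(mu_log, sigma_log) for _ in range(n)]


def population_fitness(decision, target_mean, target_sd, n=2000, seed=0):
    """Squared relative mismatch in biomarker mean and SD (to be minimized)."""
    mu_log, sigma_log = decision
    rng = random.Random(seed)  # fixed seed: same random draws for every candidate
    biomarkers = [simulate_biomarker(cl)
                  for cl in virtual_population(mu_log, sigma_log, n, rng)]
    mean_err = (statistics.fmean(biomarkers) - target_mean) / target_mean
    sd_err = (statistics.stdev(biomarkers) - target_sd) / target_sd
    return mean_err ** 2 + sd_err ** 2


# Synthetic "clinical" summaries generated from a known distribution.
true_decision = (math.log(5.0), 0.3)
reference = [simulate_biomarker(cl)
             for cl in virtual_population(*true_decision, 2000, random.Random(0))]
target_mean = statistics.fmean(reference)
target_sd = statistics.stdev(reference)

good = population_fitness(true_decision, target_mean, target_sd)
poor = population_fitness((math.log(10.0), 0.3), target_mean, target_sd)
```

Using the same seed for every candidate removes sampling noise from the comparison between candidates, at the cost of tying the result to one particular random draw; sampling positive quantities from a log-normal is one simple way to keep generated individuals physiologically plausible.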

Population Evolution:

- Initialize candidate distribution parameters

- Iteratively refine distribution parameters using EA optimization

- Apply niching techniques to capture multiple subpopulations if present

Virtual Population Validation:

- Assess reproduction of target clinical statistics

- Verify preservation of parameter correlations

- Confirm coverage of clinically observed subpopulations

Expected Outcomes: A virtual population that accurately reflects clinical variability for use in clinical trial simulations and dose regimen optimization.

The Scientist's Toolkit

Table 3: Research Reagent Solutions for EA-QSP Integration

| Tool Category | Specific Tools | Function in EA-QSP Workflow |

|---|---|---|

| Optimization Frameworks | DEAP (Python), MATLAB Global Optimization Toolbox, R GA Package | Provide evolutionary algorithm implementations for parameter estimation and optimization |

| Modeling & Simulation | MATLAB/SimBiology, R/xQSP, Julia/SciML | Enable QSP model development, simulation, and parameter sensitivity analysis |

| Data Curation & Integration | AI-augmented platforms (QSP-Copilot) [19], Natural language processing tools | Accelerate literature curation and data extraction from heterogeneous sources |

| High-Performance Computing | AWS, Azure, Google Cloud, SLURM clusters | Enable parallel fitness evaluation and computationally intensive EA runs |

| Visualization & Analysis | MATLAB Plotting, R/ggplot2, Python/Matplotlib | Facilitate visualization of optimization trajectories and model performance |

| Model Qualification | Profile Likelihood Implementation, Sobol Sensitivity Analysis | Support model validation and identifiability analysis for regulatory submissions |

Application Case Study: CRISPR-Cas Therapy Translation

To demonstrate the practical implementation of this framework, we applied EA-driven QSP modeling to the translation of in-vivo CRISPR-Cas therapy [22]. This novel therapeutic modality involves complex pharmacokinetic/pharmacodynamic relationships spanning multiple biological scales.

Implementation: We developed a QSP model of the mechanisms that follow intravenous (IV) injection, including lipid nanoparticle (LNP) binding to opsonins, phagocytosis, cellular internalization, mRNA translation, and gene editing. Evolutionary algorithms were employed to estimate key parameters, including the rate of internalization in the interstitial layer (0.039 1/h in non-human primates (NHP) vs. 0.007 1/h in humans) and the rate of exocytosis (6.84 1/h in mouse, 2690 1/h in NHP, and 775 1/h in humans) [22].

Results: The EA-optimized model successfully characterized biodistribution and dose-exposure relationships across species, demonstrating the framework's utility in facilitating the discovery and development of novel therapeutic agents. Monte Carlo simulations using the calibrated model accurately predicted serum transthyretin (TTR) reduction in patients, supporting first-in-human dose selection.

The integration of evolutionary algorithms within Quantitative Systems Pharmacology represents a powerful approach to addressing key challenges in model development and qualification. By formally aligning EA objectives with MIDD goals, this framework enhances the efficiency, robustness, and regulatory acceptance of QSP models. The provided protocols and toolkit offer practical guidance for implementation across various therapeutic areas, potentially reducing model development time by approximately 40% through automation of routine tasks [19]. As QSP continues to evolve as a critical component of model-informed drug development, evolutionary algorithms will play an increasingly important role in harnessing the full potential of these complex mechanistic models to advance therapeutic development.

Building Your EA Pipeline: A Step-by-Step Protocol for Neuron Model Optimization

In computational neuroscience, the fitness function serves as the crucial bridge between a neurobiological hypothesis and a functional, optimized model. It is the mathematical embodiment of the research question, guiding evolutionary algorithms (EAs) to evolve in-silico neurons that replicate empirical observations. A well-formulated fitness function ensures that the evolutionary search explores parameter spaces that are not only computationally optimal but also neurobiologically plausible. This protocol outlines the principles and procedures for constructing such fitness functions, enabling researchers to effectively translate complex neurobiological concepts into quantifiable optimization targets for neuron model fitting.

Core Components of a Fitness Function for Neuron Model Fitting

A fitness function for biophysical neuron model fitting typically integrates multiple components to ensure the model accurately reproduces empirical electrophysiological data. The structure balances several competing objectives to achieve biological realism.

Table 1: Core Components of a Fitness Function for Neuron Model Fitting

| Component Category | Specific Metric | Neurobiological Interpretation | Mathematical Formulation Examples |
|---|---|---|---|
| Distance-Based Measures | Sum of Squared Residuals (SSQ) / Root-Mean-Squared (RMS) Error | Quantifies overall deviation of model output from experimental voltage traces. [11] | SSQ = Σ(Experimental_V - Model_V)² |
| Distance-Based Measures | Mean Squared Error (MSE) | Average squared difference, independent of data point number. [11] | MSE = SSQ / N (N = number of data points) |
| Correlation-Based Measures | Variance Explained (R²) | Percentage of variance in experimental data accounted for by the model; sensitive to response shape. [11] | R² = 1 - [SSQ / Σ(Experimental_V - Mean_V)²] |
| Criteria-Based Measures | Ratio within Confidence Intervals (RCI) | Proportion of model outputs falling within experimental confidence intervals; intuitively interpretable. [11] | RCI = (Count[Model_V within CI] / N); Error = 1 - RCI |
| Biophysical Constraints | Channel Kinetics & Properties | Ensures inferred ion channel parameters (e.g., conductance, kinetics) align with prior biological knowledge. [1] | Penalty terms for parameters outside physiologically plausible ranges. |

Experimental Protocols for Fitness Function Formulation

Protocol: Multi-Objective Formulation and Calibration

Objective: To construct a composite fitness function that robustly balances multiple, potentially competing, error measures for effective evolutionary search.

- Identify Primary Data Features: Determine the key electrophysiological features essential for testing your hypothesis (e.g., resting membrane potential, spike rate, spike width, adaptation index, sag amplitude, rebound depolarization).

- Select Error Metrics: Choose a primary error metric (e.g., SSQ for overall fit) and one or two secondary metrics (e.g., R² for shape, RCI for confidence) from Table 1. [11]

- Define Sub-Objectives: Formulate the optimization as a multi-objective problem, aiming to minimize all selected error metrics simultaneously.

- Weight Assignment (if scalarizing): If using a weighted sum approach, assign initial weights heuristically based on feature importance. For example:

  - Weight for spike timing error: 0.6

  - Weight for spike height error: 0.3

  - Weight for resting potential error: 0.1

- Iterative Calibration: Execute the EA with the initial weights and analyze the resulting models. If a specific feature is not fitting well, incrementally increase its weight and re-run. Alternatively, use a multi-objective algorithm such as the Indicator-Based Evolutionary Algorithm (IBEA), which handles multiple objectives without manual weight tuning. [9]

Protocol: Handling Noisy Electrophysiological Data

Objective: To design a fitness function that is robust to inherent biological variability and measurement noise, preventing overfitting.

- Replicate Experimental Context: Incorporate multiple stimulus protocols (e.g., step currents, ramps, noise injections) into the fitness evaluation, as variability across stimuli can help the EA discern correct underlying parameters. [9]

- Utilize Feature-Based Fitness: Instead of relying solely on point-by-point voltage comparisons (e.g., SSQ), extract and compare specific features from the voltage trace. This approach is less sensitive to high-frequency noise.

- Algorithm Selection for Noise: For problems with significant measurement noise, consider evolution strategies (ES) known for noise resilience, such as SRES (Stochastic Ranking Evolution Strategy) or ISRES (Improved SRES), as they can perform more reliably than algorithms such as CMA-ES under these conditions. [8]

- Regularization: Add penalty terms to the fitness function that discourage overly complex solutions or parameters that deviate far from known biological ranges, thus constraining the search to physiologically plausible models. [11] [1]
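The difference between point-by-point and feature-based fitness can be illustrated with a small sketch. Spikes are detected as upward threshold crossings, and two hypothetical features (firing rate and peak voltage) are compared by normalized absolute difference. The synthetic traces and the choice of features are assumptions for illustration only.

```python
def spike_times(voltage, dt, threshold=0.0):
    """Times (ms) at which the trace crosses `threshold` from below."""
    return [i * dt for i in range(1, len(voltage))
            if voltage[i - 1] < threshold <= voltage[i]]


def features(voltage, dt):
    """Extract a small, hypothetical feature set from one voltage trace."""
    duration_s = len(voltage) * dt / 1000.0
    return {"rate_hz": len(spike_times(voltage, dt)) / duration_s,
            "peak_mv": max(voltage)}


def feature_error(target, model, dt):
    """Sum of normalized absolute feature differences (to be minimized)."""
    ft, fm = features(target, dt), features(model, dt)
    return sum(abs(fm[k] - ft[k]) / max(abs(ft[k]), 1e-9) for k in ft)


def ssq(target, model):
    """Point-by-point sum of squared residuals, for comparison."""
    return sum((t - m) ** 2 for t, m in zip(target, model))


def synthetic_trace(spike_indices, n=1000, rest=-65.0, peak=30.0):
    """Flat resting potential with single-sample spikes at the given indices."""
    trace = [rest] * n
    for i in spike_indices:
        trace[i] = peak
    return trace


dt = 0.1  # ms per sample
target = synthetic_trace([100, 300, 500, 700])
jittered = synthetic_trace([110, 310, 510, 710])  # same spikes, shifted by 1 ms
sparse = synthetic_trace([100, 500])              # half the spikes

same_features = feature_error(target, jittered, dt)
pointwise = ssq(target, jittered)
fewer_spikes = feature_error(target, sparse, dt)
```

Here the jittered trace scores a perfect feature match even though its point-by-point SSQ is large, whereas the trace with missing spikes is penalized on firing rate. Real recordings would usually need filtering or a refractory window before threshold detection, which this sketch omits.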

Optimization Methods and Algorithm Selection

Selecting an appropriate evolutionary algorithm is critical, as performance varies significantly with problem structure, dimensionality, and the presence of noise.

Table 2: Evolutionary Algorithm Performance for Neuron Model Fitting

| Algorithm | Best Suited For | Performance & Characteristics | Considerations |

|---|---|---|---|

| CMA-ES (Covariance Matrix Adaptation Evolution Strategy) | Low-noise problems; GMA and Linlog kinetics. [8] | High speed; requires a fraction of the computational cost of others in low-noise conditions. [8] | Performance can degrade with increasing measurement noise. [8] |

| SRES/ISRES (Stochastic Ranking ES) | Noisy data; GMA kinetics. [8] | Reliable performance under marked measurement noise. [8] | Higher computational cost compared to CMA-ES. [8] |

| G3PCX (Generalized Generation Gap) | Michaelis-Menten kinetics. [8] | Highly efficacious for parameter estimation; achieves a several-fold saving in computational cost. [8] | Performance may be formulation-specific. [8] |

| NeuroGPU-EA | High-dimensional parameter spaces; scalable fitting. | Leverages GPU parallelism; 10x faster than CPU-based EA on scaling benchmarks. [9] | Requires access to HPC resources with GPUs. [9] |

| Differential Evolution (DE) | General parameter estimation, but with limitations. | Used for estimating HH-model parameters from whole-cell recordings. [1] | Can show poor performance in some comparative studies, leading to its exclusion. [8] [1] |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Computational Tools

| Tool Name | Type/Category | Function in the Workflow |

|---|---|---|

| NEURON | Simulation Environment | Industry-standard software for simulating the electrical activity of neurons with complex morphologies and biophysics. [9] |

| CoreNEURON | GPU-Accelerated Simulator | Optimized version of NEURON for high-performance computing, enabling faster simulation on GPU nodes. [9] |

| ElectroPhysiomeGAN (EP-GAN) | Deep Generative Model | Instantly generates HH-model parameters from electrophysiological recordings, bypassing iterative optimization after training. [1] |

| IBEA (Indicator-Based Evolutionary Algorithm) | Optimization Algorithm | A multi-objective evolutionary algorithm used to find optimal trade-offs between multiple electrophysiological score functions. [9] |

| NeuroGPU-EA | Optimization Framework | A highly parallel evolutionary algorithm implementation designed for efficient model fitting on GPU-based supercomputers. [9] |

Workflow Visualization and Logical Pathways

The following diagram illustrates the end-to-end workflow for formulating a fitness function and applying an evolutionary algorithm to fit a neuron model.

Figure: Workflow for evolutionary neuron model fitting.

Translating a neurobiological hypothesis into an effective fitness function is a foundational step in evolutionary neuron model fitting. This process requires careful selection and combination of error metrics, informed calibration against experimental data, and the choice of an evolutionary algorithm suited to the problem's specific challenges. By adhering to the protocols and utilizing the tools outlined in this document, researchers can construct robust optimization frameworks that yield biophysically accurate and computationally efficient models, thereby providing deeper insights into neural function.

The accurate fitting of biophysical neuron models to experimental data is a cornerstone of computational neuroscience, enabling researchers to investigate the relationship between ion channel dynamics and neural function. Central to this optimization process is the evolutionary algorithm (EA), a prevalent method for navigating the high-dimensional parameter space of conductance-based models [23]. The performance and biological fidelity of these algorithms are critically dependent on the design of the fitness function, which quantifies the discrepancy between model output and empirical data. A well-constructed fitness function must achieve two primary objectives: it must faithfully incorporate a spectrum of electrophysiological features extracted from experimental recordings, and it must constrain the solution space to biologically plausible parameter sets. This application note details protocols for designing such fitness functions, structured to support the development of EAs for neuron model fitting. We provide a quantitative framework for feature selection, methodologies for multi-objective optimization, and strategies to embed biological constraints, thereby guiding researchers toward the creation of robust, generalizable, and physiologically relevant neuron models.

Core Principles of Fitness Function Design

The Multi-Objective Optimization Problem

Defining the fitness function for neuron model fitting is fundamentally a multi-objective optimization (MOO) problem [23]. The goal is to find parameter sets that present optimal trade-offs between multiple, often competing, electrophysiological objectives. A single neuron model can be evaluated against numerous features of its voltage trace, such as spike rate, latency, and adaptation. The EA searches for solutions that minimize a composite error across all these target features. This is frequently formulated using frameworks like the Indicator-Based Evolutionary Algorithm (IBEA), which allows for the simultaneous optimization of multiple criteria without collapsing them into a single, potentially misleading, scalar value [23] [24].
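The notion of an optimal trade-off can be made concrete with a Pareto-dominance filter. The sketch below is not IBEA itself, which ranks candidates with a quality indicator; it only shows the underlying dominance relation that multi-objective EAs build on. The error vectors are hypothetical.

```python
def dominates(a, b):
    """True if error vector a is no worse than b on every objective
    and strictly better on at least one."""
    return (all(x <= y for x, y in zip(a, b))
            and any(x < y for x, y in zip(a, b)))


def pareto_front(errors):
    """Indices of candidates that no other candidate dominates."""
    return [i for i, e in enumerate(errors)
            if not any(dominates(other, e) for other in errors)]


# Hypothetical (spike-rate, latency, adaptation) errors for five candidate models.
errors = [
    (0.10, 0.50, 0.30),
    (0.20, 0.20, 0.20),
    (0.30, 0.60, 0.40),  # worse than the first candidate on every objective
    (0.05, 0.90, 0.10),
    (0.20, 0.20, 0.25),  # matched or beaten by the second candidate everywhere
]

front = pareto_front(errors)
```

The surviving candidates each win on at least one feature, so choosing among them is a scientific judgment about which features matter most rather than something the optimizer can settle.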

A significant challenge in this domain is the issue of degeneracy, where neurons with substantially different combinations of parameters can produce qualitatively similar electrophysiological responses [23] [1]. This implies that the mapping from parameter space to output space is not one-to-one. Consequently, a fitness function that focuses on a narrow set of features may find a solution that matches the target data but is biologically implausible. Therefore, the fitness function must be designed to navigate this degenerate landscape by incorporating a sufficiently diverse set of features and, where possible, including constraints that penalize parameter combinations falling outside physiologically realistic bounds.

From Localist to Distributed Representations

The evolution of neural network models in cognitive science offers a valuable analogy for fitness function design. Early localist models assigned a single cognitive element (e.g., a specific memory) to a single artificial neuron, an approach that is biologically unrealistic and does not account for distributed processing [25]. Similarly, a fitness function that relies on a single metric, such as overall spike count error, is often inadequate.

Modern approaches favor distributed representations, akin to those in auto-associative or attractor networks, where information is encoded across a population of neurons [25]. Translated to fitness function design, this means that the model's quality should be evaluated based on a distributed set of features that collectively define the neuron's electrophysiological identity. This approach ensures that the model captures the essence of the neural dynamics rather than merely matching one isolated aspect of its behavior. A multimodal optimizer, which explores a diverse population of model configurations for a single complex objective function, is an excellent tool for this purpose, as it directly embraces the distributed nature of the solution space [24].

Quantitative Electrophysiological Features for Fitness Evaluation

The accuracy of a fitted model is determined by how well it reproduces key electrophysiological features. The table below catalogs essential features that should be quantified from both experimental data and model simulations to compute the fitness score.

Table 1: Key Electrophysiological Features for Fitness Function Design

| Feature Category | Specific Feature | Biological Significance | Typical Scoring Function |
|---|---|---|---|
| Spike Train Characteristics | Firing Rate / I-F Curve | Neuronal excitability and input-output relationship [24] | Mean Squared Error (MSE) or Normalized Absolute Difference |
| Spike Train Characteristics | Spike Latency (to first spike) | Timing precision and initial channel activation [24] | Absolute difference |
| Spike Train Characteristics | Interspike Intervals (ISI) | Spike frequency adaptation and bursting behavior [26] | Coefficient of variation or ISI histogram distance |
| Action Potential Properties | Action Potential Amplitude | Na+ and K+ channel dynamics [26] | Absolute difference or MSE |
| Action Potential Properties | Action Potential Half-Width | Spike duration and K+ channel kinetics [26] | Absolute difference or MSE |
| Action Potential Properties | After-Hyperpolarization (AHP) Depth | K+ channel-mediated hyperpolarization [26] | Absolute difference |
| Subthreshold Dynamics | Resting Membrane Potential | Baseline ionic balance [27] | Absolute difference |
| Subthreshold Dynamics | Input Resistance | Passive membrane properties [27] | Absolute difference or normalized error |
| Subthreshold Dynamics | Membrane Time Constant | Passive temporal integration [27] | Absolute difference |
| Complex Patterns | Spike Frequency Adaptation | Ca2+-dependent K+ channel activity [24] | Exponential fit parameter comparison |
| Complex Patterns | Theta-Frequency Resonance (5-12 Hz) | Subthreshold resonance, important for information transmission [24] | Impedance amplitude profile (ZAP) response error |

The combination of these features into a single fitness function can be achieved through a weighted sum or a formal multi-objective optimization approach. The choice of features and their relative weights should be guided by the specific neuron type and the research questions being addressed.

Protocols for Fitness Function Implementation and Validation

Protocol: Constructing a Multi-Feature Fitness Function

This protocol outlines the steps for creating a comprehensive fitness function for optimizing a cerebellar granule cell (GrC) model, based on established methodologies [24].

Data Preparation and Feature Extraction:

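- Stimulus Protocols: Apply the same series of current injections to the biological neuron and the model, covering the stimuli needed to measure the features in Table 1 (e.g., step currents of increasing intensity for the I-F curve and first-spike latency).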

- Feature Quantification: From the recorded and simulated voltage traces, extract the features listed in Table 1. For example, from step current responses, calculate the spike rate for each intensity to form the I-F curve, and measure the latency to the first spike.

Fitness Score Calculation:

- For each extracted feature \( i \), compute a normalized error \( E_i \) between the model output \( M_i \) and the experimental target \( T_i \). A common method is the normalized absolute difference: \( E_i = |M_i - T_i| / |T_i| \).

- Aggregate Error: Combine the individual errors into a single fitness score \( F \) using a weighted sum: \( F = \sum_{i=1}^{n} w_i \cdot E_i \), where \( w_i \) is the weight assigned to feature \( i \), reflecting its relative importance. The optimization aims to minimize \( F \).

- Alternative - Multi-Objective Formulation: Instead of a weighted sum, use a multi-objective EA (e.g., IBEA or NSGA-II) to treat each \( E_i \) as a separate objective. This yields a Pareto front of solutions representing optimal trade-offs between all features [23] [24].
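The weighted-sum score can be written directly from the definitions above: a normalized absolute difference per feature, multiplied by its weight and summed. The feature names, target values, and weights below are hypothetical.

```python
def normalized_errors(model, target):
    """E_i = |M_i - T_i| / |T_i| for every feature in the target.

    Assumes no target value is zero; such features need a different scale.
    """
    return {name: abs(model[name] - target[name]) / abs(target[name])
            for name in target}


def weighted_fitness(model, target, weights):
    """F = sum_i w_i * E_i (to be minimized)."""
    errs = normalized_errors(model, target)
    return sum(weights[name] * errs[name] for name in target)


# Hypothetical experimental targets, model outputs, and feature weights.
target = {"rate_hz": 40.0, "latency_ms": 10.0, "ap_amplitude_mv": 80.0}
model = {"rate_hz": 36.0, "latency_ms": 12.0, "ap_amplitude_mv": 80.0}
weights = {"rate_hz": 0.5, "latency_ms": 0.3, "ap_amplitude_mv": 0.2}

score = weighted_fitness(model, target, weights)
```

Normalizing by the target puts features with different units (Hz, ms, mV) on a common scale before the weights are applied, so the weights express importance rather than compensating for units.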

Incorporating Biological Constraints:

- Parameter Bounds: Enforce hard constraints during the EA's mutation and crossover steps to keep all parameters (e.g., ion channel conductances) within physiologically realistic ranges [1].

- Soft Constraints via Penalty: Add a penalty term to the fitness score ( F ) if the model exhibits biologically impossible behavior, even if the feature match is good (e.g., negative membrane time constants or continuous spiking at rest).
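Both kinds of constraint can be illustrated with a short sketch. The parameter names, bounds, and penalty size below are assumptions chosen for the example, not values from the cited studies; clipping is also only one of several ways to enforce bounds (resampling or reflecting are alternatives).

```python
from typing import Dict, Tuple

# Hypothetical bounds (parameter name -> (low, high)); values are illustrative only.
BOUNDS: Dict[str, Tuple[float, float]] = {
    "g_na": (0.0, 0.5),
    "g_k": (0.0, 0.1),
}


def clip_to_bounds(params: Dict[str, float],
                   bounds: Dict[str, Tuple[float, float]]) -> Dict[str, float]:
    """Hard constraint: pull every parameter back into its allowed interval."""
    return {k: min(max(v, bounds[k][0]), bounds[k][1]) for k, v in params.items()}


def penalized_fitness(feature_error: float, spikes_at_rest: int,
                      tau_m_ms: float, penalty: float = 10.0) -> float:
    """Soft constraint: add a fixed penalty for each violated plausibility check."""
    violations = 0
    if spikes_at_rest > 0:   # spiking without any stimulus
        violations += 1
    if tau_m_ms <= 0:        # non-positive membrane time constant
        violations += 1
    return feature_error + penalty * violations


# A child produced by mutation may leave the allowed range; clip it before simulating.
child = clip_to_bounds({"g_na": 0.7, "g_k": -0.02}, BOUNDS)
score = penalized_fitness(0.4, spikes_at_rest=3, tau_m_ms=8.0)
print(child, score)
```

The penalty should be large relative to typical feature errors so that an implausible model cannot outrank a plausible one on feature match alone.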

Protocol: Validation and Generalization Testing

A fitted model must be validated to ensure it is not overfitted to the specific stimuli used for optimization and that it generalizes well [26].

- Benchmarking Performance: Compare the performance of your EA implementation against established benchmarks. This includes assessing strong scaling (fixed problem size with increasing computing resources) and weak scaling (problem size grows proportionally with resources) to evaluate computational efficiency [23].

- Validation with Unseen Stimuli: Test the optimized model against a completely different set of stimulation protocols (e.g., noisy current injections or ramp currents) that were not used during the fitting process. This assesses the model's ability to generalize beyond its training data.

- Population Generalization: To build a population of models (PoMs) that captures biological variability, use the same fitness function to optimize models against multiple experimental traces from different neurons. Assess the generalizability of the resulting population by testing how well it captures the statistical distribution of features in the biological population [27] [26]. A recent study demonstrated a 5-fold improvement in model generalizability using an automated workflow that includes rigorous validation [26].
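For the benchmarking step, strong and weak scaling are usually reported as efficiencies relative to a baseline run. The sketch below uses the standard definitions; the timings are hypothetical, and the single-worker baseline is an assumption of the example.

```python
def strong_scaling_efficiency(t_base: float, t_n: float, n_workers: int) -> float:
    """Fixed problem size: the ideal runtime on n workers is t_base / n."""
    return t_base / (n_workers * t_n)


def weak_scaling_efficiency(t_base: float, t_n: float) -> float:
    """Problem size grows with the workers: the ideal runtime stays at t_base."""
    return t_base / t_n


# Hypothetical wall-clock times in seconds for one EA generation.
strong = strong_scaling_efficiency(t_base=1200.0, t_n=200.0, n_workers=8)
weak = weak_scaling_efficiency(t_base=1200.0, t_n=1500.0)
print(strong, weak)
```

An efficiency of 1.0 means ideal scaling; values well below 1.0 suggest that communication or serial steps (for example, selection on a single node) dominate the runtime.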

The Scientist's Toolkit: Research Reagents and Solutions

Table 2: Essential Tools for EA-based Neuron Model Fitting

| Tool / Resource | Function | Application Note |

|---|---|---|

| BluePyOpt [23] [26] | A Python library for parameter optimization that implements various EAs (e.g., IBEA). | Provides the core optimization engine for defining parameters, fitness functions, and running the EA. |

| NEURON Simulator [23] [28] | A widely used environment for simulating biophysical neuron models. | Integrated with BluePyOpt to simulate the electrical activity of candidate models during fitness evaluation. |

| Arbor Simulator [26] | A high-performance, GPU-ready simulator for large-scale neural networks. | An alternative to NEURON, useful for accelerating simulations in computationally expensive optimizations. |

| BluePyEfe (built on eFEL) [26] | A Python library for extracting electrophysiological features from voltage traces. | Automates the calculation of features from both experimental and simulated data for fitness scoring. |

| Allen Brain Cell Types Database | A public repository containing electrophysiological recordings and neuronal morphologies. | A source of experimental data for defining target features for optimization, especially for cortical neurons. |

| DEAP Framework [23] | A general-purpose Evolutionary Computation framework in Python. | Can be used to build custom EAs if a pre-packaged solution like BluePyOpt is insufficient. |

Advanced Methodologies and Future Directions

As the field advances, fitness function design is incorporating more sophisticated metrics and leveraging machine learning. Efficacy metrics for scoring phenotypic recovery in disease models are being developed. For instance, studies now use the Wasserstein distance (Earth Mover's Distance) to quantify how well a virtual drug moves a diseased neuronal population's electrophysiological profile closer to a healthy state, going beyond simple mean comparisons to account for the full distribution of features [27]. Furthermore, deep generative models like ElectroPhysiomeGAN (EP-GAN) represent a paradigm shift. This approach uses a generative adversarial network to instantly map electrophysiological recordings to a full set of Hodgkin-Huxley model parameters, effectively learning a highly complex, implicit fitness landscape that can generalize across multiple neurons [1]. Finally, methods like the oracle-supervised Neural Engineering Framework (osNEF) demonstrate that functional models can be constructed from highly detailed neuron components. This approach treats the neuron as a black box, using a learning rule that relies on spiking inputs and outputs to train the network, thus bypassing the need for a manually defined, feature-based fitness function for certain cognitive tasks [28].
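To make the Wasserstein-based efficacy idea concrete, the sketch below computes the one-dimensional Wasserstein-1 distance between two samples of a single feature as the area between their empirical CDFs. The feature values and the `recovery` score are assumptions made for illustration; they are not the metric defined in [27].

```python
from bisect import bisect_right
from typing import Sequence


def wasserstein_1d(u: Sequence[float], v: Sequence[float]) -> float:
    """W1 between two empirical 1-D distributions: integral of |CDF_u - CDF_v|."""
    u_sorted, v_sorted = sorted(u), sorted(v)
    grid = sorted(set(u_sorted) | set(v_sorted))
    total = 0.0
    for left, right in zip(grid, grid[1:]):
        # Both empirical CDFs are constant on [left, right).
        cdf_u = bisect_right(u_sorted, left) / len(u_sorted)
        cdf_v = bisect_right(v_sorted, left) / len(v_sorted)
        total += abs(cdf_u - cdf_v) * (right - left)
    return total


# Hypothetical firing rates (Hz) across three model populations.
healthy = [10.0, 12.0, 14.0, 16.0]
diseased = [20.0, 22.0, 24.0, 26.0]
treated = [13.0, 15.0, 17.0, 19.0]

d_before = wasserstein_1d(diseased, healthy)
d_after = wasserstein_1d(treated, healthy)
recovery = 1.0 - d_after / d_before   # illustrative score: 1.0 = fully restored
print(d_before, d_after, recovery)
```

Because the distance compares whole distributions, two populations with the same mean but different spread still receive a non-zero score, which a mean comparison would miss.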

Evolutionary Algorithms (EAs) have emerged as a powerful, gradient-free alternative for optimizing complex neural models, particularly where traditional methods like backpropagation face challenges of instability and biological implausibility [29]. Their effectiveness, however, is critically dependent on the careful initialization and parameterization of core components: population size, mutation rates, and selection strategies. This document provides detailed application notes and protocols for configuring these elements, specifically tailored for researchers engaged in fitting biophysically inspired neuron models.

Core Parameters and Quantitative Guidelines

The performance of an EA hinges on a balance between exploration (searching new areas of the solution space) and exploitation (refining known good solutions). The table below summarizes established, empirically validated parameter ranges for problems typical in computational neuroscience, such as neural architecture search and neuron model parameter fitting [30] [31].

Table 1: Guidelines for Core EA Parameters in Neuron Model Fitting

| Parameter | Recommended Range | Use Case & Rationale | Supporting Evidence |

|---|---|---|---|

| Population Size | 20 - 100 (small problems); 100 - 1000 (complex problems) | Smaller for simple models or few parameters; larger for high-dimensional optimization (e.g., multi-compartment models) to maintain diversity [31]. | Baseline methods outperformed by algorithms using populations within these ranges [30] [32]. |

| Mutation Rate | 0.001 - 0.1; or 1 / chromosome_length | Low rates prevent disruption of good solutions; higher rates promote exploration. The inverse of chromosome length is a common heuristic [31]. | Guided mutation strategies are a key component of state-of-the-art evolutionary NAS [30]. |

| Crossover Rate | 0.6 - 0.9 | Balances mixing of parental genetic material with the need to preserve existing solutions. Higher rates are typically beneficial [31]. | Standard parameter in GA frameworks used for complex optimization [32] [33]. |

| Selection Strategy | Tournament Selection (size 3-5); Elitism (1-5%) | Tournament selection offers controllable selection pressure. Elitism ensures top-performing solutions are preserved across generations [31]. | Greedy selection based on fitness is a successful exploitative strategy in evolutionary NAS [30]. |

| Termination Condition | maxGenerations = 1000; stagnantGenerationsLimit = 50-100 | Stops the algorithm after a set number of generations or if no fitness improvement occurs for a predefined number of generations [31]. | Adaptive methods trigger changes (e.g., increased mutation) after periods of stagnation [31] [33]. |

Experimental Protocols for EA Parameterization

Protocol: Baseline Parameter Tuning for Neuron Model Fitting

This protocol establishes a robust starting configuration for EAs applied to problems like fitting a neuron model's parameters to match electrophysiological data or a target bifurcation structure [34].

- Initialization: Generate an initial population with high diversity. For real-valued parameters, use a uniform distribution across the plausible biophysical range (e.g., conductance densities). For architectural choices, ensure a random sampling of possible configurations [35].

- Parameter Setting: Adopt the following baseline parameters:

  - Population Size: 100

  - Mutation Rate: 0.05

  - Crossover Rate: 0.8

  - Selection: Tournament selection with size 3 and elitism preserving the top 2 individuals.

- Execution and Monitoring: Run the EA with a fixed random seed for reproducibility. Track the best fitness and population diversity (e.g., average Hamming or Euclidean distance between individuals) over generations.

- Iterative Refinement: Change one parameter at a time from the baseline. For instance, if convergence is premature, incrementally increase the mutation rate or population size. Use benchmarking tools to compare performance objectively [31].
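The baseline configuration can be sketched as a small real-valued EA. This is a minimal illustration under stated assumptions: the `fitness` function is a toy stand-in for a simulation-based error, the bounds and target are hypothetical, and uniform crossover with Gaussian mutation are choices made for the example rather than operators prescribed by the protocol. In practice, a framework such as DEAP or BluePyOpt would supply these pieces.

```python
import random

BOUNDS = [(0.0, 1.0)] * 4          # hypothetical normalized parameter ranges
TARGET = [0.2, 0.5, 0.7, 0.9]      # toy "ground truth" standing in for recorded data


def fitness(ind):
    """Toy stand-in for a simulation-based error; lower is better."""
    return sum((x - t) ** 2 for x, t in zip(ind, TARGET))


def tournament(pop, scores, rng, k=3):
    """Return the best of k randomly drawn individuals."""
    picks = rng.sample(range(len(pop)), k)
    return pop[min(picks, key=lambda i: scores[i])]


def run_ea(pop_size=100, mut_rate=0.05, cx_rate=0.8, n_elite=2,
           generations=60, seed=0):
    rng = random.Random(seed)      # fixed seed for reproducibility
    # Uniform initialization across the allowed range of each parameter.
    pop = [[rng.uniform(lo, hi) for lo, hi in BOUNDS] for _ in range(pop_size)]
    history = []                   # best fitness per generation
    for _ in range(generations):
        scores = [fitness(ind) for ind in pop]
        ranked = sorted(range(pop_size), key=lambda i: scores[i])
        history.append(scores[ranked[0]])
        new_pop = [pop[i][:] for i in ranked[:n_elite]]        # elitism
        while len(new_pop) < pop_size:
            a = tournament(pop, scores, rng)
            b = tournament(pop, scores, rng)
            if rng.random() < cx_rate:                         # uniform crossover
                child = [x if rng.random() < 0.5 else y for x, y in zip(a, b)]
            else:
                child = a[:]
            for j, (lo, hi) in enumerate(BOUNDS):              # per-gene mutation
                if rng.random() < mut_rate:
                    step = rng.gauss(0.0, 0.1 * (hi - lo))
                    child[j] = min(max(child[j] + step, lo), hi)
            new_pop.append(child)
        pop = new_pop
    return min(pop, key=fitness), history


best, history = run_ea()
print(best, history[0], history[-1])
```

Because the top individuals are copied unchanged into each new generation, the best fitness in `history` can never get worse, which makes stagnation easy to spot when monitoring a run. The sketch tracks best fitness only; a diversity measure would be added in the same loop.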

Protocol: Implementing Adaptive Mutation for Stagnation Avoidance

Static parameters can lead to stagnation. This protocol outlines a dynamic method to adjust the mutation rate based on population fitness, inspired by strategies like the Dynamically Adjusted Mutation Operator (DGEP-M) [33].

- Define Stagnation: Set a threshold for generations without improvement (e.g., N = 50).

- Monitor Fitness: During execution, track the best fitness value in each generation.

- Adjust Mutation: Implement a rule to modify the mutation rate (mut_rate) when stagnation is detected:

  - If generations_without_improvement > N, then mut_rate = mut_rate * 1.5 [31].

  - Cap the mutation rate at a maximum value (e.g., 0.3) to prevent completely random search.

- Reset Counter: Reset the generations_without_improvement counter whenever a new best fitness is found.
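The stagnation rule can be packaged as a small controller that is updated once per generation. This is a sketch of the rule as written above, not of DGEP-M itself; the class name and the `patience` argument (playing the role of N) are hypothetical, and lower fitness is assumed to be better.

```python
class AdaptiveMutation:
    """Raise the mutation rate while the best fitness stagnates (minimization)."""

    def __init__(self, mut_rate=0.05, patience=50, factor=1.5, max_rate=0.3):
        self.mut_rate = mut_rate
        self.patience = patience          # N: tolerated generations without improvement
        self.factor = factor
        self.max_rate = max_rate
        self.best = float("inf")
        self.generations_without_improvement = 0

    def update(self, best_fitness):
        """Call once per generation with that generation's best fitness."""
        if best_fitness < self.best:
            self.best = best_fitness
            self.generations_without_improvement = 0   # reset on a new best
        else:
            self.generations_without_improvement += 1
        if self.generations_without_improvement > self.patience:
            # Multiply the rate, but never exceed the cap.
            self.mut_rate = min(self.mut_rate * self.factor, self.max_rate)
        return self.mut_rate


# Five generations with no improvement and a short patience of 3.
ctrl = AdaptiveMutation(patience=3)
rates = [ctrl.update(1.0) for _ in range(5)]
print(rates)
```

Read literally, the rule keeps multiplying the rate on every stagnant generation past the threshold until the cap is reached, and the protocol does not say whether the rate should drop back after an improvement. The sketch keeps the raised rate; resetting it to the baseline on improvement is an equally plausible variant.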

Protocol: Guided Mutation for Enhanced Exploration

For complex search spaces, such as optimizing neural architectures, a guided mutation strategy can be more effective than random mutation. The following protocol is based on the Population-Based Guiding (PBG) approach [30].

- Population Encoding: Represent each individual in the population (e.g., a neural architecture) using a categorical one-hot encoding, resulting in a binary vector for each.

- Calculate Probability Vectors:

  - Sum the binary vectors across the entire population and average them to create a probs1 vector, which indicates the prevalence of '1's at each gene position.

  - Compute probs0 = 1 - probs1, which indicates the prevalence of '0's.

- Sample Mutation Indices: To encourage exploration of under-represented features, sample the locations for mutation from the probs0 distribution. This steers new mutations toward genetic material not present in the current population.

- Apply Mutation: For each selected index in an individual, flip the value (from 0 to 1 or 1 to 0) while maintaining the validity of the solution [30].
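The probability-vector and sampling steps can be sketched as follows. This is a simplified illustration of the probs0-guided sampling only, not the PBG implementation from [30]: it works on plain binary vectors, the function names are hypothetical, and the repair step that keeps a one-hot encoded solution valid after a flip is deliberately left out.

```python
import random


def population_probs(population):
    """Return (probs1, probs0): the per-gene share of 1s and of 0s in the population."""
    n = len(population)
    n_genes = len(population[0])
    probs1 = [sum(ind[j] for ind in population) / n for j in range(n_genes)]
    probs0 = [1.0 - p for p in probs1]
    return probs1, probs0


def guided_mutation(individual, population, n_mutations=1, rng=None):
    """Flip genes at positions sampled in proportion to probs0."""
    rng = rng or random.Random()
    _, probs0 = population_probs(population)
    if sum(probs0) == 0.0:                 # every gene is 1 everywhere: sample uniformly
        probs0 = [1.0] * len(individual)
    # Sampling is with replacement, so duplicates are merged into a set of indices.
    indices = set(rng.choices(range(len(individual)), weights=probs0, k=n_mutations))
    child = list(individual)
    for j in indices:
        child[j] = 1 - child[j]            # validity repair for one-hot groups omitted
    return child


# Toy population: gene 0 is set in every individual, gene 3 in none.
population = [[1, 1, 0, 0], [1, 0, 0, 0], [1, 1, 0, 0], [1, 0, 1, 0]]
probs1, probs0 = population_probs(population)
child = guided_mutation(population[0], population, n_mutations=2, rng=random.Random(0))
print(probs1, probs0, child)
```

In the toy population, gene 0 has a probs0 weight of zero and is never selected, while gene 3, which no individual carries, is the most likely mutation site.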

Workflow and Strategy Visualization

The following diagram illustrates the logical workflow for initializing and running an EA, incorporating the adaptive and guided strategies outlined in the protocols.

[Diagram: EA Initialization and Execution Workflow]

The relationship between the key EA strategies and their primary objectives in balancing exploration and exploitation is summarized in the following diagram.

[Diagram: EA Strategies for Exploration and Exploitation]

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for EA-based Neuron Model Fitting

| Tool / Component | Function | Application Example |

|---|---|---|

| GRADE Methodology | Provides a structured, transparent framework for assessing evidence and strength of recommendations, improving guideline reliability [36]. | Informing the design of EA benchmarking experiments and the evaluation of fitted model quality. |

| Radial Basis Function (RBF) Surrogate Model | A surrogate model that approximates the expensive true fitness function, drastically reducing computational cost [35]. | Accelerating the evaluation of candidate neuron models by predicting their fitness based on a subset of full simulations. |

| Quadratic Integrate-and-Fire (QIF) Neuron Model | A simplified phenomenological neuron model that can be fitted to capture the bifurcation structure of complex, conductance-based models [34]. | Serving as a fast, efficient target for EA parameterization, enabling the study of network dynamics influenced by ion concentration. |

| Population-Based Guiding (PBG) | An algorithmic framework that uses the current population's genetic distribution to guide mutations toward unexplored regions of the search space [30]. | Enhancing the exploration phase when optimizing neural architectures or high-dimensional parameter sets for detailed neuron models. |

| Dynamic Gene Expression Programming (DGEP) | An algorithm that introduces adaptive genetic operators to maintain population diversity and prevent premature convergence [33]. | Solving complex symbolic regression problems that may arise in modeling neuronal input-output relationships or network connectivity rules. |