A Modular Workflow for Performance Benchmarking of Neuronal Network Simulations



Abstract

This article explores the critical role of standardized, modular benchmarking workflows in advancing computational neuroscience and drug discovery. It addresses the challenges of comparing simulator performance across diverse hardware, software, and model configurations. The content provides a foundational understanding of benchmarking principles, details the implementation of a modular workflow, offers strategies for troubleshooting and optimization, and establishes a framework for validation and comparative analysis. Aimed at researchers and drug development professionals, this guide serves as a comprehensive resource for improving the efficiency, reproducibility, and scalability of neuronal network simulations to accelerate therapeutic development.

The Critical Need for Standardized Benchmarking in Neuroscience

Performance benchmarking of neuronal networks has emerged as a critical methodology in computational neuroscience, enabling rigorous comparison of simulation technologies and guiding their development toward greater efficiency and capability. As the field progresses toward simulating brain-scale networks with increasing biological detail, the challenges of achieving accurate, reproducible, and comparable benchmark results have necessitated more structured approaches [1]. The complexity of modern neuronal network simulations spans multiple dimensions, including hardware configurations, software versions, simulator implementations, network models with their specific parameters, and researcher communication practices [1]. This landscape has motivated the development of standardized benchmarking workflows that can systematically address these dimensions while maintaining scientific relevance and practical utility. Performance benchmarking serves not only to validate simulation technologies but also to identify performance bottlenecks, guide optimization efforts, and ensure that computational resources are utilized effectively in the pursuit of neuroscientific discovery [1].

Conceptual Framework for Benchmarking

A systematic approach to neuronal network benchmarking employs a modular workflow that decomposes the process into distinct, interoperable segments. This conceptual framework comprises several core components that work in concert to produce reliable, reproducible benchmark results. The hardware configuration module specifies the computing infrastructure, including processor architectures, memory hierarchies, interconnect technologies, and specialized neuromorphic systems where applicable [1]. The software configuration module covers operating systems, compiler versions, numerical libraries, and simulator-specific compilation options, all of which can significantly affect performance [1]. The simulator selection module spans the range of available simulation technologies, from CPU-based simulators like NEST and Brian to GPU-accelerated platforms like GeNN and NeuronGPU, along with neuromorphic systems such as SpiNNaker and BrainScaleS [1] [2].

The model specification module defines the neuronal networks used for benchmarking, including their anatomical structure, neuronal and synaptic models, and the dynamics they exhibit. Finally, the data collection and analysis module standardizes how performance metrics are measured, recorded, and interpreted, ensuring comparability across different benchmark executions [1]. This modular decomposition enables researchers to systematically vary parameters within each component while maintaining consistency in others, facilitating precise identification of performance factors and their interactions. The framework's flexibility allows it to accommodate both functional models (validated by their ability to perform specific tasks) and non-functional models (validated through analysis of network structure and dynamics) [1].

Experimental Models for Benchmarking

Model Specifications and Characteristics

Performance benchmarking relies on well-characterized neuronal network models that represent scientifically relevant challenges while being sufficiently standardized to enable fair comparisons across simulators and hardware. These models vary in complexity, dynamics, and computational demands, providing a spectrum of benchmark scenarios that test different aspects of simulation technology.

Table 1: Benchmark Neuronal Network Models

| Model Name | Network Structure | Neuron Model | Synapse Model | Key Characteristics | Primary Applications |
|---|---|---|---|---|---|
| Balanced Random Network | Two-population (80% excitatory, 20% inhibitory) | Leaky Integrate-and-Fire (LIF) | Alpha-shaped postsynaptic currents, STDP | Excitation-inhibition balance, asynchronous irregular activity | Strong and weak scaling studies, simulator performance evaluation [1] |
| Brunel-type Network | Multiple populations with random connectivity | Leaky Integrate-and-Fire (LIF) | Current-based or conductance-based | Configurable dynamics (synchronous/asynchronous states) | Simulation technology validation, performance analysis [1] |
| Multi-area Model | Hierarchical connectivity between brain areas | Various point neuron models | Short-term plasticity, NMDA synapses | Biological connectivity data, metastable dynamics | Memory consumption analysis, communication patterns [1] |
| Morphologically Detailed Networks | Sparse connectivity with spatial constraints | Multi-compartment neurons | Conductance-based synapses | Complex dendritic processing, structural realism | Memory bandwidth tests, load balancing evaluation [1] |
| Synthetic Feature Selection Datasets | Custom connectivity for specific patterns | Simplified binary units | Deterministic connections | Ground truth knowledge, nonlinear relationships | Feature selection method validation, interpretability analysis [3] |

The balanced random network, particularly the "HPC-benchmark model" used in NEST development, represents a cornerstone benchmark in the field. This model typically employs leaky integrate-and-fire neurons with alpha-shaped postsynaptic currents and spike-timing-dependent plasticity (STDP) between excitatory neurons [1]. Its popularity stems from its approximately balanced excitation and inhibition, as observed in cortical networks. This balance generates asynchronous irregular spiking activity, which is computationally challenging, particularly for distributed simulations that require extensive communication.

For specialized benchmarking scenarios, synthetic datasets with precisely controlled properties provide valuable ground truth for evaluating specific capabilities. The RING dataset presents circular, non-linear decision boundaries that linear additive models cannot capture, challenging feature selection methods to recover the features that define the boundary [3]. The XOR dataset implements the archetypal non-linearly separable problem, requiring models to capture synergistic relationships between input features since individual features are uninformative [3]. Combined datasets such as RING+XOR merge these challenges, increasing the number of relevant features and preventing an unfair advantage for methods that consider only small feature sets [3].

Spiking Neural Network Learning Methods

For spiking neural networks (SNNs), benchmarking extends beyond simulation performance to include learning capabilities and robustness. SNNs process information through discrete spikes, which allows them to operate at significantly lower energy cost than traditional computing architectures. Training them, however, presents unique challenges because the spiking mechanism is non-differentiable [4].

Table 2: Spiking Neural Network Learning Methods

| Method | Locality | Biological Plausibility | Computational Efficiency | Key Characteristics | Performance Considerations |
|---|---|---|---|---|---|
| Backpropagation Through Time (BPTT) | Global | Low | Memory-intensive, computationally expensive | Unrolls neural dynamics over time, symmetric weights | High accuracy but biologically implausible [4] |
| Feedback Alignment | Global | Medium | Moderate efficiency | Random matrices for backward passes, no symmetric weights | Reduced need for symmetric weights during learning [4] |
| E-prop | Semi-global | Medium-high | Improved efficiency | Direct feedback alignment, error propagated directly to each layer | Higher biological plausibility while maintaining performance [4] |
| DECOLLE | Local | High | High efficiency | Local error propagation at each layer, random mapping to pseudo-targets | Fully local learning, highest biological plausibility [4] |

The benchmarking of SNN learning methods must consider the trade-off between biological plausibility and performance. Global methods like BPTT typically achieve higher accuracy but at the cost of biological realism and computational efficiency, while local methods like DECOLLE offer greater biological plausibility and efficiency but may sacrifice some performance [4]. Additionally, the inherently recurrent nature of SNNs presents opportunities for enhancing robustness through explicit recurrent connections, which has been shown to improve resistance to adversarial attacks [4].

Performance Metrics and Evaluation

Core Benchmarking Metrics

Comprehensive benchmarking of neuronal networks requires multiple metrics that capture different aspects of performance, from raw speed to energy efficiency and simulation accuracy. These metrics provide complementary insights into simulator capabilities and limitations.

Time-to-solution measures the wall-clock time required to complete a simulation, typically distinguishing between network construction (setup phase) and state propagation (simulation phase) [1]. For performance analysis, it is crucial to specify whether a benchmark uses strong scaling (fixed model size with increasing resources) or weak scaling (model size grows proportionally with resources), as each reveals different performance characteristics [1].

Energy-to-solution quantifies the total energy consumption required to complete a simulation, an increasingly important metric as computational neuroscience addresses larger and more complex models [1]. Measurements may include only compute node consumption or encompass interconnects and support hardware, requiring clear specification for proper interpretation [1].

Memory consumption tracks peak memory usage during simulation execution, which can become a limiting factor for large-scale models with detailed neuronal morphologies or complex synaptic plasticity rules [1].

Simulation accuracy evaluates how closely simulated activity matches expected results, typically assessed through statistical comparisons of firing rates, distributions of membrane potentials, or correlation measures rather than exact spike timing due to the chaotic nature of neuronal network dynamics [1].
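A statistical comparison of this kind can be sketched with the two-sample Kolmogorov-Smirnov statistic applied to per-neuron firing rates from two runs. The choice of this statistic, the function name `ks_statistic`, and the sample values are assumptions made here for illustration; they are not prescribed by [1].

```python
from bisect import bisect_right


def ks_statistic(sample_a, sample_b):
    """Largest absolute gap between the empirical CDFs of two samples."""
    a, b = sorted(sample_a), sorted(sample_b)
    # Both empirical CDFs are step functions that only change at sample
    # values, so the maximum gap is attained at one of those values.
    return max(
        abs(bisect_right(a, x) / len(a) - bisect_right(b, x) / len(b))
        for x in a + b
    )


# Hypothetical per-neuron firing rates (Hz) from two simulator runs.
rates_reference = [5.1, 6.3, 7.0, 7.4, 8.2, 9.0]
rates_candidate = [5.0, 6.1, 7.2, 7.5, 8.4, 9.3]
print(f"KS statistic: {ks_statistic(rates_reference, rates_candidate):.3f}")
```

A value near 0 indicates closely matching rate distributions, while a value near 1 indicates almost no overlap. What counts as an acceptable threshold depends on the model and the sample size.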

Scalability measures how simulation performance changes with increasing computational resources or model size, typically presented as speedup curves or efficiency plots that reveal performance bottlenecks and optimal resource configurations [1].
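As a minimal sketch of how speedup and efficiency can be derived from measured wall-clock times, the functions below use the smallest core count as the baseline. The function names and the timing values are hypothetical and serve only as an illustration.

```python
def strong_scaling_metrics(timings):
    """Speedup and parallel efficiency for a fixed-size model.

    timings maps core count -> wall-clock seconds.
    """
    base_cores = min(timings)
    base_time = timings[base_cores]
    results = {}
    for cores in sorted(timings):
        speedup = base_time / timings[cores]
        # Ideal speedup equals the factor by which resources increased.
        efficiency = speedup / (cores / base_cores)
        results[cores] = (speedup, efficiency)
    return results


def weak_scaling_efficiency(timings):
    """Efficiency when model size grows with the core count.

    With perfect weak scaling the time stays constant, so efficiency is
    the baseline time divided by the measured time.
    """
    base_time = timings[min(timings)]
    return {cores: base_time / timings[cores] for cores in sorted(timings)}


# Hypothetical timings in seconds, for illustration only.
strong = {1: 640.0, 2: 330.0, 4: 175.0, 8: 100.0}
for cores, (speedup, eff) in strong_scaling_metrics(strong).items():
    print(f"{cores:>3} cores: speedup {speedup:.2f}, efficiency {eff:.2f}")
```

Applying the same functions separately to construction and simulation timings shows which phase limits scaling.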

Quantitative Benchmark Results

Rigorous benchmarking studies have revealed significant performance differences across simulation technologies and configurations. Recent evaluations of deep learning-based feature selection methods on synthetic datasets demonstrate that even simple datasets can challenge many DL-based approaches, while traditional methods like Random Forests, TreeShap, mRMR, and LassoNet often show superior performance in identifying non-linear relationships [3].

For spiking neural network simulations, benchmarks comparing learning methods with varying locality reveal important trade-offs. BPTT generally achieves higher accuracy on classification tasks but with substantial computational and memory costs, while local learning methods like DECOLLE offer greater biological plausibility and efficiency but may exhibit accuracy degradation on complex tasks [4]. The addition of explicit recurrent weights in SNNs has been shown to enhance robustness against both gradient-based and non-gradient adversarial attacks, with Centered Kernel Alignment (CKA) metrics demonstrating greater representational stability in recurrent architectures under attack scenarios [4].

Experimental Protocols

Protocol for Balanced Random Network Benchmark

Purpose: To measure simulation performance for a canonical cortical network model with balanced excitation and inhibition, generating asynchronous irregular spiking activity.

Materials:

- Simulator installation (NEST, NEURON, Brian, GeNN, or Arbor)

- High-performance computing resources with appropriate core counts

- Performance monitoring tools (e.g., custom timing scripts, profiling utilities)

Procedure:

Network Construction:

- Implement a two-population network with 80% excitatory and 20% inhibitory neurons [1]

- Set membrane parameters: C_m = 250 pF, τ_m = 20 ms for excitatory neurons; C_m = 250 pF, τ_m = 10 ms for inhibitory neurons [1]

- Configure synaptic weights: w_exc = 0.1 mV (excitatory), w_inh = -0.5 mV (inhibitory) [1]

- Implement connectivity with 10% connection probability between neurons [1]

Simulation Configuration:

- Set simulation duration to 10,000 biological seconds with a timestep of 0.1 ms

- Configure recording of spike times from 1% of randomly selected neurons

- Implement Poisson background input with rate of 10 Hz per neuron

Performance Measurement:

- Execute strong scaling tests with fixed network size (e.g., 100,000 neurons) while varying core counts (1, 2, 4, 8, 16, 32, 64 cores)

- Execute weak scaling tests with network size proportional to core count

- Record separate timings for network construction and simulation phases

- Measure peak memory usage throughout simulation

Data Analysis:

- Calculate firing rates and coefficient of variation for interspike intervals to verify network state

- Compute speedup and parallel efficiency from timing data

- Generate performance plots comparing time-to-solution across configurations

Validation: Verify balanced state with mean firing rates of approximately 5-10 Hz and coefficient of variation of interspike intervals greater than 1 [1].
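The network-state check in the data analysis step can be sketched as follows, using only the Python standard library. The Poisson spike train is a hypothetical stand-in for recorded simulator output, and all function names are illustrative assumptions.

```python
import random
import statistics


def firing_rate_hz(spike_times_ms, duration_ms):
    """Mean firing rate in Hz for one neuron recorded over duration_ms."""
    return len(spike_times_ms) / (duration_ms / 1000.0)


def isi_cv(spike_times_ms):
    """Coefficient of variation (std / mean) of the interspike intervals."""
    times = sorted(spike_times_ms)
    isis = [later - earlier for earlier, later in zip(times, times[1:])]
    if len(isis) < 2:
        return float("nan")  # too few spikes for a meaningful estimate
    return statistics.pstdev(isis) / statistics.fmean(isis)


def poisson_spike_train(rate_hz, duration_ms, seed=0):
    """Hypothetical stand-in for recorded data: a homogeneous Poisson train."""
    rng = random.Random(seed)
    t, spikes = 0.0, []
    while True:
        # Draw the next interspike interval in ms (rate converted to 1/ms).
        t += rng.expovariate(rate_hz / 1000.0)
        if t >= duration_ms:
            return spikes
        spikes.append(t)


spikes = poisson_spike_train(rate_hz=8.0, duration_ms=100_000.0)
print(f"rate = {firing_rate_hz(spikes, 100_000.0):.2f} Hz, CV = {isi_cv(spikes):.2f}")
```

In a real benchmark these statistics would be computed per recorded neuron and then averaged across the recorded subset.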

Protocol for Feature Selection Benchmarking

Purpose: To evaluate the performance of feature selection methods on non-linearly separable synthetic datasets with known ground truth.

Materials:

- Synthetic dataset generation code (RING, XOR, RING+XOR+SUM, DAG)

- Feature selection implementations (Random Forests, TreeShap, mRMR, LassoNet, DL-based methods)

- Computing environment with sufficient memory for high-dimensional data

Procedure:

Dataset Generation:

- Generate RING dataset with n=1000 observations, m=p+k features (p=2 predictive, k variable decoys)

- Implement classification rule: Y=1 when |√((X₀-0.5)² + (X₁-0.5)²) - 0.35| ≤ 0.1151 [3]

- Generate XOR dataset with division of 2D space into 4 quadrants, positives in upper left and lower right

- Create combined datasets (RING+XOR, RING+XOR+SUM) with increased predictive feature counts [3]

Method Evaluation:

- Apply each feature selection method to all dataset variants

- Systematically vary the number of decoy features (k) to assess robustness

- For DL-based methods, implement standard architectures with appropriate regularization

- For gradient-based attribution methods, compute Saliency Maps, Integrated Gradients, and SmoothGrad

Performance Assessment:

- Calculate precision and recall for identification of truly predictive features

- Measure computation time for each method

- Assess stability of selected features across multiple dataset instances

Validation: Compare results to known ground truth, with optimal methods correctly identifying predictive features despite non-linear relationships and increasing decoy dimensions [3].
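The dataset generation and assessment steps can be sketched with NumPy as shown below. The classification rule for RING follows the protocol above. Sampling features uniformly on [0, 1], placing the XOR quadrant boundaries at 0.5, and all function names are assumptions made for this illustration.

```python
import numpy as np


def make_ring(n=1000, k=10, seed=0):
    """RING: features 0 and 1 are predictive, the remaining k are decoys."""
    rng = np.random.default_rng(seed)
    X = rng.uniform(0.0, 1.0, size=(n, 2 + k))  # assumed feature range
    radius = np.sqrt((X[:, 0] - 0.5) ** 2 + (X[:, 1] - 0.5) ** 2)
    y = (np.abs(radius - 0.35) <= 0.1151).astype(int)
    return X, y


def make_xor(n=1000, k=10, seed=0):
    """XOR: positives in the upper-left and lower-right quadrants."""
    rng = np.random.default_rng(seed)
    X = rng.uniform(0.0, 1.0, size=(n, 2 + k))
    # Assumed quadrant boundaries at 0.5 on both predictive features.
    y = ((X[:, 0] < 0.5) ^ (X[:, 1] < 0.5)).astype(int)
    return X, y


def selection_precision_recall(selected, predictive):
    """Precision and recall of selected feature indices against ground truth."""
    selected, predictive = set(selected), set(predictive)
    true_positives = len(selected & predictive)
    precision = true_positives / len(selected) if selected else 0.0
    recall = true_positives / len(predictive) if predictive else 0.0
    return precision, recall


X, y = make_ring(n=1000, k=10)
print("RING shape:", X.shape, "positive fraction:", y.mean())
# Hypothetical output of a feature selection method: indices 0, 1 and 5.
print(selection_precision_recall(selected=[0, 1, 5], predictive=[0, 1]))
```

Repeating the evaluation over several seeds and increasing values of `k` gives the stability and robustness measurements described in the protocol.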

Protocol for SNN Learning Method Benchmarking

Purpose: To compare the performance, efficiency, and robustness of spiking neural network learning methods with varying degrees of locality.

Materials:

- SNN simulator with support for multiple learning rules (BPTT, e-prop, DECOLLE)

- Neuromorphic datasets (N-MNIST, DVS Gesture) and traditional datasets (MNIST, CIFAR-10)

- Computational resources for training and evaluation

Procedure:

Network Configuration:

- Implement Leaky Integrate-and-Fire (LIF) neurons with membrane and synaptic time constants [4]

- Configure network architecture with 800 excitatory and 200 inhibitory neurons

- For explicit recurrence, add recurrent connections with 20% probability

Training Protocol:

- Implement BPTT with surrogate gradient functions to handle non-differentiable spiking

- Configure e-prop with direct feedback alignment and symmetric weights

- Set up DECOLLE with local errors and random feedback matrices

- Train on classification tasks with matched hyperparameters where possible

Evaluation:

- Measure test accuracy on held-out datasets

- Record training time and memory consumption

- Assess robustness against gradient-based (FGSM, PGD) and non-gradient poisoning attacks

- Compute Centered Kernel Alignment (CKA) to analyze representational similarity

Validation: Verify that each learning method produces stable network activity and reasonable accuracy, with global methods typically outperforming local methods on accuracy but requiring more computational resources [4].
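For the representational similarity step, a minimal sketch of linear CKA is given below. Whether [4] uses the linear or a kernel variant is not stated here, so the linear form is an assumption, as are the function name and the synthetic activations.

```python
import numpy as np


def linear_cka(X, Y):
    """Linear Centered Kernel Alignment between two activation matrices.

    X and Y have shape (n_samples, n_features); feature counts may differ.
    """
    X = np.asarray(X, dtype=float)
    Y = np.asarray(Y, dtype=float)
    # Center each feature so the measure ignores constant offsets.
    X = X - X.mean(axis=0, keepdims=True)
    Y = Y - Y.mean(axis=0, keepdims=True)
    cross = np.linalg.norm(Y.T @ X, ord="fro") ** 2
    norm_x = np.linalg.norm(X.T @ X, ord="fro")
    norm_y = np.linalg.norm(Y.T @ Y, ord="fro")
    return cross / (norm_x * norm_y)


# Hypothetical layer activations for clean and perturbed inputs.
rng = np.random.default_rng(0)
clean = rng.normal(size=(100, 16))
perturbed = clean + 0.1 * rng.normal(size=(100, 16))
print(f"CKA(clean, perturbed) = {linear_cka(clean, perturbed):.3f}")
```

Values close to 1 indicate that the representation is largely preserved under the perturbation, which is how representational stability under attack can be quantified.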

The Scientist's Toolkit

Research Reagent Solutions

Successful benchmarking of neuronal networks requires a comprehensive set of software tools, hardware platforms, and methodological approaches. The following table details essential components of the benchmarking toolkit.

Table 3: Essential Research Reagents for Neuronal Network Benchmarking

| Category | Tool/Platform | Primary Function | Key Features | Application Context |
|---|---|---|---|---|
| Simulation Engines | NEST | Simulate spiking neural network models | Focus on neural system dynamics and structure, ideal for networks of any size | Information processing models, network activity dynamics, learning and plasticity [2] |
| Simulation Engines | NEURON | Simulate morphologically detailed neurons | Multi-compartment models, complex electrophysiology | Detailed neuronal modeling, biophysically realistic simulations [1] |
| Simulation Engines | Brian | Simulate spiking neural networks | Flexible model specification, clear code structure | Prototyping, teaching, research with custom neuron models [1] |
| Simulation Engines | GeNN | GPU-accelerated neural network simulations | Code generation for GPU acceleration, support for various neuron models | Large-scale simulations requiring GPU acceleration [1] |
| Benchmarking Frameworks | beNNch | Configuration, execution, and analysis of benchmarks | Records benchmarking data and metadata uniformly, supports reproducibility | Standardized benchmarking across simulators and hardware [1] |
| Benchmarking Frameworks | QuantBench | Evaluation of AI methods in quantitative investment | Industrial-grade standardization, full-pipeline coverage | Financial applications, standardized evaluation [5] |
| Model Specification | PyNN | Simulator-independent network description | Write once, run on multiple simulators, high-level abstraction | Multi-simulator studies, model sharing, reproducible research [2] |
| Model Specification | NESTML | Domain-specific language for neuron models | Precise and concise syntax, automatic code generation | Defining custom neuron models, maintaining model consistency [2] |
| Hardware Platforms | SpiNNaker | Neuromorphic computing platform | Massively parallel architecture, low power consumption | Real-time simulation, neuromorphic applications [2] |
| Hardware Platforms | BrainScaleS | Neuromorphic system with analog neurons | Physical emulation of neural dynamics, high speed | Fast simulation, analog neuromorphic computing [2] |
| Analysis & Visualization | NEST Desktop | Web-based GUI for NEST Simulator | Visual network construction, parametrization, result visualization | Education, prototyping, visual analysis of networks [2] |

Integrated Benchmarking Workflow

A modular workflow for performance benchmarking integrates these components into a systematic process that ensures reproducibility and meaningful comparisons. The reference implementation beNNch demonstrates this approach by decomposing benchmarking into distinct modules for configuration, execution, and analysis [1]. The workflow begins with precise specification of the benchmarking objectives, which determines the appropriate selection of network models, performance metrics, and experimental conditions. The model configuration module then instantiates the chosen network models with all relevant parameters, ensuring consistency across different simulator platforms through standardized descriptions, potentially using PyNN for simulator-independent definitions [2].

The execution environment module configures the hardware and software stack, capturing essential metadata such as compiler versions, library dependencies, and system architecture that might influence performance [1]. During benchmark execution, the workflow employs standardized timing and measurement procedures, clearly distinguishing between network construction time and simulation time to identify potential bottlenecks [1]. The data collection module records both performance metrics and simulation outputs, enabling subsequent verification that the models produced scientifically valid results in addition to performance measurements [1].

Finally, the analysis module processes the raw data to generate comparative performance metrics, scaling plots, and efficiency analyses, while the reporting module formats results in standardized formats suitable for publication and archival [1]. Throughout this process, version control for both model specifications and benchmarking code ensures reproducibility, while containerization technologies can capture the complete software environment to enable replication of results across different systems [1]. This integrated approach addresses the critical challenge of maintaining comparability in a rapidly evolving field with diverse simulation technologies, hardware platforms, and model complexities.
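The separation of construction and simulation timings, together with metadata capture, can be sketched with the standard library as follows. This is not the beNNch API; the function names, record keys, and the toy `build_network` and `simulate` callables are hypothetical placeholders for real simulator calls.

```python
import json
import platform
import time
from contextlib import contextmanager


@contextmanager
def timed_phase(record, name):
    """Store the wall-clock duration of the enclosed block under record[name]."""
    start = time.perf_counter()
    try:
        yield
    finally:
        record[name] = time.perf_counter() - start


def collect_metadata():
    """Minimal environment description; a real workflow would record more."""
    return {
        "python_version": platform.python_version(),
        "platform": platform.platform(),
        "machine": platform.machine(),
    }


def run_benchmark(build_network, simulate):
    """Time network construction and simulation separately."""
    timings = {}
    with timed_phase(timings, "network_construction_s"):
        network = build_network()
    with timed_phase(timings, "simulation_s"):
        output = simulate(network)
    return {"metadata": collect_metadata(), "timings": timings, "output": output}


# Toy stand-ins for simulator calls, for illustration only.
result = run_benchmark(
    build_network=lambda: list(range(100_000)),
    simulate=lambda network: sum(network),
)
print(json.dumps(result, indent=2))
```

Storing the record as JSON next to the simulation output keeps performance data, metadata, and results together for later analysis.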

Reproducibility and comparability form the cornerstone of the scientific method, yet they present significant challenges in the field of simulation science, particularly in computational neuroscience. The inability to replicate or reproduce published research results has emerged as one of the most pressing issues across scientific disciplines [6]. In computational neuroscience specifically, where thousands of models are available, it is rarely possible to reimplement models based on information in original publications, primarily because model implementations are not made publicly available [6]. This challenge impedes scientific progress and undermines the reliability of computational models intended to explain brain dynamics in health and disease.

The development of complex neuronal network models proceeds alongside advancements in network theory and increasing availability of detailed anatomical data on brain connectivity [7]. As models grow in scale and complexity to study interactions between multiple brain areas and long-time scale phenomena such as system-level learning, ensuring reproducibility and comparability becomes both more critical and more challenging. This article examines these challenges within the context of developing modular workflows for performance benchmarking of neuronal network simulations, providing researchers with frameworks and protocols to enhance the reliability of their computational studies.

Defining the Landscape: Reproducibility and Replicability

Terminology and Conceptual Framework

The terminology surrounding reproducibility varies across disciplines, but several key definitions provide a conceptual framework for discussion:

Replicability refers to rerunning the publicly available code developed by the authors of a study and replicating the original results, achieving fully identical results including spike times and all state variables in neuronal network simulations [6] [8].

Reproducibility means reimplementing a model using knowledge from the original study, often in a different simulation tool or programming language, and simulating it to verify the study's results, focusing on key findings rather than identical outputs [6] [8].

Comparability involves comparing simulation results of different tools when the same model has been implemented in them, or comparing results of different models addressing similar research questions [6].

Robustness to analytical variability refers to the ability to identify a finding consistently across variations in methods, using the same data but different analytical approaches [9].
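The difference between replicability and reproducibility can be made concrete with a small check. In the sketch below, a seeded toy generator stands in for a simulator; bitwise equality of the outputs corresponds to replication, while agreement of a summary statistic corresponds to the weaker requirement placed on a reimplementation. All names and values are hypothetical.

```python
import hashlib
import random


def simulate_spike_times(seed, n_spikes=1000, rate_hz=10.0):
    """Toy stand-in for a simulation: a seeded Poisson spike train in ms."""
    rng = random.Random(seed)
    t, spikes = 0.0, []
    for _ in range(n_spikes):
        t += rng.expovariate(rate_hz / 1000.0)
        spikes.append(t)
    return spikes


def result_digest(spike_times):
    """SHA-256 over the exact float representation of every spike time."""
    payload = ",".join(t.hex() for t in spike_times).encode("ascii")
    return hashlib.sha256(payload).hexdigest()


def mean_rate_hz(spike_times):
    """Mean rate from the time of the last spike, in Hz."""
    return len(spike_times) / (spike_times[-1] / 1000.0)


run_a = simulate_spike_times(seed=42)
run_b = simulate_spike_times(seed=42)  # rerun of the same code and seed
run_c = simulate_spike_times(seed=7)   # different random realization

print("identical spike times:", result_digest(run_a) == result_digest(run_b))
print("rates (Hz):", round(mean_rate_hz(run_a), 2), round(mean_rate_hz(run_c), 2))
```

The first comparison only succeeds when code, seed, and numerical environment match exactly; the second tolerates differences in random number generation and is closer to what a reimplementation in another tool can be expected to achieve.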

Table 1: Types of Reproducibility Based on Variable Experimental Components

| Type | Data Source | Method | Team/Lab | Objective |
|---|---|---|---|---|
| Type A (Analytical) | Same data | Same methods | Any | Confirm original analysis [10] |
| Type B (Robustness) | Same data | Different methods | Any | Assess sensitivity to analytical choices [10] |
| Type C (Intra-lab) | New data | Same methods | Same team | Verify internal consistency [10] |
| Type D (Inter-lab) | New data | Same methods | Different team | Confirm external validity [10] |
| Type E (Generalizability) | New data | Different methods | Different team | Establish broad applicability [10] |

The Inverse Relationship Between Reproducibility and Replicability

In computational neuroscience, a fundamental tension exists between replicability and reproducibility. A turnkey system provided on dedicated hardware or a virtual machine will run identically every time (high replicability) but may not be reproducible by outsiders who cannot access or modify the system [8]. Conversely, representations using equations provide the greatest degree of reproducibility across research groups but make obtaining identical results less likely [8]. This inverse relationship necessitates careful consideration of research goals when evaluating computational studies.

Fundamental Challenges in Simulation Science

Documentation and Implementation Gaps

The most fundamental challenge is the unavailability of original model implementations, with published articles often providing incomplete information due to accidental mistakes or limited publication space [6]. When implementations are shared, insufficient documentation regarding parameters, initial conditions, or computational environment creates significant barriers to replication.

Tool and Format Diversity

Computational neuroscience employs a diverse ecosystem of simulation tools, each with specialized capabilities:

- Brian and NEST for spiking neuronal networks using CPUs [6] [1]

- GeNN and NeuronGPU for GPU-accelerated simulations [1]

- NEURON and Arbor for morphologically detailed neuronal networks [1]

- COPASI and STEPS for biochemical reactions and signaling pathways [6] [11]

This tool diversity, while beneficial for addressing different research questions, creates interoperability challenges, because each tool may use different model definition formats (SBML, NeuroML, custom formats), algorithms, numerical precision, or random number generators [11].

Systemic and Cultural Barriers

Cultural practices in scientific publishing often prioritize novelty over replication studies, with few journals explicitly accepting reproducibility studies [6]. Additionally, the lack of standardized specifications for measuring scaling performance on high-performance computing (HPC) systems complicates comparison across studies [7]. Research assessments that prioritize novel findings over replication efforts further disincentivize the substantial effort required for reproducibility studies.

The Modular Workflow Solution: beNNch Framework

Conceptual Framework for Benchmarking

Addressing the challenges of reproducibility and comparability in neuronal network simulations requires systematic approaches. The beNNch framework implements a modular workflow that decomposes the benchmarking process into distinct segments, providing a standardized methodology for performance assessment [7] [12]. This framework addresses five key dimensions of benchmarking complexity:

- Hardware configuration: Computing architectures and machine specifications

- Software configuration: General software environments and instructions

- Simulators: Specific simulation technologies

- Models and parameters: Different models and their configurations

- Researcher communication: Knowledge exchange on running benchmarks [7]

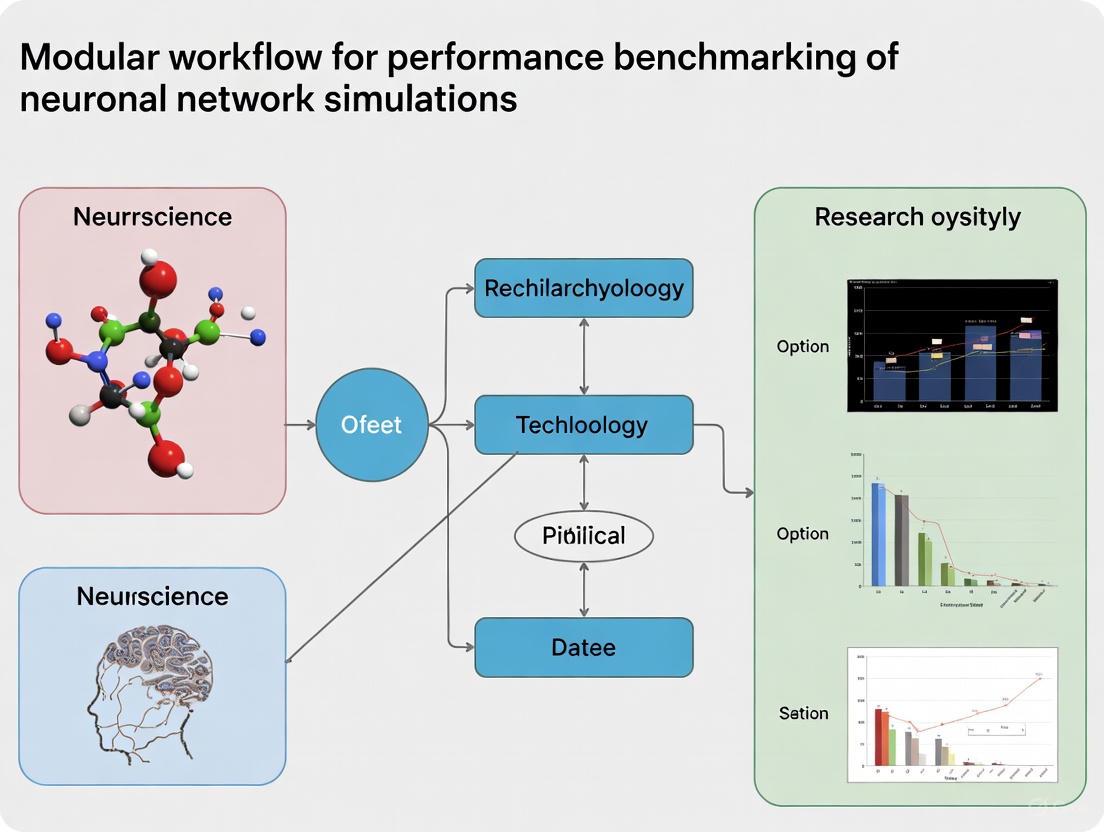

Figure 1: Modular workflow for benchmarking neuronal network simulations, ensuring reproducible performance assessments [7].

Workflow Implementation

The beNNch framework operationalizes the benchmarking process through five interconnected modules:

- Configuration Module: Defines benchmark experiments, including model parameters, simulator settings, and hardware resources

- Execution Module: Runs simulations across specified hardware and software configurations

- Analysis Module: Processes simulation output to extract performance metrics

- Storage Module: Records benchmarking data and metadata in a unified format

- Comparison Module: Enables cross-platform and cross-version performance analysis [7]

This modular approach ensures that benchmarking studies capture all necessary information to foster reproducibility, including detailed records of hardware and software configurations that are often omitted in conventional publications.

Experimental Protocols for Benchmarking

Strong and Weak Scaling Experiments

Performance benchmarking of simulation engines typically employs two complementary approaches:

Weak-scaling experiments proportionally increase the size of the simulated network model with computational resources, maintaining a fixed workload per compute node in perfectly scaling systems [1]. However, scaling neuronal networks inevitably changes network dynamics, complicating interpretation of results [1].

Strong-scaling experiments maintain a constant model size while increasing computational resources, which is more relevant for finding the limiting time-to-solution for network models of natural size [1]. When measuring time-to-solution, studies distinguish between setup phase (network construction) and simulation phase (state propagation) [1].
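A short calculation illustrates why weak scaling is not a pure change of size. The sketch below assumes the 80%/20% population split and a fixed 10% connection probability applied to all ordered neuron pairs, including self-connections; the function name and the neurons-per-core value are hypothetical.

```python
def weak_scaling_sizes(neurons_per_core, cores, exc_fraction=0.8, conn_prob=0.1):
    """Network size and expected synapse counts for one weak-scaling point."""
    n_total = neurons_per_core * cores
    n_exc = round(exc_fraction * n_total)
    # Assumption: every ordered pair is connected independently with conn_prob.
    expected_synapses = round(conn_prob * n_total * n_total)
    return {
        "n_total": n_total,
        "n_exc": n_exc,
        "n_inh": n_total - n_exc,
        "expected_synapses": expected_synapses,
        "synapses_per_core": expected_synapses // cores,
    }


for cores in (1, 2, 4, 8):
    sizes = weak_scaling_sizes(neurons_per_core=1000, cores=cores)
    print(cores, sizes["n_total"], sizes["synapses_per_core"])
```

Under these assumptions the number of synapses per core grows linearly with the core count, so the workload per core is not fixed. Keeping it fixed requires holding the number of incoming connections per neuron constant instead, which changes the connection probability and is one reason the network dynamics shift as the model is scaled.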

Table 2: Key Parameters for Balanced Random Network Benchmark Model

| Parameter Category | Specific Parameters | Typical Values | Function in Benchmarking |
|---|---|---|---|
| Network Architecture | Population ratio (E/I) | 80%/20% | Mimics cortical microcircuitry [1] |
| Neuron Model | Leaky integrate-and-fire (LIF) | Membrane time constant: 20 ms | Computational efficiency for large networks [1] |
| Synapse Model | Alpha-shaped postsynaptic currents | Rise/decay time constants | Biologically plausible temporal dynamics [1] |
| Plasticity | Spike-timing-dependent plasticity (STDP) | Timing-dependent weight changes | Introduces computational complexity [1] |
| Connectivity | Balanced random connectivity | Specific synaptic weights | Maintains excitation-inhibition balance [1] |

Verification and Validation Protocols

To ensure credible simulations, particularly for biomedical applications, rigorous verification and validation protocols are essential:

Verification confirms that the implementation returns correct results with sufficient accuracy for the intended applications, as evidenced, for example, by unit tests confirming that each component is implemented correctly [1].

Validation provides evidence that results are computed efficiently and address the intended research questions, comparing new technologies to previous studies based on relevant performance measures [1].

For clinical applications, establishing credibility is paramount. As computational neuroscience moves toward clinical applications, validation must demonstrate not just technical correctness but also clinical relevance and predictive power [8].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Research Reagent Solutions for Reproducible Simulation Science

| Tool/Category | Specific Examples | Primary Function | Interoperability Considerations |

|---|---|---|---|

| Simulation Engines | NEST, Brian, NEURON, Arbor, GeNN | Simulate neuronal networks at different scales | Different model description formats; partial SBML/NeuroML support [1] [11] |

| Model Description Formats | SBML, NeuroML, CellML, SBtab | Standardized model representation | Conversion tools needed (SBFC, VFGEN) [11] |

| Parameter Estimation | MCMCSTAT (MATLAB), pyABC/pyPESTO (Python) | Estimate model parameters from data | Different algorithmic implementations and file formats [11] |

| Sensitivity Analysis | Uncertainpy (Python) | Global sensitivity analysis | Dependency on specific programming languages [11] |

| Benchmarking Frameworks | beNNch | Standardized performance assessment | Records data and metadata for reproducibility [7] |

| Version Control | Git, GitHub | Track changes and collaborate | Universal format, but requires discipline in usage [9] |

Figure 2: Interoperability workflow for biochemical models using format conversion to enable multi-simulator validation [11].

Protocols for Enhanced Reproducibility

Model Documentation and Sharing Protocol

Comprehensive model documentation should include:

- Mathematical specifications: Complete equations, parameters, and initial conditions in tabular format [6]

- Implementation code: Well-commented, version-controlled code with dependency specifications

- Simulation protocols: Step-by-step procedures for reproducing simulations

- Data sharing: Original data and scripts for analysis in standardized formats

The use of human-readable formats like SBtab facilitates manual editing and inspection while enabling automated conversion to machine-readable formats like SBML [11].

Metadata Recording for Benchmarking Studies

Consistent recording of metadata is essential for reproducing benchmarking results:

- Hardware specifications: Compute nodes, processors, memory, interconnect details

- Software environment: Operating system, compiler versions, library dependencies

- Simulator configuration: All settings and flags used during simulation

- Performance metrics: Time-to-solution, memory consumption, energy usage where possible

Frameworks like beNNch provide structured formats for capturing this metadata systematically [7].
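The metadata categories listed above can be captured in a single machine-readable record per run. The following is a minimal sketch using only the Python standard library; the field names and the `collect_metadata` helper are illustrative assumptions and do not reproduce the format used by beNNch or any other framework.

```python
import json
import os
import platform
import sys
from datetime import datetime, timezone


def collect_metadata(simulator_name, simulator_version, simulator_settings):
    """Gather an illustrative metadata record for one benchmark run.

    The layout is hypothetical; adapt the keys to your own workflow.
    """
    return {
        "timestamp_utc": datetime.now(timezone.utc).isoformat(),
        "hardware": {
            "hostname": platform.node(),
            "machine": platform.machine(),
            "processor": platform.processor(),
            "logical_cpus": os.cpu_count(),
        },
        "software": {
            "os": platform.platform(),
            "python": sys.version.split()[0],
        },
        "simulator": {
            "name": simulator_name,
            "version": simulator_version,
            "settings": simulator_settings,
        },
        # Filled in after the run; None marks "not measured".
        "metrics": {
            "time_to_solution_s": None,
            "peak_memory_gb": None,
            "energy_j": None,
        },
    }


# Placeholder simulator name, version, and settings for illustration only.
record = collect_metadata("example-simulator", "0.0.0", {"threads_per_process": 4})
metadata_json = json.dumps(record, indent=2, sort_keys=True)
print(metadata_json)
```

Compiler versions, library dependencies, and interconnect details usually have to be queried from the build system or the cluster environment, so they are left out of this sketch.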

Reproducibility and comparability challenges in simulation science require multifaceted solutions addressing technical, methodological, and cultural dimensions. Modular workflows for benchmarking, such as the beNNch framework, provide structured approaches to assess simulation performance while capturing essential metadata for reproducibility. Standardized protocols for model documentation, format conversion, and performance measurement enable more reliable building upon existing work in computational neuroscience.

As the field progresses toward more complex multiscale models and clinical applications, the adoption of these practices and tools will be essential for constructing a solid foundation of reproducible, replicable, and robust computational research. The development and widespread adoption of community standards, coupled with cultural shifts that value reproducibility as much as novelty, will drive future advances in simulation science.

In computational neuroscience, the development of complex neuronal network models to explain brain dynamics in health and disease necessitates advancements in simulation technology. Progress in simulation speed enables larger models that study interactions between multiple brain areas and investigate long-term phenomena like system-level learning [7]. The development of state-of-the-art simulation engines relies critically on benchmark simulations that assess performance metrics across various combinations of hardware and software configurations [7] [1]. This application note details the core dimensions of benchmarking—hardware, software, simulators, and models—within the context of a modular workflow for performance benchmarking of neuronal network simulations, providing researchers with structured protocols and reference data.

Core Dimensions of Benchmarking

Benchmarking experiments in neuronal network simulations are complex and multidimensional. The complexity can be decomposed into five main dimensions: "Hardware configuration," "Software configuration," "Simulators," "Models and parameters," and "Researcher communication" [7] [1]. This document focuses on the first four technical dimensions, which form the foundation of reproducible performance evaluation.

Table 1: Core Dimensions of Neuronal Network Simulation Benchmarking

| Dimension | Components | Considerations for Benchmarking |

|---|---|---|

| Hardware Configuration | Conventional HPC (CPU clusters), GPUs, Neuromorphic systems (e.g., SpiNNaker, BrainScaleS) [7] [1] [13] | Architecture, memory hierarchy, interconnect performance, power consumption [7] [13]. |

| Software Configuration | Operating system, compilers, numerical libraries, simulator version, Python/other interpreter versions [7] [1] | Software versions, compiler flags, environment variables, dependencies that impact performance [7]. |

| Simulators | NEST, Brian, GeNN, NEURON, Arbor, CARLsim [7] [1] [14] | Underlying algorithms (clock-driven vs. event-driven), support for neuron models, parallelism, and scalability [7] [14]. |

| Models and Parameters | Point neurons (e.g., LIF, Izhikevich) vs. morphologically detailed models; network scale and connectivity; synapse models [7] [1] [14] | Model complexity, network dynamics (balanced, chaotic), stationarity of activity, and numerical precision [7] [1]. |

Hardware Configuration

The choice of hardware platform significantly influences simulation performance and is a primary variable in benchmarking.

- Conventional HPC Systems: High-performance CPU clusters are widely used for large-scale network simulations. Benchmarks are often performed on contemporary supercomputers, and performance is assessed through strong-scaling (fixed model size, increasing resources) and weak-scaling (model size grows proportionally to resources) experiments [7]. Weak-scaling of neuronal networks can alter network dynamics, making strong-scaling more relevant for finding the limiting time-to-solution for a fixed model [7] [1].

- GPU Accelerators: GPU-based simulators like GeNN and NeuronGPU leverage massive parallelism for substantial speedups, with performance evaluated across different GPU tiers [7] [1].

- Neuromorphic Systems: Dedicated hardware like SpiNNaker (digital) and BrainScaleS (analog-mixed-signal) emulates neural networks with high energy efficiency, often targeting real-time operation [7] [13]. Benchmarking these requires consideration of physical acceleration factors and unique constraints [13].

Software Configuration

The software environment must be meticulously documented to ensure reproducibility, as performance can be sensitive to versions and configurations [7]. Key components include the operating system, compiler (e.g., GCC, NVCC) and its flags, MPI and CUDA versions, and numerical libraries (e.g., BLAS, LAPACK). The specific version of the simulator and its installation configuration are also critical [7] [15].

Simulators

Simulators are the core software engines for neuronal network simulations, each with distinct design goals, strengths, and performance characteristics.

Table 2: Selected Neuronal Network Simulators and Key Attributes

| Simulator | Primary Hardware Target | Simulation Strategy | Notable Features |

|---|---|---|---|

| NEST [7] [14] | CPU-based HPC clusters | Clock-driven, synchronous | Optimized for large-scale networks of point neurons; supports precise spike times. |

| Brian 2 [1] [16] | CPU, GPU (via code generation) | Clock-driven, synchronous | Intuitive, equation-oriented definition of models; high flexibility via runtime code generation. |

| GeNN [7] [1] [13] | GPU, CPU | Clock-driven, synchronous | Code-generation framework (GPU-enhanced Neuronal Networks) that produces optimized GPU code for accelerated simulation. |

| NEURON [7] [14] | CPU | Clock-driven, synchronous | Specializes in models with detailed morphology (multi-compartment neurons). |

| Arbor [7] | HPC systems (CPU/GPU) | Clock-driven, synchronous | A modern, performance-portable simulator for detailed neuron models on HPC systems. |

| SpiNNaker [7] [13] | Neuromorphic Hardware (SpiNNaker) | Asynchronous, event-based | Massively parallel, low-power system designed for real-time simulation. |

Two primary simulation strategies exist: synchronous (clock-driven) algorithms, where all neurons are updated simultaneously at discrete time steps, and asynchronous (event-driven) algorithms, where neurons are updated only when spikes are received or emitted [14]. Most large-scale simulators use clock-driven approaches for their simplicity and efficiency in handling large numbers of connections [14].
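To make the distinction concrete, the toy sketch below advances a single LIF neuron with constant input in two ways: on a fixed time grid (clock-driven) and by jumping directly from one spike event to the next (event-driven). All function names and parameter values are assumptions chosen for illustration, apart from the 20 ms membrane time constant; real simulators handle synaptic input, delays, and many neurons, none of which appear here.

```python
import math


def simulate_clock_driven(n_steps, dt_ms=0.1, tau_m_ms=20.0,
                          v_thresh=1.0, input_current=1.5):
    """Update the neuron at every grid step, whether or not anything happens."""
    v = 0.0
    decay = math.exp(-dt_ms / tau_m_ms)
    spike_times = []
    for step in range(1, n_steps + 1):
        # Exact solution of dv/dt = (I - v) / tau over one step of length dt.
        v = input_current + (v - input_current) * decay
        if v >= v_thresh:
            spike_times.append(step * dt_ms)  # spike is bound to the grid
            v = 0.0                           # reset after the spike
    return spike_times


def simulate_event_driven(t_stop_ms, tau_m_ms=20.0,
                          v_thresh=1.0, input_current=1.5):
    """Compute each threshold crossing analytically; no work between events."""
    # Time for v to rise from 0 to v_thresh under constant input.
    interval = tau_m_ms * math.log(input_current / (input_current - v_thresh))
    spike_times = []
    t = interval
    while t <= t_stop_ms:
        spike_times.append(t)
        t += interval
    return spike_times


clock_spikes = simulate_clock_driven(n_steps=1000)     # 100 ms at dt = 0.1 ms
event_spikes = simulate_event_driven(t_stop_ms=100.0)
print(len(clock_spikes), len(event_spikes))
```

The clock-driven loop performs 1000 state updates, while the event-driven version performs one computation per spike; in exchange, the clock-driven spike times are rounded up to the grid and the event-driven version relies on a closed-form solution that exists only for simple models.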

Models and Parameters

The choice of network model directly determines the computational load and is therefore a fundamental benchmark dimension.

- Neuron Models: Range from computationally simple Leaky Integrate-and-Fire (LIF) models, suitable for large-scale network simulations, to complex, biophysical Hodgkin-Huxley (HH) type models that simulate action potential generation in detail [7] [1] [14].

- Canonical Benchmark Networks: The most frequently used model for performance tests is the balanced random network [1]. A common variant is the HPC-benchmark model, which employs LIF neurons with alpha-shaped post-synaptic currents and spike-timing-dependent plasticity (STDP) to represent a scientifically relevant and computationally demanding workload [7] [1].

- Network Dynamics and Scaling: Model dynamics (e.g., balanced, chaotic) affect computational load, as non-stationary activity with transients can cause performance fluctuations [7]. When scaling a network model for benchmarking, it is crucial to note that simply increasing the size can change its dynamics, complicating the interpretation of weak-scaling results [7] [1].

Figure 1: The core dimensions of benchmarking neuronal network simulations, showing the hierarchy of key components within each dimension.

Experimental Protocols for Benchmarking

This section provides detailed methodologies for setting up and executing performance benchmarks.

Protocol: Strong and Weak Scaling Experiments

Objective: To measure the parallel scaling efficiency of a simulator on a given hardware platform.

Background: Strong-scaling measures time-to-solution for a fixed network model while increasing computational resources. Weak-scaling increases the model size proportionally with resources, aiming to keep the workload per compute node constant [7].

- Model Selection: Choose a benchmark model, typically a balanced random network of LIF neurons with plastic synapses [7] [1].

- Baseline Setup:

  - For strong-scaling, define the fixed model size (e.g., 100,000 neurons).

  - For weak-scaling, define the model size per compute core/node (e.g., 10,000 neurons per node).

- Resource Scaling: Run the simulation across a range of compute nodes/cores (e.g., 1, 2, 4, 8, ..., 128 nodes).

- Execution:

  - Use a batch system (e.g., Slurm) to schedule jobs.

  - Execute the simulation for a defined biological time (e.g., 10 seconds of model time).

  - Ensure the simulator is configured to output precise timing information for both the network construction (setup) and the state propagation (simulation) phases [7].

- Data Collection: Record for each run: number of nodes/cores, wall-clock time for setup and simulation, total simulated biological time, and any spike data for verification.
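The baseline and resource-scaling steps of this protocol amount to a table of runs. The sketch below generates such a run plan for both scaling modes using the example sizes from the protocol; the `scaling_plan` helper and its dictionary keys are hypothetical names, not part of any benchmarking framework.

```python
def scaling_plan(node_counts, mode, neurons_fixed=100_000, neurons_per_node=10_000):
    """Return one entry per run with the model size implied by the scaling mode."""
    if mode not in ("strong", "weak"):
        raise ValueError("mode must be 'strong' or 'weak'")
    plan = []
    for nodes in node_counts:
        if mode == "strong":
            total = neurons_fixed             # model size stays constant
        else:
            total = neurons_per_node * nodes  # model grows with the resources
        plan.append({
            "nodes": nodes,
            "total_neurons": total,
            "neurons_per_node": total / nodes,
        })
    return plan


node_counts = [2 ** k for k in range(8)]  # 1, 2, 4, ..., 128
strong_plan = scaling_plan(node_counts, "strong")
weak_plan = scaling_plan(node_counts, "weak")

for run in weak_plan:
    print(run)
```

In the strong-scaling plan the per-node workload shrinks as nodes are added, whereas in the weak-scaling plan it stays at 10,000 neurons per node while the total network grows to 1.28 million neurons on 128 nodes.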

Protocol: Cross-Platform Performance and Energy Efficiency

Objective: To compare simulation performance and energy-to-solution across different hardware platforms and simulators.

Background: Different simulators are optimized for different hardware, and energy efficiency is a key metric, especially for neuromorphic systems [13].

- Standardized Model: Implement the same network model (e.g., the HPC-benchmark model or a cortical microcircuit model [13]) on all target platforms using their respective interfaces (e.g., PyNN, native scripts).

- Fixed Workload: Run the simulation with identical parameters (simulated time, network size, etc.).

- Performance Measurement: Record the total wall-clock time-to-solution.

- Energy Measurement:

  - If possible, use integrated power meters (e.g., via the HPC system's monitoring infrastructure) or, for smaller systems, an external power meter.

  - Record the total energy consumed in Joules. Specify whether the measurement includes only compute nodes or also interconnects and support hardware [7].

- Analysis: Calculate and compare the time-to-solution and energy-to-solution across the different platforms.

The Scientist's Toolkit

This section details essential tools and "research reagents" required for conducting rigorous benchmarking experiments.

Table 3: Essential Tools and Reagents for Benchmarking

| Category | Item | Function / Relevance |

|---|---|---|

| Benchmarking Frameworks | beNNch [12] [15] | A software framework for configuring, executing, and analyzing benchmarks in a unified, reproducible way. |

| | SNABSuite [13] | A cross-platform benchmark suite for neuromorphic hardware and simulators. |

| | NeuroBench [17] | A community-developed benchmark framework for neuromorphic computing algorithms and systems. |

| Reference Models | HPC-Benchmark Model [7] [1] | A balanced random network of LIF neurons with STDP; a standard for simulator performance tests. |

| | Cortical Microcircuit Model [13] | A full-scale model used as a de-facto standard workload for comparing large-scale implementations. |

| | Rallpacks [18] | Early benchmarks for evaluating the speed and accuracy of simulators, particularly for single neuron models. |

| Simulation Engines | NEST, Brian 2, GeNN, etc. (See Table 2) | Core simulation technology to be evaluated. |

| Analysis & Metrics | Wall-clock time | The primary measure for time-to-solution [7]. |

| | Energy-to-solution | A critical measure for evaluating efficiency, especially on neuromorphic and edge devices [13]. |

| | Scaling efficiency | The ratio of observed to ideal performance in strong- and weak-scaling experiments (e.g., measured speedup divided by the number of processing elements in strong scaling). |

Robust benchmarking of neuronal network simulations is a multi-faceted endeavor that requires careful consideration of hardware, software, simulators, and models. Standardized protocols and frameworks like beNNch and SNABSuite are vital for ensuring reproducibility and meaningful comparisons. By adhering to structured workflows and documenting all dimensions of the benchmarking process, researchers can effectively guide the development of more efficient simulation technology, ultimately enabling more complex and scientifically ambitious brain models.

Within the context of a broader thesis on modular workflows for performance benchmarking in neuronal network simulations research, the precise definition and measurement of core performance metrics is paramount. The development of complex network models to explain brain function in health and disease relies on advancements in simulation technology, which in turn depends on rigorous benchmarking [7] [1]. Time-to-solution, energy-to-solution, and memory consumption represent the triad of key resources whose efficient use enables the construction of larger network models with extended explanatory scope and facilitates the study of long-term effects such as system-level learning [7]. This document provides detailed application notes and experimental protocols for the consistent measurement and reporting of these metrics, fostering comparability and reproducibility in computational neuroscience.

Metric Definitions and Significance

Efficiency in neuronal network simulations is measured by the resources required to achieve a scientific result [1]. The table below defines the core metrics and their scientific relevance for researchers and drug development professionals.

Table 1: Core Performance Metrics in Neuronal Network Simulations

| Metric | Formal Definition | Primary Significance | Secondary Significance |

|---|---|---|---|

| Time-to-Solution | Total wall-clock time required to complete a simulation, from network setup to the end of state propagation [7] [1]. | Determines the feasibility of simulating long-time-scale processes like learning and brain development [7]. | Enables real-time performance for robotics and closed-loop simulations; sub-real-time performance accelerates research cycles [7]. |

| Energy-to-Solution | Total energy consumed (often in Joules) by the hardware to complete a simulation [7] [1]. | Critical for developing sustainable HPC workflows and neuromorphic systems with hardware constraints [7] [19]. | Reveals trade-offs between computational speed and power consumption, impacting operational costs and hardware design [19]. |

| Memory Consumption | Peak physical memory (RAM) allocated during a simulation, including both model data and execution overhead [7]. | Dictates the maximum size and complexity of a network model that can be simulated on a given hardware system [7]. | Influences performance via memory bandwidth constraints and is a key design factor for in-memory compute architectures [7]. |

These metrics are not independent; optimizing one can directly impact the others. For instance, reducing time-to-solution often lowers energy-to-solution, while strategies to reduce memory footprint might increase computational time. Furthermore, the precise interpretation of these metrics depends on the specific simulation context. For time-to-solution, studies must distinguish between the setup phase (network construction) and the simulation phase (state propagation) [7]. For energy-to-solution, it is crucial to specify the measurement scope—whether it includes only compute nodes or also interconnects and support hardware [7].

Experimental Protocols for Metric Measurement

Protocol for Time-to-Solution Measurement

Objective: To reproducibly measure the wall-clock time required for the setup and execution of a neuronal network simulation.

Materials: beNNch framework [7], HPC system or workstation, target simulator (e.g., NEST, NEURON, Brian, GeNN) [7] [1].

Methodology:

- Instrument the Simulation Code: Use high-resolution timers (e.g., std::chrono in C++ or time.perf_counter in Python) to record timestamps.

  - Setup Phase: Start timer before network creation; stop after all neurons and synapses are instantiated.

  - Simulation Phase: Start timer after setup; stop after the final simulation time step is complete.

- Execute Benchmarks: Run the simulation multiple times (minimum n=3) to account for system noise.

- Data Recording: Record the timing data and all relevant metadata, including simulator version, hardware specification, and network model parameters, using a standardized framework like beNNch [7].

Data Analysis: Calculate the mean and standard deviation of the total time-to-solution (setup + simulation) across runs. Report both the total and the phase-specific times.
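The timing steps of this protocol can be sketched in Python with `time.perf_counter`. The two workload functions below are placeholders standing in for a real simulator's network construction and state propagation calls; they and the helper names `timed_run` and `summarize` are assumptions made for illustration.

```python
import statistics
import time


def timed_run(build_network, run_simulation):
    """Time the setup and simulation phases of one run separately."""
    t_start = time.perf_counter()
    network = build_network()        # setup phase: network construction
    t_built = time.perf_counter()
    run_simulation(network)          # simulation phase: state propagation
    t_done = time.perf_counter()
    return {
        "setup_s": t_built - t_start,
        "simulation_s": t_done - t_built,
        "total_s": t_done - t_start,
    }


def summarize(runs):
    """Mean and sample standard deviation per phase across repeated runs."""
    summary = {}
    for key in ("setup_s", "simulation_s", "total_s"):
        values = [run[key] for run in runs]
        summary[key] = {
            "mean": statistics.mean(values),
            "stdev": statistics.stdev(values),
        }
    return summary


# Placeholder workload; replace with calls into the simulator under test.
def build_network():
    return [0.0] * 10_000


def run_simulation(network):
    for _ in range(20):
        network[:] = [0.99 * v + 0.01 for v in network]


runs = [timed_run(build_network, run_simulation) for _ in range(3)]  # n = 3
summary = summarize(runs)
print(summary)
```

In a distributed simulation the timers would typically be read after a synchronization point so that the slowest process determines the reported time; this sketch covers only the single-process case.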

Protocol for Energy-to-Solution Measurement

Objective: To quantify the total energy consumed by the hardware during a simulation.

Materials: HPC system with integrated power meters (e.g., via IPMI or dedicated sensors), external power meter (for smaller systems), energy monitoring software (e.g., JURECA's power measurement infrastructure) [7].

Methodology:

- Select Measurement Scope: Define whether energy measurement includes compute nodes only or the entire supporting infrastructure [7].

- Establish Baseline: Measure the system's idle power consumption before launching the simulation.

- Monitor During Execution: Use the chosen measurement tool to sample power (in Watts) at a fixed, documented rate (e.g., 1 Hz) throughout the simulation's duration.

- Data Recording: Log power samples alongside simulation start and end timestamps.

Data Analysis: Integrate the power-over-time data to calculate total energy consumed in Joules (J):

Energy = Σ (Power_measured - Power_idle) × Time_sample_interval

Report the total energy and the average power.
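The summation above translates directly into code. The sketch below assumes equally spaced power samples and a constant idle baseline; the sample values are invented for illustration and the function name `energy_to_solution` is hypothetical.

```python
def energy_to_solution(power_samples_w, sample_interval_s, idle_power_w=0.0):
    """Integrate equally spaced power samples (rectangle rule).

    Returns the gross energy, the energy above the idle baseline,
    and the average measured power.
    """
    if not power_samples_w:
        raise ValueError("at least one power sample is required")
    gross_j = sum(power_samples_w) * sample_interval_s
    net_j = sum(p - idle_power_w for p in power_samples_w) * sample_interval_s
    average_w = sum(power_samples_w) / len(power_samples_w)
    return {"gross_energy_j": gross_j, "net_energy_j": net_j, "average_power_w": average_w}


# Invented example: four samples taken at 1 Hz, idle baseline of 100 W.
result = energy_to_solution([200.0, 250.0, 300.0, 250.0],
                            sample_interval_s=1.0, idle_power_w=100.0)
print(result)
# {'gross_energy_j': 1000.0, 'net_energy_j': 600.0, 'average_power_w': 250.0}
```

Reporting both the gross and the idle-corrected value, together with the measurement scope, makes it easier to compare results between systems with different idle consumption.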

Protocol for Memory Consumption Measurement

Objective: To measure the peak physical memory usage during a simulation.

Materials: HPC system, system monitoring tools (e.g., /proc/self/status on Linux, getrusage system call, or HPC cluster monitoring tools).

Methodology:

- Select Measurement Method: Instrument the simulator to periodically check memory usage or use an external tool to monitor the entire process.

- Sample Memory Usage: Track the resident set size (RSS) or equivalent metric at regular intervals (e.g., every second or every simulation time step).

- Data Recording: Log the memory usage samples.

Data Analysis: Identify the peak memory usage value from all samples. Report this value in Gigabytes (GB).
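For a single process on a Unix-like system, the peak resident set size can be read after the run through `getrusage`, exposed in Python by the standard `resource` module. This is a minimal sketch under two assumptions: the module is available (it is not on Windows), and `ru_maxrss` is reported in kilobytes on Linux and in bytes on macOS. The `bytearray` allocation is a stand-in for simulator state.

```python
import resource
import sys


def peak_rss_gb():
    """Peak resident set size of the current process in GB (1 GB = 1e9 bytes)."""
    peak = resource.getrusage(resource.RUSAGE_SELF).ru_maxrss
    # ru_maxrss units differ by platform: bytes on macOS, kilobytes on Linux.
    bytes_per_unit = 1 if sys.platform == "darwin" else 1024
    return peak * bytes_per_unit / 1e9


baseline_gb = peak_rss_gb()
ballast = bytearray(b"\x01") * 50_000_000  # roughly 50 MB standing in for model data
peak_gb = peak_rss_gb()
print(f"peak RSS before: {baseline_gb:.3f} GB, after: {peak_gb:.3f} GB")
```

For MPI-parallel simulations this value is per process, so the per-rank peaks would need to be collected and summed or reported per node.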

A Modular Workflow for Integrated Benchmarking

A modular workflow is essential for managing the complexity of benchmarking studies, which involve multiple dimensions: hardware configuration, software configuration, simulators, and models [7]. The following workflow, implemented in tools like beNNch, decomposes the benchmarking process into standardized, reproducible segments [7].

Figure 1: Modular benchmarking workflow for neuronal network simulations.

Workflow Description:

- Module 1: Hardware Configuration: Document the precise HPC system, compute node specifications (CPU/GPU type, cores per node, memory), interconnect, and any power measurement capabilities [7].

- Module 2: Software Configuration: Record the operating system, compiler version, libraries, and the specific version of the simulator software being tested [7] [1].

- Module 3: Model & Parameters: Define the neuronal network model in a machine-readable format. This includes neuron and synapse models, connectivity rules, and all parameters. Using standardized models (e.g., the HPC-benchmark model [1]) facilitates comparison.

- Module 4: Simulator Execution: Run the simulation according to the measurement protocols described above, controlling for scaling type (strong-scaling vs. weak-scaling) [7].

- Module 5: Data & Metadata Collection: Systematically record all performance metrics (time, energy, memory) alongside the complete hardware, software, and model metadata. The beNNch framework is designed for this unified recording [7].

- Module 6: Analysis & Visualization: Analyze the collected data to generate performance profiles, scaling plots, and identify performance bottlenecks.

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential "research reagents"—software, models, and hardware—required for conducting performance benchmarking in neuronal network simulations.

Table 2: Essential Research Reagents for Performance Benchmarking

| Item Name | Type | Function in Benchmarking | Example Tools / Models |

|---|---|---|---|

| Simulation Engines | Software | Core technology for executing neuronal network models; different engines are optimized for different hardware and model types. | NEST [7], NEURON [1], Brian [7], GeNN [7], Arbor [1] |

| Benchmarking Framework | Software | Configures, executes, and analyzes benchmarks in a standardized way; ensures reproducible collection of data and metadata. | beNNch [7] |

| Standardized Network Models | Model | Provides a consistent, scientifically relevant workload for comparing simulator performance across studies and hardware. | "HPC-benchmark" model [1], balanced random networks [1], multi-area models [7] |

| HPC & Neuromorphic Hardware | Hardware | The physical platform for execution; performance is highly dependent on the architecture (CPU, GPU, neuromorphic). | HPC clusters (JUQUEEN, JURECA) [7], GPU nodes [7], SpiNNaker [7] |

| Performance Analysis Tools | Software | Measures low-level hardware counters, power consumption, and memory usage during simulation. | IPMI tools, Linux perf, getrusage, custom power monitoring software [7] |

| Visualization Tools | Software | Aids in the analysis and comparative evaluation of different Spiking Neural Network (SNN) models and their performance. | RAVSim v2.0 [20] |

The rigorous and standardized application of the metrics and protocols outlined in this document is critical for the advancement of simulation technology in computational neuroscience. By adopting a modular workflow that meticulously records data and metadata, researchers can ensure their benchmark results are reproducible, comparable, and meaningful. This disciplined approach directly supports the broader thesis of modular benchmarking by providing a concrete methodology for assessing performance, ultimately guiding the development of more efficient simulation technology and enabling ever more detailed and predictive models of brain function.

In the field of computational neuroscience, the development of large-scale neuronal network models is essential for understanding brain function and dysfunction. The pursuit of this goal is tightly coupled with advancements in high-performance computing (HPC). As researchers strive to simulate ever-larger networks—from models representing specific brain regions to entire brains—the efficiency of the simulation software becomes paramount [7] [1]. Performance benchmarking is therefore a critical practice, providing the data needed to optimize simulation engines, guide resource allocation, and ultimately enable novel scientific research that would otherwise be computationally intractable [7]. Within this benchmarking process, scaling analysis forms the cornerstone, quantifying how a simulation's performance changes as computational resources are varied. This document details the core paradigms of scaling analysis—strong scaling and weak scaling—within the context of a modular workflow for benchmarking neuronal network simulations, providing application notes and experimental protocols for researchers.

Conceptual Foundations: Strong and Weak Scaling

Scalability, in the context of HPC, refers to the ability of hardware and software to deliver greater computational power when the amount of resources is increased [21] [22]. For software, this is often measured as parallelization efficiency. The fundamental metric for both scaling types is speedup, defined as:

Speedup = t(1) / t(N)

where t(1) is the computational time using one processor, and t(N) is the time using N processors [21] [22]. The two scaling paradigms differ in how the problem size is treated during this measurement.

Strong Scaling and Amdahl's Law

Strong scaling measures how the solution time varies with the number of processors for a fixed total problem size [21] [23]. The objective is to reduce the execution time of a fixed workload by adding more computational resources [22].

This paradigm is governed by Amdahl's Law, which posits that the maximum speedup is limited by the serial (non-parallelizable) fraction of the code. Amdahl's Law is formulated as:

Speedup = 1 / (s + p / N)

Here, s is the proportion of time spent on the serial part, p is the proportion of time spent on the parallelizable part (s + p = 1), and N is the number of processors [21] [22]. As N approaches infinity, the maximum possible speedup converges to 1/s, creating a hard ceiling on performance improvements for a fixed problem [21]. Strong scaling is particularly relevant for finding the "sweet spot" that allows a computation to complete in a reasonable amount of time without wasting too many cycles to parallel overhead [22]. It is most often applied to long-running, CPU-bound applications [22].
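A short numerical sketch of Amdahl's Law shows how quickly the ceiling of 1/s is approached. The serial fraction of 0.05 is an arbitrary value chosen for illustration, and the function name is hypothetical.

```python
def amdahl_speedup(serial_fraction, n_processors):
    """Speedup predicted by Amdahl's Law: 1 / (s + p / N) with p = 1 - s."""
    parallel_fraction = 1.0 - serial_fraction
    return 1.0 / (serial_fraction + parallel_fraction / n_processors)


s = 0.05  # illustrative serial fraction
for n in (1, 16, 256, 4096):
    print(f"N = {n:>4}: speedup = {amdahl_speedup(s, n):.2f}")
print(f"upper bound 1/s = {1.0 / s:.0f}")
```

With 5% serial work, 16 processors give a speedup of about 9.1 rather than 16, and no number of processors can exceed a speedup of 20.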

Weak Scaling and Gustafson's Law

Weak scaling assesses how the solution time changes when both the problem size and the number of processors are increased proportionally [21] [23]. The goal is to maintain a constant execution time per unit of work while handling a larger overall problem [23].

This paradigm is described by Gustafson's Law, which provides a formula for scaled speedup:

Scaled Speedup = s + p × N

The variables s, p, and N have the same meanings as in Amdahl's Law [21]. In contrast to Amdahl's Law, Gustafson's Law suggests that if the serial fraction does not increase with the problem size, the scaled speedup can increase linearly with the number of processors, with no theoretical upper limit [21]. Weak scaling is ideally suited for large, memory-bound applications where the required memory cannot be satisfied by a single node, allowing researchers to solve progressively larger problems [22].
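Gustafson's formula can be evaluated in the same way. The sketch below uses an arbitrary serial fraction of 0.05 for illustration; the function name is hypothetical.

```python
def gustafson_scaled_speedup(serial_fraction, n_processors):
    """Scaled speedup from Gustafson's Law: s + p * N with p = 1 - s."""
    parallel_fraction = 1.0 - serial_fraction
    return serial_fraction + parallel_fraction * n_processors


s = 0.05  # illustrative serial fraction
for n in (1, 16, 256, 4096):
    print(f"N = {n:>4}: scaled speedup = {gustafson_scaled_speedup(s, n):.2f}")
```

With 5% serial work, the scaled speedup on 16 processors is 15.25 and keeps growing linearly with N, because the parallel part of the workload grows together with the machine.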

Conceptual Workflow for Scaling Analysis

The following diagram illustrates the core logical relationship and decision process between the strong and weak scaling paradigms.

Application in Neuronal Network Simulation

For neuronal network simulations, the choice between strong and weak scaling depends on the scientific goal. Strong-scaling experiments are highly relevant for finding the limiting time-to-solution for a network model of a given, natural size [7] [1]. This is crucial when seeking to reduce the wall-clock time for long-running simulations, such as those studying learning or development. In contrast, weak-scaling experiments are employed when the aim is to scale up the network model itself—for instance, from a model of a single cortical column to a multi-area model of the entire cortex—in proportion to the available computational resources [7] [1].

A significant challenge in weak scaling for neuroscience is that scaling neuronal networks inevitably leads to changes in network dynamics, making comparisons of results obtained at different scales problematic [7] [1]. Therefore, strong scaling is often the preferred method for benchmarking the pure computational efficiency of a simulator on a scientifically relevant model size [7].

Table: Comparison of Scaling Paradigms for Neuronal Network Simulations

| Aspect | Strong Scaling | Weak Scaling |

|---|---|---|

| Problem Size | Fixed total problem (e.g., fixed number of neurons and synapses) [23] | Increases proportionally with processors (e.g., neurons per core is fixed) [23] |

| Primary Objective | Reduce time-to-solution for a specific model [22] [23] | Solve larger, more complex network models [22] |

| Governing Law | Amdahl's Law [21] | Gustafson's Law [21] |

| Performance Metric | Speedup for a fixed workload [23] | Efficiency in maintaining constant time per unit work [22] |

| Ideal Outcome | Linear reduction in time with added resources | Constant execution time with scaled-up problem size |

| Key Limitation | Serial fraction imposes a hard speedup limit [21] | Changing network dynamics with scale complicates scientific comparison [7] |

Experimental Protocols for Scaling Experiments

This section outlines a standardized, modular protocol for executing and analyzing scaling experiments, aligning with a reproducible benchmarking workflow [7].

General Benchmarking Workflow

A modular workflow decomposes the benchmarking endeavor into distinct segments to manage its complexity and foster reproducibility [7]. The diagram below outlines the key stages, from initial configuration to final analysis.

Protocol for Strong Scaling Experiments

Objective: To determine the reduction in time-to-solution for a fixed neuronal network model as the number of processing elements (cores/threads/MPI processes) is increased.

Model Configuration:

- Select a scientifically relevant neuronal network model. A common choice is a balanced random network of leaky integrate-and-fire (LIF) neurons with spike-timing-dependent plasticity (STDP) [1].

- Fix all model parameters, including the number of neurons, synaptic connectivity rules, and simulation duration. The total problem size must remain constant.

Resource Scaling:

- Start the experiment with a single processing element (or the minimum number required to run the model).

- Increase the number of processing elements in steps, ideally doubling the count at each step (e.g., 1, 2, 4, 8, 16, ...), up to the maximum available on the system [22].

- For each resource count, execute the simulation and record the wall-clock time for the simulation phase (excluding setup time, if measured separately) [7].

Data Collection and Analysis:

- Perform multiple independent runs (e.g., 3-5) for each resource count to account for system noise and variability. Remove outliers, then average the remaining results [22].

- For each resource count N, calculate the strong-scaling speedup: Speedup(N) = t(1) / t(N), where t(N) is the averaged wall-clock time on N processing elements.

- Plot the measured speedup and the ideal linear speedup against the number of processing elements.

- Fit the data to Amdahl's Law to estimate the serial fraction s of the code [21].

Table: Example Strong Scaling Results for a Julia Set Generator (Fixed Problem Size)

| Height (pixels) | Width (pixels) | Number of Threads | Time (sec) [21] |
|---|---|---|---|
| 10000 | 2000 | 1 | 3.932 |
| 10000 | 2000 | 2 | 2.006 |
| 10000 | 2000 | 4 | 1.088 |
| 10000 | 2000 | 8 | 0.613 |
| 10000 | 2000 | 12 | 0.441 |
| 10000 | 2000 | 16 | 0.352 |
| 10000 | 2000 | 24 | 0.262 |
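
The analysis steps of the strong-scaling protocol can be sketched in a few lines of Python using the timings from the table above. The function names are illustrative. The closed-form least-squares fit through the origin is one simple way to estimate the serial fraction s; it is not necessarily the fitting procedure used in [21].

```python
# Timings copied from the strong-scaling table above (threads -> seconds).
timings = {1: 3.932, 2: 2.006, 4: 1.088, 8: 0.613, 12: 0.441, 16: 0.352, 24: 0.262}


def strong_speedup(timings):
    """Return {N: t(1) / t(N)} for a fixed problem size."""
    t1 = timings[1]
    return {n: t1 / t for n, t in timings.items()}


def fit_amdahl_serial_fraction(timings):
    """Least-squares estimate of s in Amdahl's Law, S(N) = 1 / (s + (1 - s) / N).

    Rearranging gives 1/S(N) - 1/N = s * (1 - 1/N), i.e. a line through the
    origin with slope s, so s = sum(x * y) / sum(x * x).
    """
    speedup = strong_speedup(timings)
    counts = [n for n in timings if n > 1]  # N = 1 carries no information about s
    xs = [1.0 - 1.0 / n for n in counts]
    ys = [1.0 / speedup[n] - 1.0 / n for n in counts]
    return sum(x * y for x, y in zip(xs, ys)) / sum(x * x for x in xs)


speedup = strong_speedup(timings)
serial_fraction = fit_amdahl_serial_fraction(timings)

for n, s_n in speedup.items():
    print(f"N={n:2d}  speedup={s_n:6.2f}  ideal={n:2d}  efficiency={s_n / n:5.2f}")
print(f"estimated serial fraction s = {serial_fraction:.3f}")
print(f"implied speedup limit 1/s   = {1.0 / serial_fraction:.1f}")
```

For these timings the fit yields a serial fraction of about 0.03, which is consistent with the measured speedup falling increasingly short of the ideal line at higher thread counts.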

Protocol for Weak Scaling Experiments

Objective: To assess the ability to maintain a constant execution time per unit of work when the network model size and computational resources are scaled up proportionally.

Model Configuration:

- Select a baseline neuronal network model.

- Define the "unit of work." In neuronal network simulations, this is often the number of neurons simulated per processing element (e.g., 10,000 neurons per core) [24].

Problem and Resource Scaling:

- Start with the baseline model size on a single processing element (or a small base number).

- Increase the number of processing elements (e.g., 1, 2, 4, 8, ...).

- For each increase in resources, scale the total network size proportionally. For example, if the baseline is 10,000 neurons on 1 core, then 20,000 neurons on 2 cores, 40,000 neurons on 4 cores, and so on [21].

- Ensure other parameters, such as synaptic density (connections per neuron), remain constant.

Data Collection and Analysis:

- Perform multiple independent runs for each (problem size, resource count) configuration [22].

- For each run, record the wall-clock time.

- Calculate the weak-scaling efficiency: Efficiency(N) = t(1) / t(N), where t(N) is the wall-clock time on N processing elements with the proportionally scaled problem. Perfect weak scaling corresponds to an efficiency of 1.0 (100%), meaning the execution time stays constant as the problem scales [22].

- Plot the execution time and efficiency against the number of processing elements.

- Fit the data to Gustafson's Law to derive the scaled speedup [21].

Table: Example Weak Scaling Results for a Julia Set Generator (Scaled Problem Size)

| Height (pixels) | Width (pixels) | Number of Threads | Time (sec) [21] |
|---|---|---|---|
| 10000 | 2000 | 1 | 3.940 |
| 20000 | 2000 | 2 | 3.874 |
| 40000 | 2000 | 4 | 3.977 |
| 80000 | 2000 | 8 | 4.258 |
| 120000 | 2000 | 12 | 4.335 |
| 160000 | 2000 | 16 | 4.324 |
| 240000 | 2000 | 24 | 4.378 |
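
The weak-scaling analysis can be sketched in the same way from the table above. The snippet below computes the efficiency t(1) / t(N) and the scaled speedup N * t(1) / t(N), then estimates the serial fraction s in Gustafson's Law, S(N) = N - s (N - 1), with a least-squares fit through the origin. The function names and the choice of fit are assumptions made for illustration.

```python
# Timings copied from the weak-scaling table above (threads -> seconds).
# The image height, and hence the workload, grows in proportion to the threads.
timings = {1: 3.940, 2: 3.874, 4: 3.977, 8: 4.258, 12: 4.335, 16: 4.324, 24: 4.378}


def weak_efficiency(timings):
    """Return {N: t(1) / t(N)}; 1.0 means the runtime stayed constant."""
    t1 = timings[1]
    return {n: t1 / t for n, t in timings.items()}


def scaled_speedup(timings):
    """N times the baseline work is done in t(N), so S(N) = N * t(1) / t(N)."""
    return {n: n * e for n, e in weak_efficiency(timings).items()}


def fit_gustafson_serial_fraction(timings):
    """Least-squares estimate of s in Gustafson's Law, S(N) = N - s * (N - 1).

    Rearranging gives N - S(N) = s * (N - 1), a line through the origin.
    """
    speedup = scaled_speedup(timings)
    counts = [n for n in timings if n > 1]
    xs = [n - 1 for n in counts]
    ys = [n - speedup[n] for n in counts]
    return sum(x * y for x, y in zip(xs, ys)) / sum(x * x for x in xs)


efficiency = weak_efficiency(timings)
speedup = scaled_speedup(timings)
serial_fraction = fit_gustafson_serial_fraction(timings)

for n in timings:
    print(f"N={n:2d}  efficiency={efficiency[n]:5.3f}  scaled speedup={speedup[n]:6.2f}")
print(f"estimated serial fraction s = {serial_fraction:.3f}")
```

An efficiency slightly above 1.0 at two threads is within run-to-run noise, which is one reason the protocol asks for repeated runs per configuration.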

Benchmarking neuronal network simulations requires a combination of specialized software, hardware, and model specifications. The following table details key "research reagents" for this field.

Table: Essential Materials for Neuronal Network Performance Benchmarking

| Category | Item | Function / Relevance |
|---|---|---|
| Simulation Software | NEST [7] [1] | A primary simulator for large-scale networks of point neurons; commonly used in scaling studies. |
| Simulation Software | NEURON, Arbor [7] [1] | Simulators focused on networks of morphologically detailed neurons. |
| Simulation Software | GeNN, NeuronGPU [7] [1] | GPU-accelerated simulators for spiking neural networks. |
| Benchmarking Framework | beNNch [7] [1] | An open-source framework for configuration, execution, and analysis of benchmarks; promotes reproducibility. |
| Reference Network Models | HPC-benchmark Model [1] | A standard model based on balanced random networks with LIF neurons and STDP, used for upscaling demonstrations. |
| Reference Network Models | Brunel-type Models [1] | A class of balanced random network models with defined asynchronous and synchronous states. |
| Computational Resources | HPC Clusters [7] [1] | Provide the distributed computational power necessary for large-scale strong and weak scaling experiments. |
| Data Collection & Analysis | Wall-clock Time [22] | The primary performance metric, measured as time-to-solution. |
| Data Collection & Analysis | Performance Counters | Tools to measure hardware-specific metrics (e.g., FLOPs, memory bandwidth) for deep performance analysis. |

Implementing a Modular Benchmarking Workflow: From Theory to Practice

Modern computational neuroscience strives to develop complex network models to explain brain dynamics in health and disease. The development of state-of-the-art simulation engines relies critically on benchmark simulations that assess time-to-solution for scientifically relevant network models across various hardware and software configurations [7] [1]. However, maintaining comparability of these benchmark results is notoriously difficult due to a lack of standardized specifications for measuring simulator performance on high-performance computing (HPC) systems [7]. The benchmarking endeavor is inherently complex, encompassing five main dimensions: "Hardware configuration," "Software configuration," "Simulators," "Models and parameters," and "Researcher communication" [7]. Motivated by this challenge, a generic modular workflow was defined to decompose the process into unique segments, leading to the development of the open-source framework beNNch [7]. This framework records benchmarking data and metadata in a unified way to foster reproducibility, guiding development toward more efficient simulation technology. This Application Note details the conceptual framework and provides explicit protocols for its implementation.

The Modular Workflow Architecture

The modular workflow conceptualizes the benchmarking process as a series of discrete, interconnected stages. This decomposition standardizes the procedure, enhances reproducibility, and allows individual components to be developed or updated independently. The entire process, from defining the experiment to analyzing the results, is encapsulated within a structured pathway.

The core modules form a linear pipeline of configuration, execution, data collection, and analysis, in which each module consumes the output of the one before it.

Core Modules and Experimental Protocols

This section provides a detailed breakdown of each module in the conceptual workflow, including the specific experimental protocols for implementing performance benchmarks.

Module 1: Configuration

The configuration module establishes the foundation of the benchmark, defining the "what" and "where" of the experiment.

Protocol 1.1: Model Selection and Parameterization

- Objective: To select a scientifically relevant network model that tests specific simulator capabilities.

- Procedure:

  - Choose a Benchmark Model: Frequently used models include the HPC-benchmark model with leaky integrate-and-fire (LIF) neurons and spike-timing-dependent plasticity (STDP) [7] [1], or balanced random networks [7] [1] inspired by cortical dynamics.

  - Define Network Scale: Determine the network size (number of neurons and synapses). For weak-scaling experiments, plan to scale the network size proportionally with computational resources. For strong-scaling experiments, keep the model size fixed while increasing resources [7] [1].

  - Parameterize the Model: Specify all neuron model parameters, synapse model parameters, and connectivity rules. Using standardized model descriptions, like those provided in supplementary materials of existing studies [7], is critical for comparability.

Protocol 1.2: Hardware and Software Environment Setup

- Objective: To configure the hardware and software environment for reproducible benchmark execution.

- Procedure:

  - Hardware Specification: Document the HPC system, compute node architecture, number of cores per node, amount of memory, and interconnect type [7].

  - Software Environment: Record the simulator (e.g., NEST, NEURON, GeNN) [7] [2] [25] and its version, along with the compiler version, MPI library, and operating system. Using container technologies (e.g., Docker, Singularity) is recommended to ensure a consistent environment.

Module 2: Execution

This module involves the automated running of the benchmark simulations based on the configurations defined in Module 1.

Protocol 2.1: Running Scaling Experiments

- Objective: To measure simulator performance as a function of computational resources.

- Procedure:

  - Job Submission: Launch simulation jobs on the HPC system using a workload manager (e.g., SLURM). The beNNch framework can automate this process [7].

  - Scaling Sweep: For a strong-scaling experiment, run the same model on 1, 2, 4, 8, ..., up to a maximum number of cores or nodes. For a weak-scaling experiment, increase the model size in proportion to the number of cores [7].

  - Replication: Execute each configuration multiple times to account for performance variability inherent in HPC systems.
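
As a minimal sketch of the scaling sweep and replication steps, the following Python snippet enumerates the run configurations for a strong- or weak-scaling experiment. The `RunConfig` class and `scaling_sweep` function are hypothetical names introduced for illustration; they are not part of beNNch or of any workload manager, and actual job submission is left out.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class RunConfig:
    """One benchmark job: hypothetical container, not a beNNch data structure."""
    mode: str        # "strong" or "weak"
    cores: int       # number of processing elements for this job
    neurons: int     # total network size for this job
    repetition: int  # index of the repeated run


def scaling_sweep(mode, base_neurons, max_cores, repetitions=3):
    """List all jobs for a sweep over 1, 2, 4, ... cores up to max_cores."""
    if mode not in ("strong", "weak"):
        raise ValueError(f"unknown scaling mode: {mode!r}")
    core_counts = []
    cores = 1
    while cores <= max_cores:
        core_counts.append(cores)
        cores *= 2
    configs = []
    for cores, rep in product(core_counts, range(repetitions)):
        # Strong scaling keeps the model fixed; weak scaling grows it with cores.
        neurons = base_neurons if mode == "strong" else base_neurons * cores
        configs.append(RunConfig(mode, cores, neurons, rep))
    return configs


strong = scaling_sweep("strong", base_neurons=10_000, max_cores=16)
weak = scaling_sweep("weak", base_neurons=10_000, max_cores=16)
for cfg in weak[::3]:
    print(cfg)
```

Each `RunConfig` would then be turned into one job script for the workload manager, so that the sweep definition stays independent of the submission mechanism.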

Module 3: Data Collection

This module focuses on the systematic recording of performance data and all associated metadata.

Protocol 3.1: Performance and Metadata Recording

- Objective: To collect all data necessary for analyzing performance and reproducing the benchmark.

- Procedure:

  - Record Performance Metrics: For each simulation run, log the time-to-solution, broken down into network setup time and simulation time [7]. Optionally, measure memory consumption and energy-to-solution [7] [1].

  - Capture Metadata: Automatically record all metadata, including the complete software environment, hardware specifications, model parameters, and benchmark launch parameters [7]. This is essential for reproducibility.
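
The recording step can be sketched with the Python standard library alone. The example below times a setup phase and a simulation phase separately and stores them next to basic environment metadata in one JSON record. The record layout, the `run_benchmark` function, and the placeholder phases are assumptions made for illustration; this is not the metadata format used by beNNch, and a real benchmark would also capture simulator, compiler, and MPI versions.

```python
import json
import platform
import sys
import time
from datetime import datetime, timezone


def timed(phase_fn):
    """Run one phase and return its wall-clock duration in seconds."""
    start = time.perf_counter()
    phase_fn()
    return time.perf_counter() - start


def run_benchmark(build_network, simulate, model_params, launch_params):
    """Time both phases and bundle the results with environment metadata."""
    record = {
        "timestamp_utc": datetime.now(timezone.utc).isoformat(),
        "software": {"python": sys.version.split()[0], "os": platform.platform()},
        "hardware": {"machine": platform.machine(), "hostname": platform.node()},
        "model_params": model_params,
        "launch_params": launch_params,
    }
    record["setup_time_s"] = timed(build_network)
    record["simulation_time_s"] = timed(simulate)
    record["time_to_solution_s"] = (
        record["setup_time_s"] + record["simulation_time_s"]
    )
    return record


# Placeholder phases standing in for a real simulator's build and run calls.
record = run_benchmark(
    build_network=lambda: sum(range(10_000)),
    simulate=lambda: sum(range(100_000)),
    model_params={"neurons": 10_000},
    launch_params={"cores": 1, "repetition": 0},
)
serialized = json.dumps(record, indent=2, sort_keys=True)
print(serialized)
```

Keeping timings and metadata in a single record means a result can never be separated from the configuration that produced it.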

Module 4: Analysis

The final module transforms the raw collected data into interpretable results and insights.

Protocol 4.1: Performance Analysis and Visualization

- Objective: To evaluate the efficiency and scaling performance of the simulator.

- Procedure:

  - Calculate Speedup and Efficiency: For strong-scaling experiments, calculate the parallel speedup and efficiency. For weak-scaling, calculate the efficiency relative to the baseline run [7].

  - Identify Bottlenecks: Analyze the breakdown of simulation time to identify performance bottlenecks, such as communication overhead or load imbalance [7].

  - Generate Figures: Create standard plots, such as execution time vs. number of cores and scaling efficiency plots.
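
A minimal sketch of the first analysis step for a strong-scaling experiment with repeated runs is shown below. The timings are placeholder values chosen for illustration, not measurements from any simulator, and the `summarize` function is a hypothetical helper. The baseline is taken as the smallest core count present, which also covers models that cannot run on a single processing element.

```python
from statistics import mean, stdev

# Placeholder wall-clock times in seconds (cores -> repeated runs).
# Illustrative values only, not measurements from any simulator.
runs = {
    1: [100.0, 102.0, 98.0],
    2: [52.0, 51.0, 53.0],
    4: [28.0, 27.0, 29.0],
}


def summarize(runs):
    """Aggregate repeated runs and derive strong-scaling speedup and efficiency."""
    baseline_cores = min(runs)
    t_base = mean(runs[baseline_cores])
    summary = {}
    for cores in sorted(runs):
        t_mean = mean(runs[cores])
        speedup = t_base / t_mean
        summary[cores] = {
            "mean_s": t_mean,
            "std_s": stdev(runs[cores]),
            "speedup": speedup,
            # Efficiency relative to the baseline core count (1.0 is ideal).
            "efficiency": speedup * baseline_cores / cores,
        }
    return summary


summary = summarize(runs)
for cores, row in summary.items():
    print(
        f"cores={cores}  mean={row['mean_s']:.1f}s  std={row['std_s']:.1f}s  "
        f"speedup={row['speedup']:.2f}  efficiency={row['efficiency']:.2f}"
    )
```

Reporting the standard deviation alongside the mean makes it visible whether differences between configurations exceed the run-to-run variability.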

Quantitative Benchmarking Data

The following tables summarize key metrics and model parameters relevant to benchmarking neuronal network simulators.

Table 1: Key Performance Metrics for Neuronal Network Simulation Benchmarks [7] [1]

| Metric | Description | Measurement Goal |
|---|---|---|
| Time-to-Solution | Total wall-clock time to complete a simulation. | Measure raw simulation speed. |
| Setup Time | Time spent constructing the network and creating connections. | Identify I/O and network creation bottlenecks. |
| Simulation Time | Time spent propagating the model state and processing spikes. | Assess core simulation engine performance. |
| Energy-to-Solution | Total energy consumed (Joules) to complete a simulation. | Evaluate power efficiency, crucial for neuromorphic systems [1]. |
| Memory Consumption | Peak memory used during simulation. | Determine hardware requirements for large-scale models. |
| Scaling Efficiency | Parallel efficiency (strong-scaling) or scaled speedup (weak-scaling). | Quantify how well the simulator utilizes parallel resources. |

Table 2: Example Parameters for a Benchmark Network Model (based on the HPC-benchmark model) [7] [1]

| Parameter | Value / Type | Description |
|---|---|---|
| Neuron Model | Leaky Integrate-and-Fire (LIF) | A simple, computationally efficient point neuron model. |
| Synapse Model | Alpha-shaped postsynaptic currents, STDP | Models synaptic dynamics and plasticity. |
| Network Size | Scalable (e.g., 10^4 - 10^8 neurons) | Determines computational load. |
| Excitatory:Inhibitory Ratio | 4:1 (i.e., 80% excitatory, 20% inhibitory) | Mimics the balance found in cortical networks. |
| Connectivity | Random, sparse (e.g., 10^3 - 10^4 connections per neuron) | Defines the network structure and memory footprint. |
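
To make the arithmetic behind these parameters concrete, the sketch below derives population sizes and the total synapse count from a network size, a per-neuron connection count, and the 4:1 excitatory-to-inhibitory ratio. The `NetworkSpec` class is a hypothetical helper for illustration, and the example values are simply the lower ends of the ranges in the table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NetworkSpec:
    """Hypothetical container for the scale-defining parameters of a model."""
    neurons: int
    connections_per_neuron: int
    excitatory_fraction: float = 0.8  # 4:1 excitatory:inhibitory ratio

    @property
    def excitatory(self):
        return round(self.neurons * self.excitatory_fraction)

    @property
    def inhibitory(self):
        return self.neurons - self.excitatory

    @property
    def synapses(self):
        # Total synapse count, the main driver of memory footprint.
        return self.neurons * self.connections_per_neuron


spec = NetworkSpec(neurons=10_000, connections_per_neuron=1_000)
print(f"excitatory neurons: {spec.excitatory}")
print(f"inhibitory neurons: {spec.inhibitory}")
print(f"total synapses:     {spec.synapses}")
```

Because the synapse count is the product of the two scale parameters, it grows much faster than the neuron count when both are increased, which is why connectivity dominates the memory footprint of large models.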

The Scientist's Toolkit: Essential Research Reagents

Successful implementation of the modular benchmarking workflow requires a suite of software tools and resources.

Table 3: Key Research Reagent Solutions for Neuronal Network Benchmarking

| Item | Function in Benchmarking | Reference |

|---|---|---|

| beNNch Framework | Open-source software for configuring, executing, and analyzing benchmarks in a unified and reproducible way. | [7] |

| NEST Simulator | A primary simulator for large-scale spiking network models; often used as a reference in benchmark studies. | [7] [2] [25] |